Monday, March 16, 11:00 a.m. PT

Live Updates From the GTC Keynote

Welcome to GTC 2026

A capacity crowd at the SAP Center, all waiting for the same thing.

The keynote opened with a video framing the token as the basic unit of modern AI — the building block behind systems used for scientific discovery, virtual worlds and machines operating in the physical world.

NVIDIA founder and CEO Jensen Huang then took the stage to raucous applause from the crowd.

He opened by thanking the pregame show hosts and highlighting the partners participating in the show, along with the 450+ sponsors, 1,000 sessions and 2,000 speakers.

“This conference is going to cover every single layer of the five-layer cake of artificial intelligence,” Huang said.

He marked the 20th anniversary of CUDA — describing it as the “flywheel” driving accelerated computing and the platform that supports “every single phase of the AI lifecycle.”

Huang turned to GeForce, describing NVIDIA as “the house that GeForce made,” the platform that brought CUDA to the world. He walked through GeForce’s history, tying it all back to AI, and introduced DLSS 5, launching a video showing how 3D-guided neural rendering enables real-time, photoreal 4K performance on local hardware. Learn more in the press release.

Next, Huang walked through the field of data processing and how it’s being accelerated for the era of AI. He detailed work with IBM, Dell, Google Cloud, AWS, Microsoft Azure, Oracle and CoreWeave to serve their customers.

Huang then surveyed the accelerated computing ecosystem — automotive, financial services, healthcare, industrial, media, quantum, retail, robotics and telecom.

“All of these different vectors of AI have platforms that NVIDIA provides,” Huang said, highlighting NVIDIA’s broad range of CUDA-X libraries, which he described as the “crown jewels” of the company.

Huang highlighted the rise of “AI natives”: brand-new companies, some well known, such as OpenAI and Anthropic, and some still emerging. “This last year, it just skyrocketed,” Huang said, citing $150 billion of investment into venture startups and walking through the history of the technologies that sparked the latest boom.

As a result of this boom, the computing demand for NVIDIA GPUs is “off the charts,” he said. “I believe computing demand has increased by 1 million times over the last few years.”

As a result, Huang said he now sees at least $1 trillion in revenue from 2025 through 2027.

Vera Rubin and Beyond — A Generational Leap in Computing

Huang noted that NVIDIA’s token cost is the best in the world, thanks to extreme codesign, reveling in one analyst’s description of NVIDIA as “the inference king.” “This is the incredible power of extreme codesign,” Huang said, referencing a process where software and silicon are designed in tandem.

The next step: NVIDIA Vera Rubin, a new full-stack computing platform comprising seven chips, five rack-scale systems and one supercomputer for agentic AI. The platform includes the new NVIDIA Vera CPU and BlueField-4 STX storage architecture.

“When we think Vera Rubin, we think the entire system, vertically integrated, complete with software, extended end to end, optimized as one giant system,” Huang said, walking the audience through the insides of new systems built on these technologies.

Looking beyond Vera Rubin, NVIDIA’s next major architecture is Feynman.

It will include a new CPU, NVIDIA Rosa, named for Rosalind Franklin, whose X‑ray crystallography revealed the structure of DNA and reshaped modern biology, Huang said. As Franklin exposed the hidden architecture of life, Rosa is built to move data, tools and tokens efficiently across the full stack of agentic AI infrastructure.

Rosa anchors a new platform that pairs LP40, NVIDIA’s next‑generation LPU, with NVIDIA BlueField‑5 and CX10, connected through NVIDIA Kyber for both copper and co‑packaged optics scale‑up, and NVIDIA Spectrum‑class optical scale‑out, Huang said. Together, the Feynman generation advances every pillar of the AI factory: compute, memory, storage, networking and security.

And to help accelerate the scale-out of new AI capacity, Huang announced the NVIDIA Vera Rubin DSX AI Factory reference design and the NVIDIA Omniverse DSX Blueprint. DSX Air, part of the broader DSX platform, lets companies simulate AI factories in software before building them in the physical world.

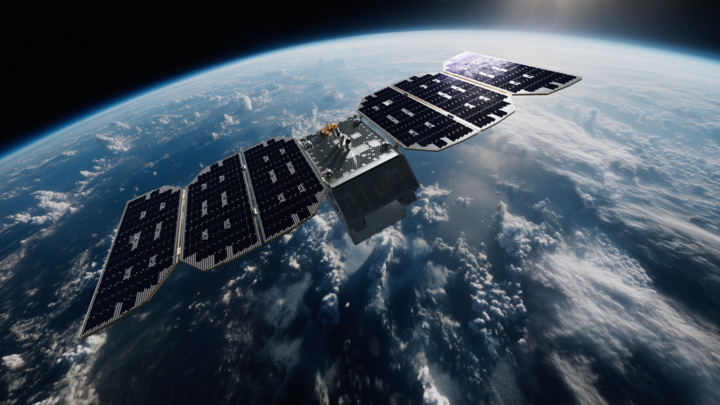

Finally, Huang announced NVIDIA is going to space. Its new Vera Rubin architecture honors the astronomer whose work revealed dark matter, and future systems like NVIDIA Space-1 Vera Rubin are being designed to bring AI data centers into orbit, extending accelerated computing from Earth to space.

NVIDIA NemoClaw for OpenClaw, Nemotron Coalition

Huang spotlighted OpenClaw, an open source project from developer Peter Steinberger that he called “the most popular open source project in the history of humanity.”

“OpenClaw has open sourced the operating system of agentic computers … Now, OpenClaw has made it possible for us to create personal agents,” Huang said.

With a single command, developers can pull down OpenClaw, stand up an AI agent and begin extending it with tools and context. NVIDIA is announcing support for OpenClaw across its platform, making it easier for developers to safely build, deploy and accelerate AI agents on NVIDIA‑powered infrastructure.

Every single company in the world today has to have an OpenClaw strategy, Huang said.

To ensure this technology can be deployed securely inside enterprises, Huang introduced the NVIDIA OpenShell runtime and the NVIDIA NemoClaw stack — combining policy enforcement, network guardrails and privacy routing. These technologies can serve as “the policy engine of all the SaaS companies in the world,” Huang said.

In addition, NVIDIA is expanding its open model ecosystem with a new Nemotron Coalition, rallying partners around six frontier model families: NVIDIA Nemotron (language and reasoning), NVIDIA Cosmos (world and vision), NVIDIA Isaac GR00T (general‑purpose robotics), NVIDIA Alpamayo (autonomous driving), NVIDIA BioNeMo (biology and chemistry) and NVIDIA Earth‑2 (weather and climate).

Physical AI

NVIDIA is extending AI from digital agents into physical AI that can navigate the real world.

Huang said NVIDIA’s robotaxi‑ready platform is drawing new automaker partners, including BYD, Hyundai, Nissan and Geely.

He also highlighted a partnership with Uber to deploy these vehicles into its ride‑hailing network.

Beyond automakers, NVIDIA is working with industrial software giants and robotics leaders such as ABB, Universal Robots and KUKA to integrate its physical AI models and simulation tools, enabling deployment of smarter robots on manufacturing lines, and with telecom providers like T‑Mobile as base stations evolve into edge AI platforms.

That’s a Wrap

Huang capped off his keynote with a surprise visit from Olaf, the snowman from Disney’s Frozen, who appeared to walk straight off a digital screen and onto the stage.

“Ladies and gentlemen, Olaf,” Huang said, as the character waddled out, driven by NVIDIA’s physical AI stack, the Newton physics engine and NVIDIA Omniverse-powered simulation.

“Olaf, how are you? I know because I gave you your computer — Jetson,” Huang joked.

When Olaf asked what that was, Huang replied, “Well, it’s in your tummy … and you learned how to walk inside Omniverse.”

The demo underscored Huang’s broader point: everything on display — from humanoid robots to animated characters — had been simulated, not pre-rendered.

Huang closed by recapping the themes (inference, the AI factory, OpenClaw, physical AI and robotics), then handed the stage to a musical ensemble: singing robots, a digital Jensen avatar and an animated lobster, performing a campfire song.

“All right, have a great GTC,” Huang said, exiting stage left as Olaf lingered, hamming it up for the crowd as he sank back beneath the stage through a trap door.

Read all NVIDIA news from GTC on the online press kit.

Build an agent of your own at NVIDIA’s build-a-claw event in the GTC Park, March 16-19 — 1-5 p.m. on Monday, and 8 a.m.-5 p.m. on Tuesday through Thursday — to customize and deploy a proactive, always-on AI assistant.

Wednesday, March 11, 9 a.m. PT

Build-a-Claw at GTC Park

GTC attendees can be among the first to get their hands on a “claw” — or long-running agent — using OpenClaw, the fastest-growing open source project in history.

Stop by NVIDIA’s build-a-claw event in the GTC Park March 16-19, between 1 p.m.-5 p.m. Monday and anytime between 8 a.m.-5 p.m. Tuesday through Thursday, to customize and deploy a proactive, always-on AI assistant.

With support from NVIDIA experts, GTC attendees can quickly have an AI agent at their fingertips. Whether technical or just curious, participants will name an agent, define its personality and grant it access to the tools it needs. Think of it as the personal assistant you’ve always wanted, reachable via your preferred messaging app.

To run the custom agent, use cloud compute provided onsite, or harness local accelerated computing by bringing your NVIDIA DGX Spark or GeForce RTX laptop, with no personal data on the device. DGX Spark systems will also be available to buy on site from the NVIDIA Gear Store and Micro Center.

These always-on AI assistants can be applied to virtually any task, including managing a calendar, suggesting vacation destinations, recommending new workout routines and coding a useful app. Make it a specialist or a generalist: it’ll continuously learn new skills that it’s directed toward and prompt the user with new findings.

To learn more, explore NVIDIA’s new OpenClaw Playbook: a step‑by‑step guide to run OpenClaw on DGX Spark. This playbook helps developers build always-on, local‑first AI agents that work directly with their files, apps and workflows without relying on the cloud.

Check back for updates and see the full lineup of events and activities at GTC.

Physical AI

Monday, March 16, 1:30 p.m. PT

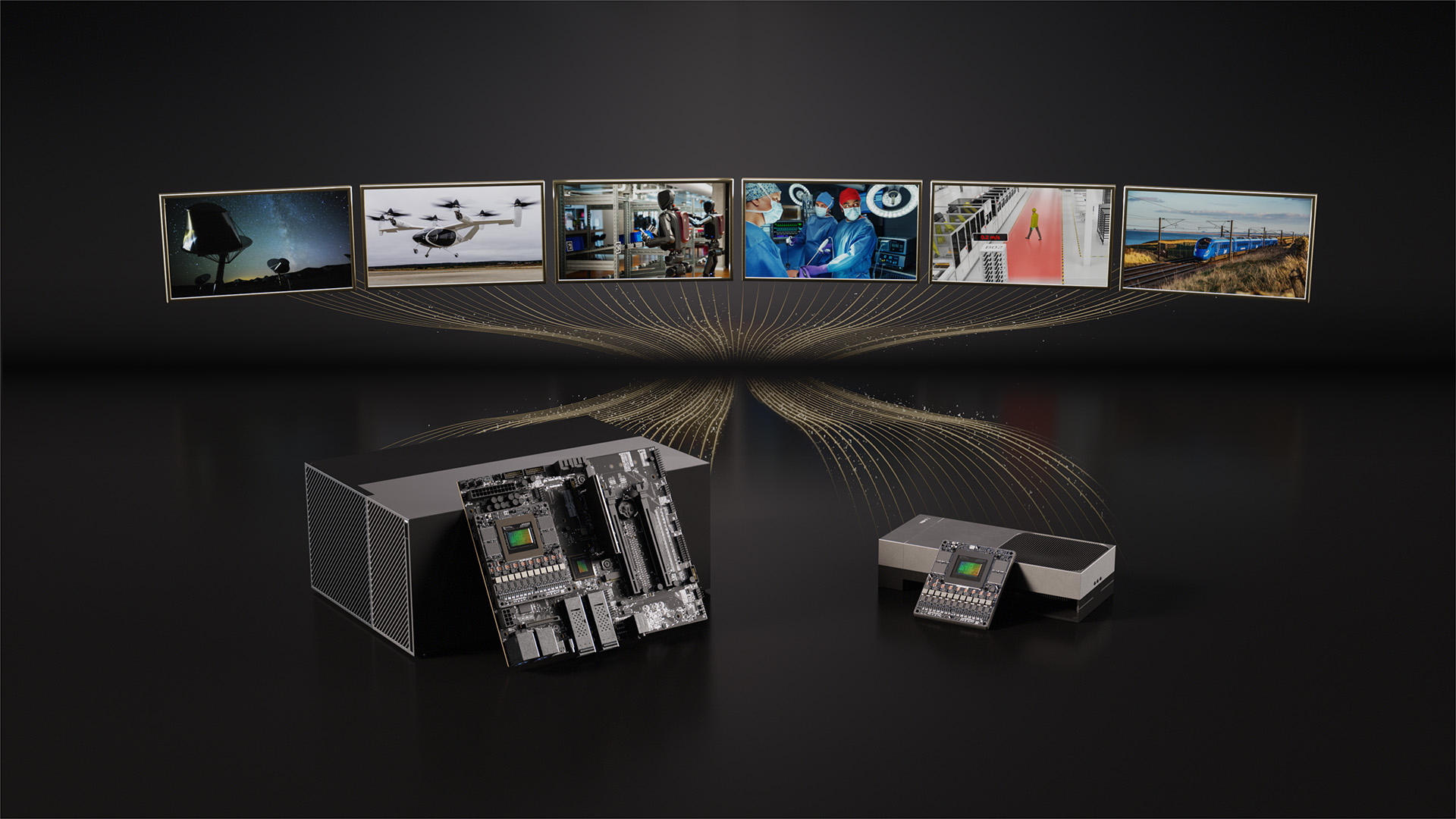

NVIDIA IGX Thor Now Generally Available, Bringing Real-Time Physical AI to the Industrial Edge

As industries move beyond rigid automation toward physical AI, they need a new class of intelligent edge computing devices capable of real-time sensing and inference to power autonomous, safety-critical machines in complex environments.

To meet this demand, NVIDIA IGX Thor — a powerful, industrial-grade platform that delivers real-time physical AI at the edge with high-speed sensor processing, enterprise-grade reliability and functional safety — is now generally available.

Industry Leaders Scale Physical AI With IGX Thor

NVIDIA IGX Thor boosts edge applications in construction, manufacturing, logistics, healthcare and life sciences, and even space exploration.

Caterpillar is developing an in-cabin conversational AI assistant, powered by IGX Thor, to enhance worker productivity and safety, and Hitachi Rail is using IGX Thor to deploy advanced predictive maintenance and autonomous inspection systems on rail networks.

KION Group, the supply chain solutions company, is harnessing IGX Thor and the NVIDIA Halos Outside-In Safety workflow to enable “outside-in” perception, in which an AI agent augments autonomous robot functional safety mechanisms by using infrastructure-mounted cameras and dynamic virtual safety fences. Ecosystem partners like SICK are accelerating certification of autonomous industrial robots with sensor competence for safety-critical applications.

Agility and Hexagon Robotics are adopting IGX Thor for real-time AI reasoning and multimodal sensor fusion for their safe, humanoid robots.

Johnson & Johnson is adopting IGX Thor to power its Polyphonic digital surgery platform, bringing real-time AI inference to the operating room. KARL STORZ is using IGX Thor to develop next-generation endoscopy and imaging tools for more accurate diagnoses. Medtronic is evaluating IGX Thor, and LEM Surgical and Horizon Surgical Systems are adopting IGX Thor to deliver precision and functional safety in surgical robot systems.

Built on the NVIDIA Holoscan platform, these systems can process and orchestrate multimodal sensor data — including video, imaging and device telemetry — enabling low-latency AI pipelines required for next-generation, software-defined medical devices and intelligent operating rooms.

Planet Labs is adopting IGX Thor to transform terabytes of multidimensional satellite data into actionable intelligence in orbit, at lower costs. And researchers at CERN are using IGX Thor to run advanced physics-inspired AI models, processing massive data streams at high throughput.

Expanding Ecosystem, Faster Time to Deployment in Physical AI

Beyond performance and safety, industrial AI requires production-ready systems, multimodal sensors and reliable actuators.

Analog Devices, Infineon, NXP Semiconductors, STMicroelectronics and Texas Instruments are integrating radar devices, sensors and motor controllers into the NVIDIA Isaac Sim framework and using NVIDIA Holoscan Sensor Bridge to accelerate the integration of next-generation sensors and actuators for faster, safer and smarter physical AI systems.

Leopard Imaging, D3 Embedded, Sensing and e-con Systems have introduced Ethernet-based camera modules powered by Holoscan Sensor Bridge, enabling low-latency sensor data streaming directly to the GPU for real-time AI processing.

Advantech, ASRock Rack, NEXCOM, Connect Tech, Onyx, Inventec and Yuan are building industrial-grade and medical-grade IGX Thor systems with enterprise performance, flexible input-output and custom configurations for different applications.

Barco, Cosmo and XRlabs are adopting IGX Thor and NVIDIA Holoscan to build medical-grade, off-the-shelf edge AI platforms for the medtech industry, enabling device manufacturers to accelerate development and deploy production-ready, medical-certified clinical solutions using NVIDIA’s full-stack accelerated computing and AI software.

IGX Thor Developer Kits are available for purchase from worldwide distribution partners. The IGX T5000 module for embedded systems with functional safety and IGX 7000 board kit for high-performance workstations will be available later.

Watch the GTC keynote from NVIDIA founder and CEO Jensen Huang and explore physical AI, robotics and vision AI sessions.

Workstation

Monday, March 16, 1:30 p.m. PT

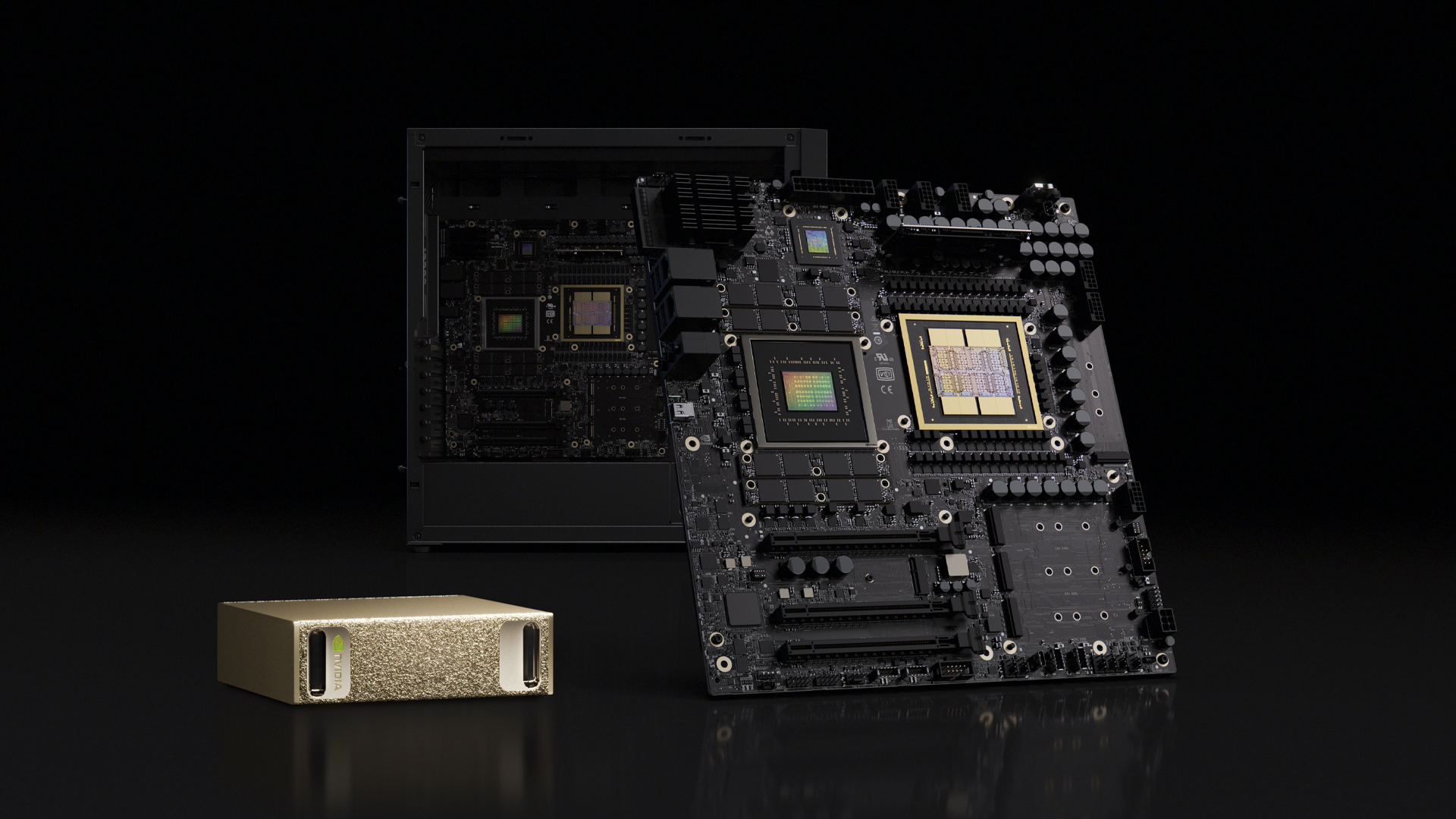

DGX Spark and DGX Station Paired With NVIDIA NemoClaw Deliver Full-Stack Platform for Autonomous Agents

Artificial intelligence is moving from simple, prompt-based tools to intelligent, long-running systems that reason, plan and act. These autonomous agents don’t just generate text. They can write code, call tools, analyze data, simulate outcomes and continuously improve.

To build and run always-on agents with supercomputing-intelligence, developers need the right infrastructure.

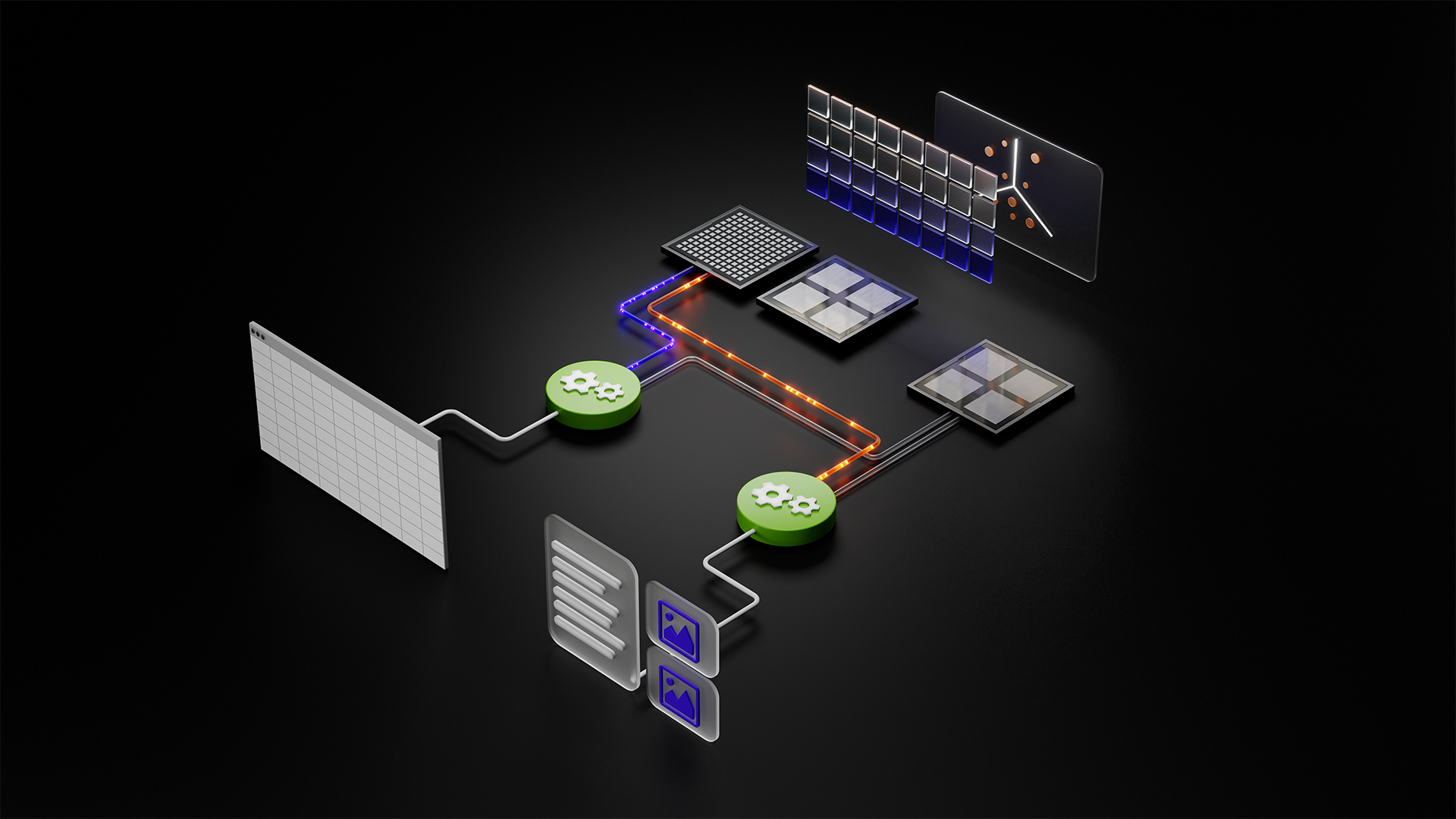

Pairing NVIDIA DGX Spark and NVIDIA DGX Station systems with the new NVIDIA NemoClaw open source stack provides the ultimate platform for locally developing and deploying autonomous, long-running agents, aka claws. The systems bring AI-factory-class performance directly to where intelligence is created — at the desk and inside the enterprise.

Securing Agentic AI With NemoClaw

NVIDIA NemoClaw is an open source stack that simplifies running always-on OpenClaw assistants — more safely and with a single command. It installs the NVIDIA OpenShell runtime, part of the NVIDIA Agent Toolkit, a secure environment for running autonomous agents, and open source models like NVIDIA Nemotron.

Enterprises deploying autonomous agents across proprietary workflows need governance, isolation and control. OpenShell defines how agents access data, use tools and operate within policy boundaries, providing the architectural foundation for secure, always-on AI systems.

Self-evolving agents need dedicated computing to build tools and software as they complete tasks autonomously. DGX Spark and DGX Station are ideal environments for running NemoClaw to build and validate agents with OpenClaw locally before scaling to data center AI factories.

DGX Spark: Scalable AI for Enterprise Teams

While DGX Station represents the most powerful deskside AI system, DGX Spark brings scalable AI infrastructure to domain teams across the enterprise.

With large local memory, strong performance and integration with NemoClaw, DGX Spark is ideal for autonomous agent development and deployment.

DGX Spark now supports clustering up to four systems in a unified configuration, creating a compact “desktop data center” with up to linear performance scaling — without the complexity of traditional rack deployments.

An upcoming software release for DGX Spark is expected to further strengthen orchestration and manageability, enabling faster iteration and smoother transitions from prototype to production.

DGX Spark supports the latest AI models, including NVIDIA Nemotron 3 and leading open models, ensuring developers can build on a modern, continuously evolving AI software stack.

Across industries, organizations are moving DGX Spark from evaluation environments into active enterprise deployment. Financial institutions are accelerating risk modeling. Healthcare researchers are compressing discovery timelines. Energy companies are optimizing operations. Media and telecommunications teams are building real-time content and communications workflows.

DGX Station: Data-Center-Class AI at the Desk

NVIDIA DGX Station, the world’s most powerful deskside supercomputer, has arrived for this new phase of long-thinking, autonomous agents.

Powered by the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip, DGX Station provides 748 gigabytes of coherent memory and up to 20 petaflops of AI compute performance. It connects a 72-core NVIDIA Grace CPU and NVIDIA Blackwell Ultra GPU through NVIDIA NVLink-C2C, creating a unified, high-bandwidth architecture built for frontier AI workloads.

It enables developers to run open models of up to 1 trillion parameters and to develop long-thinking autonomous agents directly from their desks.
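As a rough sanity check on how a 1-trillion-parameter model relates to 748 gigabytes of coherent memory, the sketch below estimates the memory needed for model weights alone at a few precisions. The precisions are illustrative assumptions and are not stated in the announcement. The helper name `weight_footprint_gb` is hypothetical, and the estimate ignores KV cache, activations and runtime overhead.

```python
def weight_footprint_gb(num_params: int, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


COHERENT_MEMORY_GB = 748       # figure quoted above for DGX Station
PARAMS = 1_000_000_000_000     # 1 trillion parameters

# Assumed precisions, chosen for illustration only.
for bits in (16, 8, 4):
    need = weight_footprint_gb(PARAMS, bits)
    verdict = "fits" if need <= COHERENT_MEMORY_GB else "does not fit"
    print(f"{bits:>2}-bit weights: {need:,.0f} GB -> {verdict}")
```

Under these assumptions, only the 4-bit case (about 500 GB of weights) fits within 748 GB, leaving the remainder for everything else a running model needs. Real requirements depend on the model architecture, the quantization format and the inference runtime.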

DGX Station can operate as a personal AI supercomputer or as a shared, on-demand compute node for teams. It supports air-gapped configurations, making it well suited for regulated industries and sovereign environments. Applications developed locally can move seamlessly to NVIDIA GB300 NVL72 systems in the data center or cloud without rearchitecting.

Industry leaders are already harnessing DGX Station to accelerate real-world innovation. Snowflake is using DGX Station to locally test its open source Arctic training framework. EPRI is using and testing it to advance AI-powered weather forecasting to strengthen grid reliability. Medivis is integrating vision language models with DGX Station into surgical workflows. Sungkyunkwan University is using the system to accelerate protein structure analysis. Microsoft Research and Cornell University are tapping DGX Station, enabling hands-on AI training at scale. Respo.Vision, WSP and 1X are deploying AI for advanced sports analytics, synthetic data training, autonomous agents and humanoid robotics.

Systems are available to order now and will begin shipping in the coming months from ASUS, Dell Technologies, GIGABYTE, MSI and Supermicro, and later in the year from HP.

One Architecture — From Desktop to AI Factory

DGX Station and DGX Spark ship preconfigured with the NVIDIA AI software stack, enabling developers to use familiar tools and move seamlessly between local development and large-scale infrastructure.

Developers can run and fine-tune state-of-the-art models on DGX Station, including OpenAI gpt-oss-120b, Google Gemma 3, Qwen3, Kimi K2.5, Mistral Large 3, DeepSeek V3.2 and NVIDIA Nemotron, and tap into a wide variety of familiar tools and platforms from 1X, Aible AI, Anaconda, Docker, Red Hat, JetBrains, Ollama, llama.cpp, ComfyUI, LM Studio, llm.c, Weights & Biases (acquired by CoreWeave), Odyssey, Roboflow, vLLM, SGLang, Unsloth, Learning Machine, Quali, Lightning AI and more.

By unifying chips, systems, networking and software into a coherent architecture, NVIDIA enables enterprises to build once and scale everywhere — from a deskside DGX Station to multi-node DGX Spark clusters to full AI factories.

Learn more about DGX Spark at NVIDIA GTC. Attend sessions on scalable autonomous agents and AI infrastructure, see live NemoClaw-enabled AI agents and multi-node Spark demos in the exhibit hall, or visit this webpage to access deployment guides and beta resources.

Developers can get started quickly on DGX Station and test a large sample of AI workflows. Learn more and order a DGX Station from an NVIDIA partner.

See notice regarding software product information.

Monday, March 16, 1:30 p.m. PT

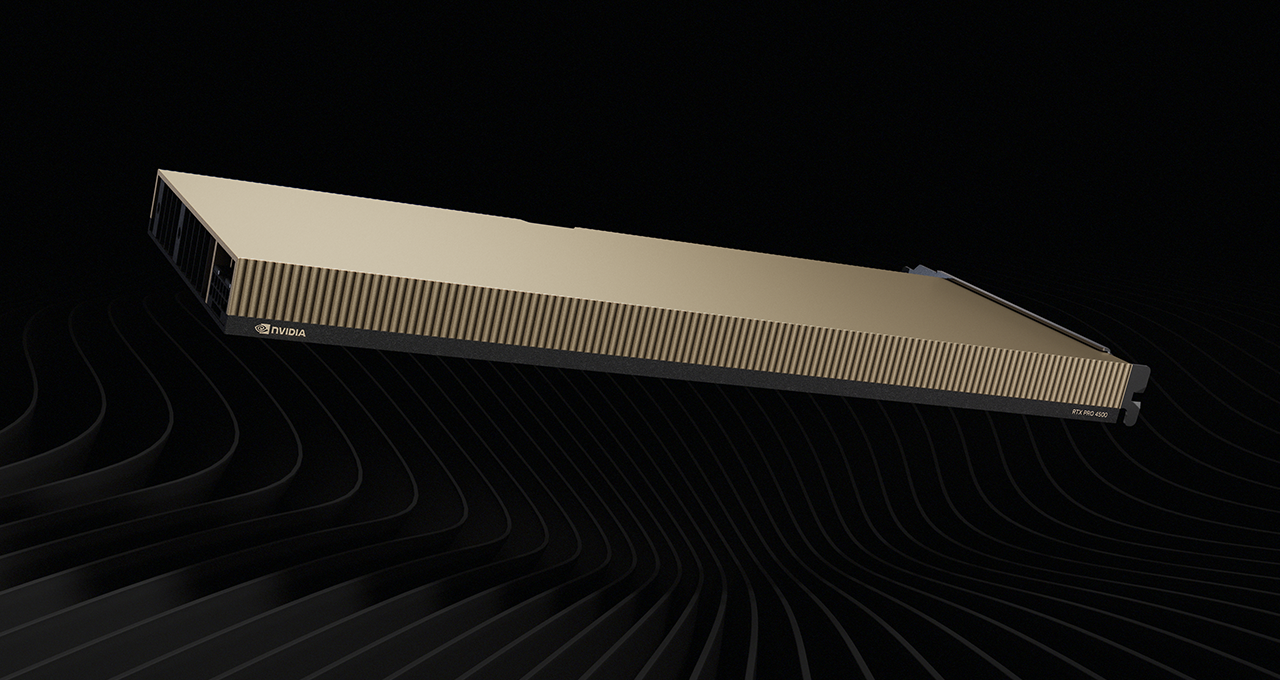

NVIDIA RTX PRO 4500 Blackwell Server Edition Brings Universal Acceleration From Data Center to the Edge

The NVIDIA RTX PRO 4500 Blackwell Server Edition brings GPU acceleration capabilities to the world’s most widely adopted enterprise data center and edge computing platforms, delivering a staggering leap in performance compared with traditional CPU-only servers: 100x performance for vision AI applications and up to 50x performance for vector databases.

Power-Efficient Performance for Enterprise Data Centers

For enterprises looking to optimize performance, efficiency and costs, RTX PRO 4500 Blackwell delivers breakthrough capabilities in a compact, 165-watt, single-slot form factor. Fifth-generation Tensor Cores, fourth-generation RTX technology and a fully integrated media pipeline make it an optimal platform for data processing, vision AI, small language model (SLM) inference, video processing and visual computing.

It’s also slated to support virtual workstations with the upcoming vGPU 20.0 release, and NVIDIA Multi-Instance GPU technology for hardware-based partitioning.

Additional highlights include:

- SLM AI inference gets up to a 10x performance boost for the NVIDIA Nemotron Nano 9B model vs. the NVIDIA L4 GPU.

- Apache Spark accelerated by NVIDIA cuDF provides up to 5x faster query performance and 10x better total cost of ownership on 10 terabytes of data vs. CPUs.

- Vision AI with the NVIDIA Metropolis platform provides up to 4x performance for video summarization with the NVIDIA Cosmos Reason 2 model vs. the NVIDIA L4 GPU.

Industry Leader Adoption

Leaders across multiple industries including IT services, life sciences and telecommunications are adopting the NVIDIA RTX PRO 4500 Blackwell GPU to power AI and visual computing workloads.

NTT DATA is accelerating client access to secure, AI-driven data insights from video captured at edge locations. In biotech, Pacific Biosciences plans to integrate RTX PRO 4500 Blackwell into its sequencing platforms to drive breakthroughs in genomic analysis. Nokia will deploy RTX PRO 4500 Blackwell in its AI‑RAN Base Stations, creating a distributed computing network that connects billions of devices for edge AI agents.

Comprehensive Ecosystem Support

Enterprises adopting RTX PRO 4500 Blackwell will benefit from broad software ecosystem support. This includes AI infrastructure software from Broadcom, Canonical, Red Hat, Nutanix and SUSE; data platforms including Databricks and Snowflake; AI platforms such as Dataiku, DataRobot and Ray on Anyscale; security software including Cisco AI Defense; and vision AI and analytics solutions such as Ambient AI, BriefCam and Ipsotek, an Eviden Business.

NVIDIA RTX PRO Servers with the RTX PRO 4500 Blackwell Server Edition GPU are also featured as part of the updated NVIDIA Enterprise AI Factory validated design and the NVIDIA AI Data Platform, a customizable reference design for building modern storage systems for enterprise agentic AI.

Storage partners integrating RTX PRO 4500 Blackwell as a part of their AI Data Platform include Cloudian, DDN, Dell Technologies, Everpure, Hammerspace, Hitachi Vantara, IBM, NetApp, Nutanix, VAST Data and WEKA.

Availability

RTX PRO Servers with RTX PRO 4500 Blackwell GPUs are available to order from system builders including Cisco, Dell, HPE, Lenovo and Supermicro — with additional server configurations from Aivres, ASRock Rack, ASUS, Compal, Foxconn, GIGABYTE, Inventec, Lanner, MiTAC Computing, MSI, Pegatron, Quanta Cloud Technology (QCT), Sanmina, Wistron and Wiwynn.

Akamai Cloud and Amazon Web Services (AWS) will be among the first cloud service providers to offer RTX PRO 4500 Blackwell Server Edition instances.

Learn more about the RTX PRO 4500 Blackwell Server Edition GPU.

Monday, March 16, 1:30 p.m. PT

NVIDIA Partners Unveil New NVIDIA RTX PRO Blackwell-Based Workstations

At GTC, NVIDIA partners unveiled a new wave of AI‑ready workstations that pair NVIDIA RTX PRO Blackwell GPUs with the Intel Xeon 600 processors for workstations. These refreshed designs power workflows from everyday AI exploration to demanding 3D design and simulation.

Lenovo is expanding its ThinkPad P series mobile workstations with the ThinkPad P14s i Gen 7, ThinkPad P16s Gen 5 AMD, ThinkPad P16s i Gen 5 and ThinkPad P1 Gen 9, as well as its ThinkStation lineup with the powerful new ThinkStation P5 Gen 2, configurable with up to two RTX PRO 6000 Blackwell Max-Q Workstation Edition GPUs.

Dell is introducing a modernized Dell Pro Precision portfolio with Dell Pro Precision 5 and 7 Series mobile workstations and Dell Pro Precision 9 T2, T4 and T6 desktop workstations.

HP plans to future-proof HP Z desktop workstations to support future NVIDIA GPU generations. Learn more at HP Imagine on March 24.

This new generation of workstations gives enterprises more flexibility in how they refresh fleets — whether prioritizing mobility, deskside performance or maximum expandability. RTX PRO Blackwell GPUs with the latest drivers provide day-zero model readiness — including for NVIDIA Nemotron open models and key community models — so teams can begin experimenting with and deploying AI workloads right away.

Software providers such as Ollama, SGLang and LM Studio offer models and tools tuned for RTX PRO, enabling developers and creators to run advanced AI workloads directly on these new workstations using familiar workflows.

Securing Agentic AI Workflows Locally With NVIDIA NemoClaw

As AI moves from simple prompts to agentic AI — long-running systems that reason, plan and act — developers need secure infrastructure to build always-on AI assistants. These new workstations provide an ideal desktop platform for the NVIDIA NemoClaw open source stack, which is designed for safely developing and deploying autonomous, long-running agents.

Harnessing up to 4,000 TOPS of local AI compute and 96 gigabytes of GPU memory on the RTX PRO 6000 Blackwell Workstation Edition and RTX PRO 6000 Blackwell Max-Q Workstation Edition GPUs, NemoClaw simplifies running OpenClaw assistants safely. As part of the NVIDIA Agent Toolkit, it installs the NVIDIA OpenShell runtime — a secure environment for running autonomous agents and open source models like NVIDIA Nemotron. This gives enterprises the governance, control and privacy required to tackle complex business tasks entirely on premises.

Learn more and see these RTX PRO‑powered workstations in action at GTC in the NVIDIA demo booth and at partner booths (Dell 721, HP 1931 and Lenovo 431).

Cloud

Monday, March 16, 1:30 p.m. PT

NVIDIA and Amazon Web Services Expand Compute Capacity in the Agentic AI Era

NVIDIA and Amazon Web Services (AWS), an Amazon.com company, are announcing an expanded partnership to deploy NVIDIA AI infrastructure to support growing compute demand for every industry in the age of agentic AI. AWS will deploy NVIDIA AI infrastructure including LPUs and more than 1 million NVIDIA GPUs.

This partnership will enable the large-scale deployment of NVIDIA AI infrastructure across AWS’s compute portfolio. The expanded infrastructure will span the full NVIDIA AI computing stack, including the NVIDIA Blackwell and Rubin GPU architectures, NVIDIA RTX PRO Blackwell Server Edition GPUs for enterprise AI workloads and NVIDIA Groq 3 LPUs for ultralow-latency inference. AWS and NVIDIA are also collaborating on Spectrum networking and other infrastructure areas.

The deployment of more than 1 million NVIDIA GPUs will begin this year across AWS’s global cloud regions. Built on NVIDIA accelerated computing and AWS’s global cloud platform, these deployments will enable AWS AI factories to operate as unified compute engines — delivering unmatched efficiency, performance and security for training and deploying next-generation AI systems.

By integrating NVIDIA’s accelerated computing with AWS’s advanced cloud services and infrastructure technology, the companies aim to provide enterprises, startups and research institutions with the infrastructure needed to build and scale agentic AI systems — capable of reasoning, planning and acting autonomously across complex workflows. Together, the companies will deliver a significant leap forward in the speed, scale and reliability of AI infrastructure worldwide.

Monday, March 16, 1:30 p.m. PT

NVIDIA and AWS Collaborate to Expand GPU-Accelerated Solutions

NVIDIA and Amazon Web Services (AWS) are expanding their collaboration to extend the capabilities of NVIDIA-powered data processing on AWS, as well as adding support for the NVIDIA Nemotron family of open models.

Since 2010, NVIDIA and AWS have collaborated to deliver large-scale, cost-effective and flexible GPU-accelerated solutions spanning infrastructure, software and services to offer a full-stack suite that accelerates time to solution when building and deploying AI in production.

Accelerate Data Processing on AWS With NVIDIA RTX PRO 4500

The new NVIDIA RTX PRO 4500 Blackwell Server Edition GPU is coming soon to AWS through a new type of accelerated computing Amazon EC2 instance, delivering NVIDIA Blackwell performance in the cloud. AWS is the first cloud provider to announce support for NVIDIA RTX PRO 4500. These Amazon EC2 instances, when used with Amazon EMR, are well-suited for data processing workloads. The new Amazon EC2 instances are built on the AWS Nitro System, which delivers the enhanced security, stability and resource efficiency that data processing workloads will require in production.

NVIDIA Nemotron Powers Salesforce Agentforce

The NVIDIA Nemotron 3 Nano model is available on Amazon Bedrock for Salesforce Agentforce, expanding Agentforce to new high-throughput applications such as batch processing or high-concurrency B2C apps. According to Salesforce’s Agentic Benchmark for CRM, Nemotron 3 Nano is the most cost-efficient model for summarization and generation use cases.

Reinforcement Fine-Tuning for NVIDIA Nemotron Models Coming Soon on Amazon Bedrock

Developers will soon be able to fine-tune NVIDIA Nemotron models directly on Amazon Bedrock using reinforcement fine-tuning (RFT). This is significant for teams that need to align model behavior to specific domains, whether that’s legal, healthcare, finance or any other specialized field. Reinforcement fine-tuning lets developers shape how a model reasons and responds, not just what it knows. Nemotron 3 Nano will soon support RFT, bringing these capabilities to AWS customers.

Monday, March 16, 1:30 p.m. PT

NVIDIA Open Models With Microsoft Foundry and Azure Local Power Agentic AI, Sovereign AI and Physical AI Systems

At GTC, Microsoft announced updates to its unified platform for agentic and physical AI systems, accelerating the shift from experimentation to production. Combining Microsoft Foundry with NVIDIA’s open models and accelerated computing creates a unified stack to simplify customization while meeting strict data sovereignty requirements.

To power these inference-heavy workloads, Microsoft has rapidly integrated the latest NVIDIA accelerated computing platforms into its liquid-cooled Azure data centers. This builds on a massive infrastructure expansion in which Microsoft has deployed hundreds of thousands of liquid-cooled NVIDIA Grace Blackwell GPUs across its global data centers in less than a year. Azure was also the first hyperscale cloud provider to power up the new NVIDIA Vera Rubin NVL72 systems, which will roll out globally over the coming months.

Building Specialized Enterprise Agents With NVIDIA Nemotron on Microsoft Foundry

Developers can now build and deploy specialized agents with NVIDIA Nemotron open models directly in Microsoft Foundry.

At the agent platform layer, Microsoft Foundry Agent Service is generally available to support building, deploying and operating AI agents at scale. New features include enhanced observability and voice capabilities for agents, enabling customers to move from experimentation to production fast on an agent platform that prioritizes security and governance.

Using Azure compute, accelerated by NVIDIA Blackwell and Rubin platforms, teams can easily access these models in Foundry’s model-as-a-platform environment. The lineup will expand across the latest Nemotron 3 family of reasoning, speech and vision models, including Nemotron 3 Super, as well as AI safety guardrail models. Nemotron models will also arrive on Azure as a managed application programming interface service later this year.

Microsoft Security is also working with NVIDIA Nemotron and NVIDIA NemoClaw to increase agent safety, security and efficiency.

“At Microsoft, trust and security are critical to responsible agentic AI adoption. That’s why we’ve been partnering with NVIDIA on adversarial learning that includes Nemotron and OpenShell to better protect the industry and enterprise security applications with real-time, adaptive runtime protection,” said Alexander Stojanovic, vice president of Microsoft Security’s NEXT AI team. “Early results are showing 160x improvement in finding and mitigating AI-based attacks.”

Accelerating Sovereign AI on Azure Local

For regulated industries and government customers, Azure Local is expanding sovereign AI capabilities in customer-controlled environments. Azure Local now supports the NVIDIA RTX PRO 6000 Blackwell Server Edition GPU. Support for the NVIDIA RTX PRO 4500 Blackwell Server Edition GPU is coming soon, with support for the NVIDIA Rubin platform expected later this year. Paired with Foundry Local services, customers can run models on premises while keeping data, inference and governance under their control.

Powering Physical AI on Azure

To accelerate robotics and physical AI, NVIDIA blueprints and open foundation models, including NVIDIA Cosmos world models and NVIDIA Alpamayo models for autonomous vehicle development, are now available on GitHub and Foundry. Find more on NVIDIA’s physical AI announcements from GTC.

Learn more about this strategic collaboration in the Official Microsoft Blog.

Tuesday, March 17, 9:00 a.m. PT 🔗

Oracle and NVIDIA Team to Accelerate Vector Search and Enterprise Data Processing With NVIDIA cuVS

Oracle and NVIDIA are partnering with customers to put GPU‑accelerated vector index builds into action for real‑world workloads. Oracle Private AI Services Container, initially announced with CPU execution, is designed to support NVIDIA GPUs and the NVIDIA cuVS open source library for vector search and index generation. This announcement builds on the launch of Oracle AI Database 26ai and Oracle Private AI Services Container at Oracle AI World 2025.

Companies using Oracle AI Database 26ai to manage massive unstructured and multimodal data estates can now offload vector index creation to NVIDIA accelerated computing, significantly reducing index build times.
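To make "vector index creation" concrete, the sketch below builds a toy inverted-file (IVF) style index in plain Python: it clusters vectors around a few centroids, then answers a query by scanning only the cluster nearest to it. This illustrates the general technique only. It is not Oracle's or cuVS's implementation, and the names `build_ivf_index` and `search`, along with their parameters, are hypothetical.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(centroids, vector):
    """Position of the centroid closest to `vector`."""
    return min(range(len(centroids)),
               key=lambda i: squared_distance(centroids[i], vector))


def build_ivf_index(vectors, n_lists, n_iters=5, seed=0):
    """Toy index build (hypothetical helper): k-means-style clustering,
    then one inverted list of vector ids per centroid."""
    rng = random.Random(seed)
    dim = len(vectors[0])
    centroids = [list(v) for v in rng.sample(vectors, n_lists)]
    for _ in range(n_iters):
        buckets = [[] for _ in range(n_lists)]
        # Every vector is compared with every centroid: the costly step.
        for idx, v in enumerate(vectors):
            buckets[nearest(centroids, v)].append(idx)
        for i, bucket in enumerate(buckets):
            if bucket:  # an empty cluster keeps its previous centroid
                centroids[i] = [
                    sum(vectors[j][d] for j in bucket) / len(bucket)
                    for d in range(dim)
                ]
    # Final assignment against the final centroids.
    lists = [[] for _ in range(n_lists)]
    for idx, v in enumerate(vectors):
        lists[nearest(centroids, v)].append(idx)
    return centroids, lists


def search(index, vectors, query, k=3):
    """Approximate search: scan only the list of the nearest centroid."""
    centroids, lists = index
    candidates = lists[nearest(centroids, query)]
    return sorted(candidates,
                  key=lambda j: squared_distance(vectors[j], query))[:k]
```

The assignment loop does work proportional to the number of vectors times the number of centroids times the dimension on each pass. That kind of dense, independent distance computation parallelizes well, which is why index builds over hundreds of millions of vectors are a natural fit for GPUs.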

Bolstering Healthcare Workflows

Early healthcare innovators Biofy and Sofya are among the first to explore GPU‑accelerated index builds on Oracle Database, targeting faster, more accurate AI‑powered clinical and analytics use cases.

Sofya — an AI healthcare company delivering real‑time medical transcription and structured clinical documentation, with over 1 million clinical encounters already processed — offers a real-time platform that generates clinical notes and suggests evidence‑based protocols during patient interactions. This demands fresh, searchable access to massive medical datasets.

Roughly 1.5 million new medical articles are published each year, and Sofya must keep its AI recommendations closely aligned with the latest clinical evidence. Sofya taps into a dataset of roughly 500 million vectors over 3 terabytes of U.S. health library data, originally requiring days to build vector indexes. GPU‑accelerated index builds through Oracle Private AI Services Container and NVIDIA cuVS can help Sofya vastly accelerate this process.

Biofy, a health-tech company, uses AI to rapidly identify bacterial infections, predict antibiotic resistance and recommend targeted treatments in hours instead of days. It uses Oracle Cloud Infrastructure with NVIDIA GPUs to scale training and inference, storing genomic vectors in Oracle Autonomous Database with AI Vector Search to deliver low-latency, cost-efficient queries. With a fine‑tuned Llama‑based model, Biofy continuously generates synthetic DNA sequences to stay ahead of evolving bacteria, so scientists don’t need to constantly run expensive wet‑lab experiments. cuVS enables Biofy to accelerate the creation of vector search indexes.

Learn more about all the latest Oracle and NVIDIA announcements at GTC, including the new OCI Supercluster with Vera Rubin, on the Oracle blog.

Tuesday, March 17, 9:00 a.m. PT 🔗

NVIDIA Cloud Partners Double Global AI Factory Footprint to Advance Sovereign AI

AI factory growth is soaring.

Announced at GTC, NVIDIA Cloud Partners (NCPs) have doubled their AI factory footprint year over year, advancing sovereign AI in the U.S., Australia, Germany, Indonesia, India and more.

NCPs have now cumulatively deployed more than 1 million NVIDIA GPUs in AI factories across the globe, representing more than 1.7 gigawatts of AI capacity.

That’s enough compute power to study the molecular interactions of billions of protein structures and pharmaceutical compounds in just weeks, or to simulate tens of billions of driving miles per day to train autonomous vehicles in hyperrealistic 3D physics engines.

The 2x growth of NCP deployments since last year’s GTC — when NCPs had deployed a cumulative 400,000 NVIDIA GPUs representing 550 megawatts of AI capacity — showcases how organizations are generating intelligence at a larger scale than ever. And being a part of the NCP program means they’re all equipped to support future generations of NVIDIA AI infrastructure.
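As a quick check of the figures quoted above, the growth ratios can be worked out directly. This is a back-of-the-envelope sketch that assumes the two sets of totals are directly comparable. The current totals are stated as "more than," so the ratios are lower bounds.

```python
# Figures quoted in the text; current totals are lower bounds ("more than").
gpus_last_gtc, gpus_now = 400_000, 1_000_000   # cumulative NVIDIA GPUs deployed
mw_last_gtc, mw_now = 550, 1_700               # AI capacity in megawatts (1.7 GW)

gpu_growth = gpus_now / gpus_last_gtc
capacity_growth = mw_now / mw_last_gtc

print(f"GPU count growth:   at least {gpu_growth:.2f}x")
print(f"AI capacity growth: at least {capacity_growth:.2f}x")
```

Both ratios come out above 2, consistent with the doubling described.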

Here are some key NCPs deploying cloud-based AI factories that bring together NVIDIA accelerated computing, networking and optimized AI software:

- Fintech startup innovation hub TechQuartier is working with sandbox-as-a-service provider NayaOne to create a sovereign Supercharged Sandbox platform in Germany. NayaOne runs on Sovereign AI Factory Frankfurt — which harnesses NVIDIA AI Enterprise software and is powered by NCP Polarise.

- Zadara and DDN are announcing a strategic partnership to deliver high-performance AI infrastructure for multi-tenant sovereign clouds and AI factories, built on NVIDIA reference architectures. Together, the companies will enable service providers, telcos and enterprises to deploy NVIDIA-powered AI infrastructure quickly, securely and efficiently while maintaining full control over compliance, cost, tenant isolation and performance service-level agreements.

- YTL, a Malaysia-based NCP and AI lab, is using Nemotron 3 technologies and data in its ILMU family of large language models, trained on local contextualized data and deployed in Malaysia.

- German research consortium SOOFI, funded by the German Federal Ministry for Economic Affairs and Energy, is training new sovereign AI models as part of the Sovereign Open Source Foundation Models initiative to strengthen European AI sovereignty and build the foundation for new, widely used AI applications. SOOFI is building its foundation models using NVIDIA Nemotron 3 Nano and Super, as well as Deutsche Telekom’s industrial AI cloud, to provide the sovereign, secure, high-performance environment required to build trusted and scalable AI models.

Learn more about the NCP program.

Healthcare 🔗

Monday, March 16, 1:30 p.m. PT 🔗

NVIDIA Releases Open Physical AI Models for Healthcare Robotics

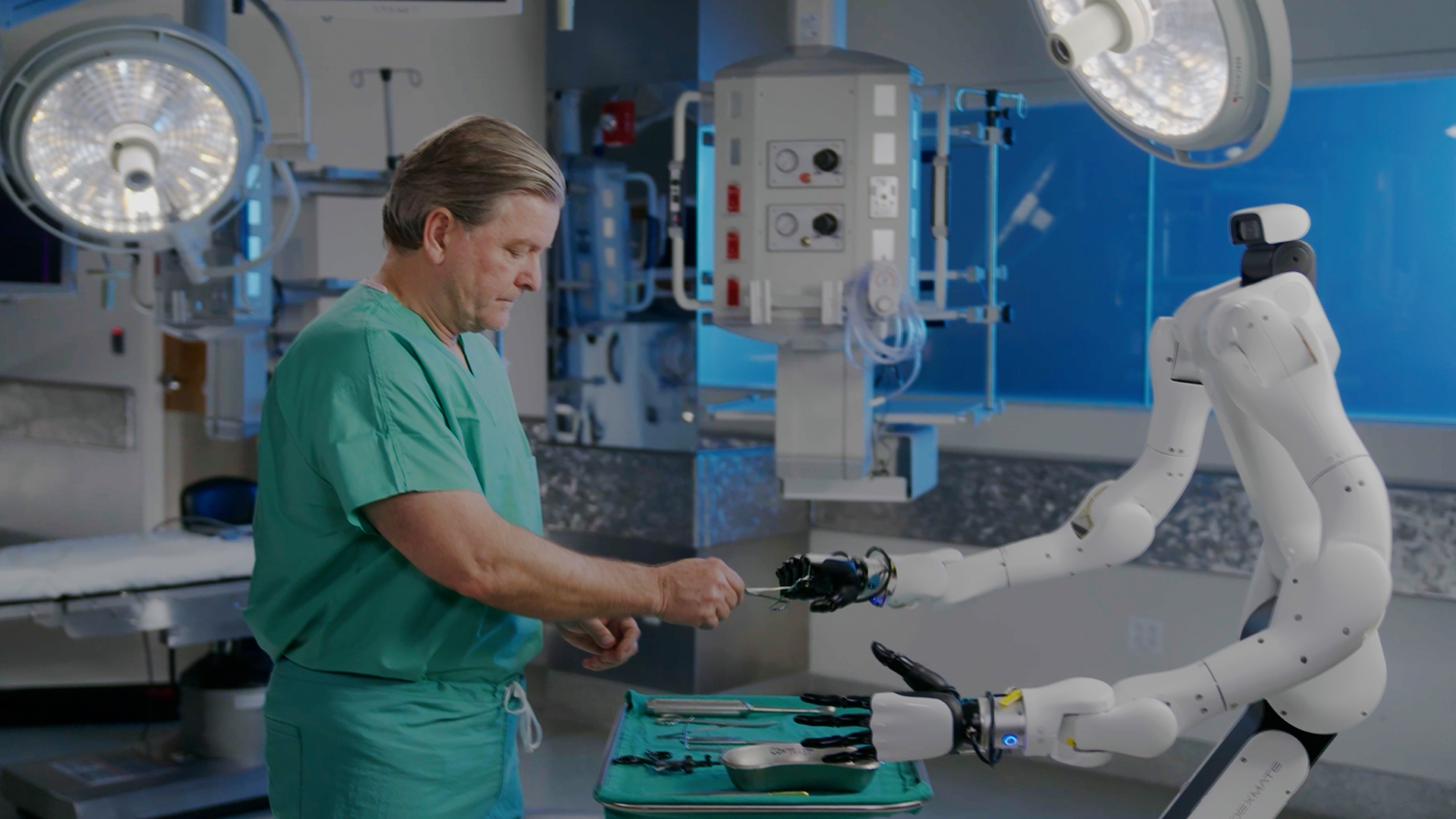

Surgical robotics leaders including CMR Surgical and Johnson & Johnson MedTech, along with surgical physical AI platform developers PeritasAI and Proximie, are among the first to adopt healthcare-specific physical AI tools unveiled at GTC.

For years, AI in healthcare has lived primarily on screens, analyzing medical images and predicting patient outcomes. Today, it moves beyond the screen and into the physical world as NVIDIA launches the first domain-specific physical AI platform for healthcare robotics.

Leaders and innovators in surgical robotics — including CMR Surgical, Johnson & Johnson MedTech, Moon Surgical and Rob Surgical — are adopting NVIDIA’s healthcare-specific physical AI tools to accelerate workflows including synthetic data generation, robotic policy evaluation and digital twin creation.

PeritasAI is integrating these technologies to build a physical AI platform for surgical operations, integrating robots and multi-agent intelligence to sense, coordinate and act in real time. Another physical AI platform developer, Proximie, is developing vision language models that can support surgical teams in the operating room.

Announced at GTC, these tools provide the robust, open infrastructure developers need to transform care delivery with a new generation of healthcare robots. They include:

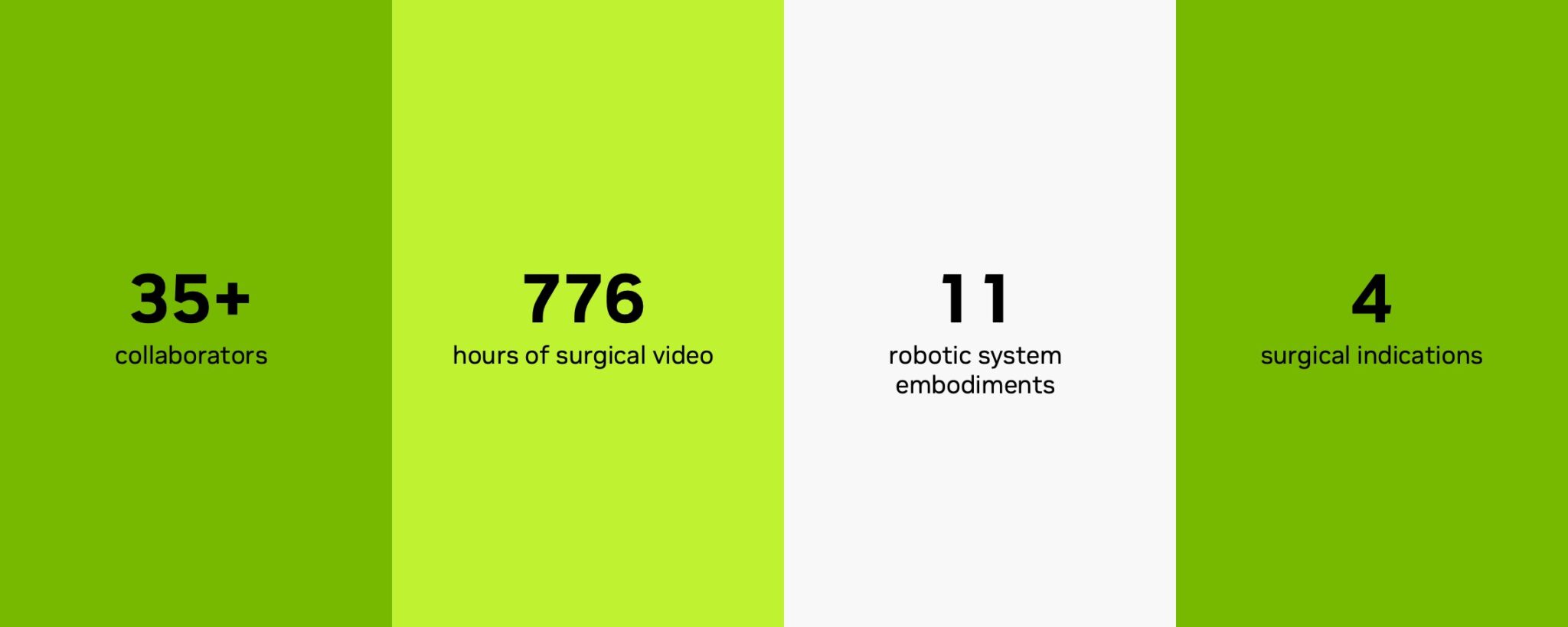

- Open-H, the world’s largest healthcare robotics dataset. Built with about three dozen collaborators, Open-H represents the real-world diversity and complexity of surgical procedures, providing over 700 hours of surgical video to accelerate the development of advanced, generalizable robotic systems.

- Cosmos-H, an open model family including Cosmos-H-Surgical, based on NVIDIA Cosmos for domain-specific, physics-based synthetic data generation at scale. Featuring three models that generate surgical video from prompts, reference images or videos, and paired robot kinematics, Cosmos-H enables developers to augment real datasets with lifelike simulations — and evaluate robotic policies by predicting the future state of surgical environments.

- GR00T-H: This vision language action (VLA) model, based on NVIDIA Isaac GR00T N, processes text commands describing clinical tasks and generates motion commands, known as action tokens. Trained on Open-H, the model can be used to train and evaluate robots developed to perform complex physical actions in healthcare environments.

- Rheo: This developer blueprint, available within the Isaac for Healthcare developer framework for AI healthcare robotics, enables developers to create physically accurate simulations of hospital environments. It can be used to safely develop and test automation strategies at scale by simulating clinical workflows, medical device interactions, human movement and hospital logistics.

Surgical robotics, imaging and hospital automation leaders are already using NVIDIA physical AI technology for healthcare robotics:

- CMR Surgical is contributing close to 500 hours of surgical video to Open-H to help pretrain GR00T-H. The company is also using Cosmos-H to generate physically accurate synthetic surgical data and evaluate new robotic policies.

- Johnson & Johnson MedTech is using a Cosmos-based foundation model and anatomical simulation in Isaac for Healthcare to generate and enhance data for post-training workflows for the MONARCH Platform for Urology.

- PeritasAI is using Isaac for Healthcare and Rheo to train humanoid robots and VLA models that bring embodied intelligence into surgical environments, supporting surgical teams through real-time situational awareness, sterile coordination and intelligent management of instruments, implants and operating room workflows. This work is in collaboration with Lightwheel and Advent Health Hospitals.

- Proximie is using Cosmos-H to train multimodal vision language models that combine operating room images with intraoperative video. These models power real-time AI agents that provide surgical insights and orchestration across the surgical pathway.

Additional adopters of NVIDIA healthcare robotics technology include Moon Surgical and Rob Surgical.

Open-H, Cosmos-H and GR00T-H are now available on GitHub and Hugging Face for developers to post-train and adapt for specific surgical scenarios. These tools pair with NVIDIA Isaac for Healthcare and the Rheo blueprint to power simulation pipelines for the training and evaluation of surgical robots.

Learn more about the Rheo blueprint on the NVIDIA technical blog.

Monday, March 16, 1:30 p.m. PT 🔗

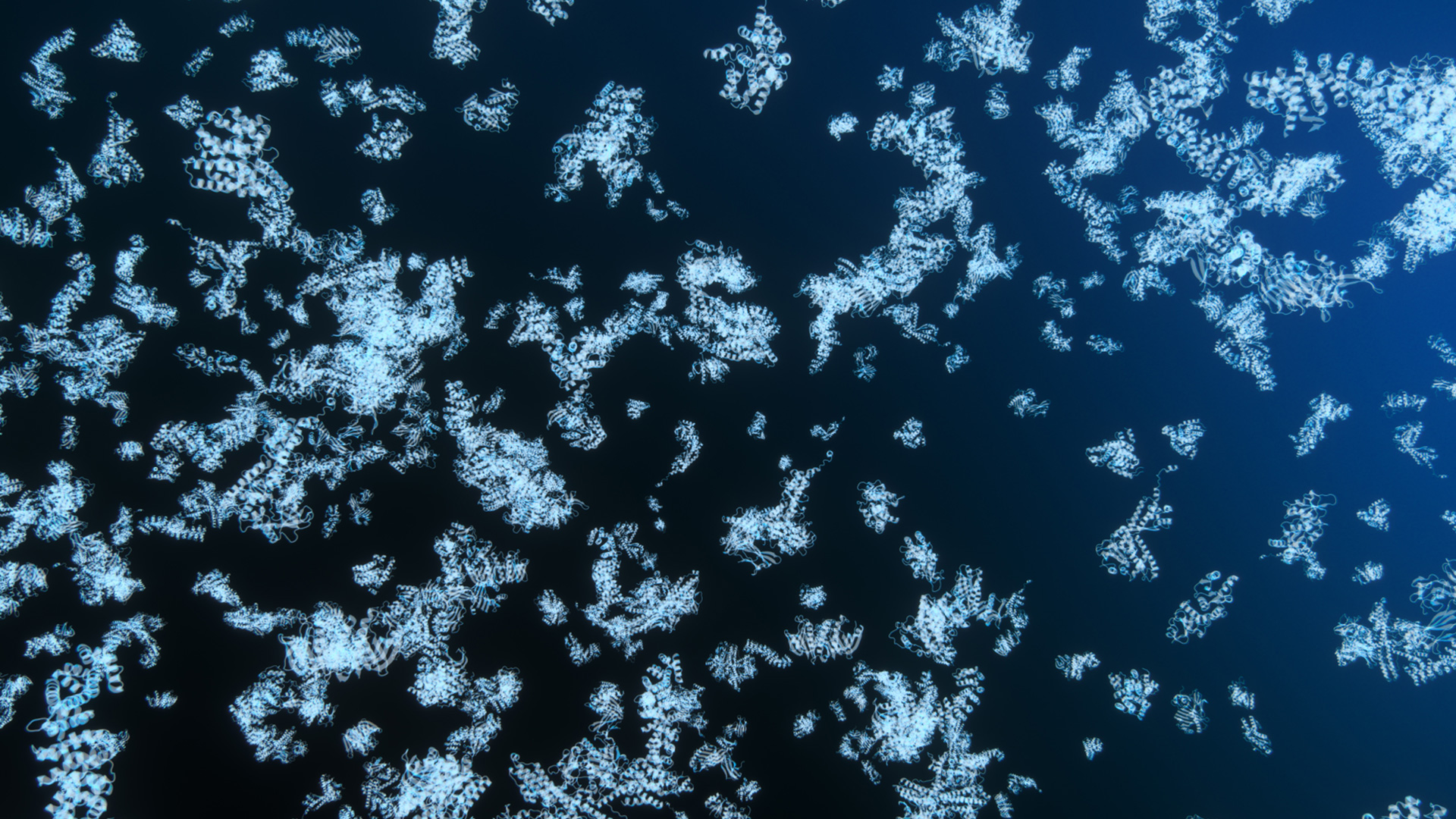

NVIDIA, Google DeepMind, EMBL Unveil World’s Largest Dataset of Protein Complexes to Accelerate AI-Driven Biology and Drug Discovery

NVIDIA, Google DeepMind, EMBL’s European Bioinformatics Institute and the Seoul National University Steinegger Lab have massively expanded the AlphaFold Protein Structure Database, adding 1.7 million high-confidence predicted protein complexes to the searchable database, while making about 30 million additional predicted structures available for bulk download.

The newly added dataset — the largest of its kind — turns the database into a comprehensive resource for modeling protein-to-protein interactions at an unprecedented scale.

Google DeepMind’s AlphaFold-Multimer model provided AI-predicted protein structures for the database. It was accelerated by integrating NVIDIA computing libraries — NVIDIA TensorRT and cuEquivariance — into the OpenFold inference pipeline, achieving over 100x faster inference compared to the original.

The database provides these precomputed protein structures to serve as hypotheses, accelerating experimental testing in the discovery of new drug targets and disease biology.

This removes a massive computational barrier for researchers, particularly those in low-resource settings lacking access to advanced supercomputers.

This initiative prioritizes reference proteomes — sets of proteins that represent taxonomic diversity — and the World Health Organization priority list to bolster infectious disease research.

For the pharmaceutical industry, these predicted structures serve as powerful starting hypotheses, significantly accelerating downstream wet-lab experiments and saving crucial time and resources.

Research and Open Source 🔗

Monday, March 16, 1:30 p.m. PT 🔗

NVIDIA cuDF and cuVS Adopted by World’s Leading Data Platforms, Fueling Modern Enterprise Data Processing

Enterprises are generating hundreds of zettabytes each year, and organizations are racing to turn that information into insights. NVIDIA cuDF and cuVS — accelerated data libraries built on NVIDIA CUDA‑X — are being adopted by data platforms across industries to deliver up to 5x faster performance while reducing costs for structured and unstructured data processing.

Integrated with the world’s most widely used open source data engines — downloaded over 200 million times monthly by developers — these libraries are harnessed across enterprise data platforms, databases and data lakes. This helps organizations accelerate innovation, develop more accurate models and process more data while managing costs.

For structured data, NVIDIA cuDF accelerates open source data processing engines such as Apache Spark, Presto, DuckDB, Polars and Velox, delivering up to 5x faster processing compared with CPU-only deployments.

For unstructured data — which represents 80% of today’s enterprise data and is growing rapidly — NVIDIA cuVS accelerates leading engines including FAISS, Amazon OpenSearch Service and Milvus. This helps agents and applications extract context, facts and recommendations from vast stores of text, images and video in a fraction of the time.

Powering Enterprise Data Processing Platforms

Google Cloud integrates NVIDIA cuDF to accelerate Apache Spark within Dataproc, and cuDF can be easily used within Google Kubernetes Engine (GKE) to reduce processing times for massive ETL jobs from hours to seconds while lowering compute costs.

At Snap, which serves more than 946 million active users, NVIDIA cuDF on GKE cut daily data processing costs by 76%. This enables 10 petabytes of data to be analyzed within a three-hour window — saving millions of dollars.

“Our collaboration with NVIDIA and Google Cloud helps us innovate faster for more than a billion Snapchatters worldwide,” said Saral Jain, chief information officer of Snap. “By lowering data processing costs and scaling experiments across petabytes of data, we’re delivering AI-powered experiences more quickly and efficiently.”

IBM watsonx.data is a hybrid, open data platform that includes open source analytics engines such as Apache Spark and Presto for structured data, and a vector engine based on OpenSearch. In early experiments with Nestlé’s Order-to-Cash mart, workloads accelerated with NVIDIA cuDF on watsonx.data ran five times faster, with 83% cost savings.

“For a company that serves billions, data underpins decision making across our global operations,” said Chris Wright, chief information and digital officer of Nestlé. “Working with IBM and NVIDIA, a targeted proof of concept has demonstrated the ability to refresh global operations data in a few minutes and at reduced cost. Our focus now is on turning this capability into tangible business impact — further improving decision speed in areas such as manufacturing and warehousing, and scaling these capabilities across our enterprise.”

The Dell AI Data Platform with NVIDIA includes accelerated data engines that enable enterprises to quickly and securely activate their Dell AI Factory with AI-ready data. It features an Apache Spark-based processing engine accelerated with NVIDIA cuDF, delivering up to 3x faster performance, and an enterprise-grade vector database accelerated with NVIDIA cuVS, delivering up to 12x higher throughput for vector indexing compared with CPUs.

“Purpose-built for agentic AI, the Dell AI Data Platform with NVIDIA uses accelerated data processing engines to make multimodal data AI-ready in hours instead of days,” said Michael Dell, chairman and CEO of Dell Technologies.

Oracle announced that Oracle Private AI Services Container can greatly accelerate vector index creation in Oracle AI Database using NVIDIA cuVS, helping organizations speed up AI-enabled decisions with the latest information.

“Enterprise AI is moving from experimentation to production,” said Clay Magouyrk, CEO of Oracle. “Oracle AI Database with NVIDIA technology delivers AI-ready data within minutes, enabling applications that were previously impossible.”

NVIDIA cuDF and cuVS are supported by leading enterprise data platforms including EDB Postgres AI, NetApp, Snowflake, Starburst and VAST Data — setting the foundation for the AI‑powered future of data processing.

Financial Services 🔗

Tuesday, March 17, 10:00 a.m. PT 🔗

Jump Trading to Become One of the First Trading Firms to Adopt NVIDIA Rubin Platform

Jump Trading will be among the first trading firms in financial services to adopt the NVIDIA Rubin platform, accelerating AI-powered capital markets research and financial modeling.

A key success factor for Jump is “research velocity” — the ability to explore diverse deep learning approaches, rapidly adapt models to shifting market conditions and scale trading across global markets. Financial markets generate some of the world’s most dynamic and complex datasets, with the largest U.S. stocks averaging over 10 million exchange messages per day and peak rates exceeding 200,000 messages per second.

To meet these extraordinary demands, NVIDIA Vera Rubin NVL72 delivers supercomputer-class compute density, expanded memory bandwidth and significantly increased power efficiency to enhance performance while reducing token costs.

Driving liquidity and price discovery requires relentless research and the ability to scale new ideas instantly. Equipped with NVIDIA accelerated computing, Jump can achieve massive research velocity, rapidly iterating on complex model architectures to adapt to shifting market conditions with AI.

Learn more about how Jump is using NVIDIA accelerated computing to power the next wave of capital markets research and algorithmic trading by visiting booth 189 in the FSI Pavilion at GTC.

Quantum 🔗

Monday, March 16, 1:30 p.m. PT 🔗

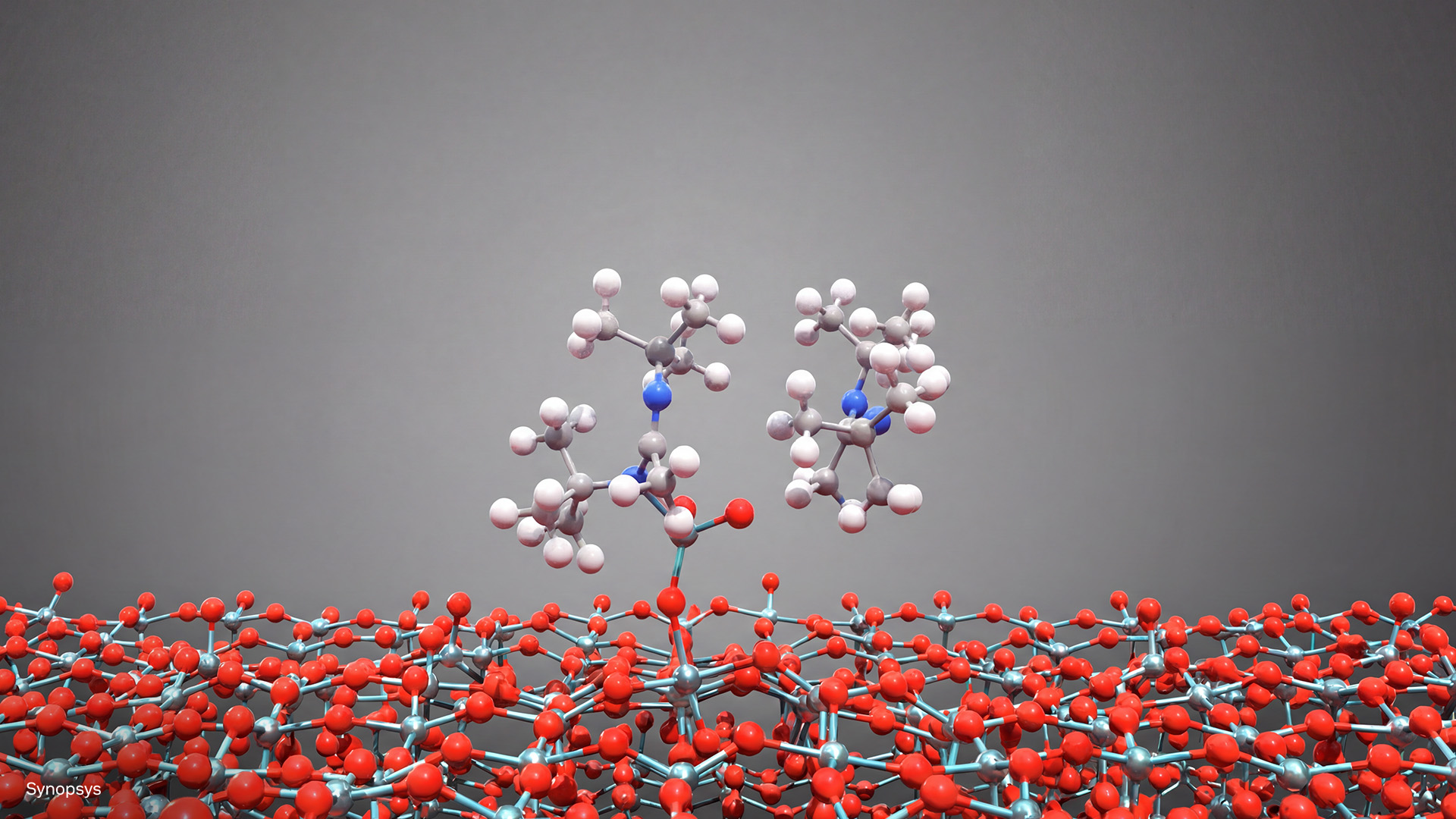

NVIDIA Launches cuEST for Accelerated Quantum Chemistry in Semiconductor Design

NVIDIA this week launched NVIDIA cuEST, a new NVIDIA CUDA-X library that shifts electronic-structure calculations onto GPUs. Applied Materials, Samsung, Synopsys and TSMC are among the initial adopters.

A leading-edge chip now contains over 50 billion transistors. Engineering them requires answering fundamental physics questions at the atomic scale: how electrons bond, how they migrate and how they interact across films just a few atoms thick.

“As semiconductor scaling reaches the physical limits of materials, the industry requires a massive increase in computing performance to simulate the quantum mechanics of next-generation chip designs,” said Tim Costa, general manager for industrial and computational engineering at NVIDIA. “With NVIDIA cuEST, industry leaders can move past the quantum bottleneck and take high-fidelity chemical modeling directly into production to accelerate semiconductor innovation.”

Industry Impact

- Applied Materials: Applied Materials uses cuEST-accelerated density functional theory (DFT) to model challenging structures, predict material properties and study reaction pathways.

- Samsung: Samsung integrated cuEST into its internal pipeline, which was already accelerated on GPUs, to deliver an additional end-to-end speedup of up to 5x for key quantum-chemistry workloads.

- Synopsys: Powered by cuEST and QuantumATK, Synopsys expanded its functionality to include Gaussian-basis DFT, accelerating simulations up to 30x for semiconductor workflows.

- TSMC: TSMC uses cuEST’s accelerated quantum chemistry to advance processes for next-generation silicon design.

From the Lab to the Fab

The most common method for atomistic modeling is density functional theory. DFT offers a strong balance between accuracy and scalability; however, its computational cost has limited its widespread use in industry, keeping most applications confined to research. With cuEST, NVIDIA makes high‑accuracy quantum‑chemistry feasible at an industrial scale and in real production workflows.

Historically, the industry has relied on CPU clusters to run these simulations, evaluating candidate materials, including gate dielectrics and interconnect metals, one batch at a time over hours or days.

cuEST provides optimized routines so GPUs can accelerate the computation of the core matrices of a Gaussian-basis DFT calculation, including the overlap, kinetic energy, nuclear attraction, Coulomb and exchange-correlation matrices. It also supports functional approximations ranging from the standard generalized gradient approximation to hybrid functionals, allowing engineers to balance computational cost with accuracy.
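For readers unfamiliar with these matrices, the sketch below computes two of them, overlap and kinetic energy, for the simplest possible case: normalized s-type Gaussian primitives, where both integrals have well-known closed forms in atomic units. It is a plain-Python illustration of what the matrix elements are, not cuEST's API. The function names and the two-function example basis are assumptions made for this example, and production codes use contracted basis functions with higher angular momentum.

```python
import math


def _r2(center_a, center_b):
    """Squared distance between two centers (in bohr)."""
    return sum((x - y) ** 2 for x, y in zip(center_a, center_b))


def overlap_s(a, center_a, b, center_b):
    """Overlap integral of two normalized s-type Gaussians
    with exponents a and b."""
    p = a + b
    mu = a * b / p
    return (2.0 * math.sqrt(a * b) / p) ** 1.5 * math.exp(-mu * _r2(center_a, center_b))


def kinetic_s(a, center_a, b, center_b):
    """Kinetic energy integral for the same pair of primitives."""
    mu = a * b / (a + b)
    r2 = _r2(center_a, center_b)
    return mu * (3.0 - 2.0 * mu * r2) * overlap_s(a, center_a, b, center_b)


def build_matrix(integral, basis):
    """Evaluate `integral` for every pair of basis functions."""
    return [[integral(ai, ci, aj, cj) for (aj, cj) in basis]
            for (ai, ci) in basis]


# Hypothetical two-function basis: (exponent, center), centers 1.4 bohr apart.
basis = [(0.5, (0.0, 0.0, 0.0)), (0.5, (0.0, 0.0, 1.4))]
S = build_matrix(overlap_s, basis)   # overlap matrix
T = build_matrix(kinetic_s, basis)   # kinetic energy matrix
```

Each matrix has one entry per pair of basis functions, so the work grows quadratically with basis size for these one-electron terms and faster still for the Coulomb term. That scaling is what makes the calculation expensive on CPUs.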

NVIDIA’s goal for cuEST: moving high-fidelity material modeling from the lab to the fab.

Learn more about cuEST by visiting the NVIDIA demo booth and Synopsys’ booth at GTC, and dive deeper in the GTC session, “Next-Generation Discovery: Agentic AI for Science, AI-Driven Simulation and GPU-Accelerated Chemistry.”

Monday, March 16, 8:00 a.m. PT 🔗

Now Live: Keynote Pregame Show

It’s almost time for the GTC keynote by NVIDIA founder and CEO Jensen Huang. Tune in now to the pregame show — hosted by Sarah Guo, founder of AI-native investing firm Conviction; Gavin Baker, managing partner and chief investment officer of Atreides Management; and Alfred Lin, partner at Sequoia Capital — as industry titans from across the ecosystem share insights into the state of AI.

The keynote will begin at 11 a.m. PT, with live coverage here on the blog.

Friday, March 13, 8:30 a.m. PT 🔗

Pregame the GTC Keynote With Leaders Shaping the Age of AI

Before Monday’s GTC keynote with NVIDIA founder and CEO Jensen Huang, catch NVIDIA GTC Live — a preshow featuring analysts, founders and industry leaders shaping the next phase of AI — starting at 8 a.m. PT.

Hosts Sarah Guo, founder of AI-native investing firm Conviction; Gavin Baker, managing partner and chief investment officer of Atreides Management; and Alfred Lin, partner at Sequoia Capital, will talk with guests about the shift from general-purpose to accelerated computing and the five-layer foundation behind one of the largest infrastructure buildouts in history.

Correspondent Tiffany Janzen, founder of TiffinTech, will chat with guests in the crowd — including a few notable tech leaders — to get live reactions.

The program’s themes focus on the workloads, infrastructure and applications shaping today’s AI systems:

- Accelerated computing: Palantir President Aki Jain, Cadence CEO Anirudh Devgan, IBM’s Dinesh Nirmal and Morgan Stanley’s Mark Edelstone discuss how accelerated computing is expanding what’s possible in simulation, digital twins and large-scale analytics across industries.

- AI infrastructure: Caterpillar CEO Joe Creed, Fireworks AI CEO Lin Qiao, Dell CEO Michael Dell and CoreWeave CEO Michael Intrator examine the infrastructure required to support modern AI systems. Topics include token generation as a new unit of computing, the realities of power and cooling, and how AI infrastructure is scaling globally.

- Open models: Cohere CEO Aidan Gomez, Perplexity CEO Aravind Srinivas, Mistral AI CEO Arthur Mensch and Black Forest Labs CEO Robin Rombach will explore how open models enable AI development across biology, physics, manufacturing and enterprise software. They’ll share how teams build on open source, approach model strategy and launch AI-native startups.

- Agentic AI: LangChain CEO Harrison Chase, Edison Scientific CEO Samuel Rodriques, OpenClaw creator Peter Steinberger and PrimeIntellect CEO Vincent Weisser look at the rise of agentic systems that reason step by step, use tools and complete complex tasks.

- Physical AI: OpenEvidence founder Daniel Nadler, SkildAI CEO Deepak Pathak, PhysicsX CEO Jacomo Corbo and Waabi CEO Raquel Urtasun will explore advances in robotics and physical AI — covering how simulation, digital twins and foundation models are moving systems from virtual training environments into real-world deployment.

Watch the livestream on March 16.

Sunday, March 15, 10:30 a.m. PT 🔗

Downtown San Jose Turns Green as GTC Kicks Off

NVIDIA GTC begins today at the San Jose Convention Center with full-day technical workshops on topics including multimodal AI agents, end-to-end robotics workflows and accelerated networking for AI infrastructure.

Tomorrow, thousands of attendees will pack the SAP Center for the keynote address by NVIDIA founder and CEO Jensen Huang at 11 a.m. PT. The preshow begins at 8 a.m. — see the lineup of guests below.

After the keynote, take in sessions and activities across downtown San Jose.

Wednesday, March 11, 8 a.m. PT 🔗

GTC 2026: What to Expect From the Biggest AI Conference of the Year

Every March, San Jose gets a little electric.

NVIDIA founder and CEO Jensen Huang will walk onto the floor of the SAP Center — home of the San Jose Sharks — on Monday, March 16, at 11 a.m. PT to deliver a keynote to a crowd that has been arriving since Sunday from 190 countries.

Thirty thousand attendees. Ten venues across downtown. Register, and you’ll hit your step count. The keynote streams free from nvidia.com for those who want to attend virtually.

This year’s GTC spans topics from physical AI and AI factories to agentic AI and inference. Huang’s keynote will cover the full stack: chips, software, models and applications. It’s a buildout measured in gigawatts. More than 700 sessions provide all the details.

The pregame show — featuring the CEOs of Perplexity, LangChain, Mistral, Skild AI and OpenEvidence — starts at 8 a.m. PT on Monday, three hours before Huang takes the stage. And the keynote is just the capstone of day one.

On Wednesday, March 18, at 12:30 p.m. PT, Huang will moderate a panel on open models with Harrison Chase, cofounder and CEO of LangChain, and leaders from A16Z, AI2, Cursor and Thinking Machines Lab. The conversation will be about where open models stand against the frontier closed ones, and what it means for everyone building on top of them. Doors open at 11:30 a.m. PT. Seating is first come, first served.

Tuesday, March 17, at 9 a.m. PT, Dario Gil, Undersecretary at the U.S. Department of Energy, joins NVIDIA Vice President of Hyperscale and High-Performance Computing Ian Buck to discuss AI in climate and energy research. At 2 p.m. PT later that day, Universal Music Group chairman and CEO Sir Lucian Grainge joins NVIDIA Vice President of Media and Entertainment Richard Kerris on music and AI.

On March 18, tune in to the GTC Developer Community Livestream for a full day of real-time coverage of show floor demos, interviews with top builders and looks at the parts of GTC most people don’t get to see. Plus, help shape the coverage — use #ShowMeGTC and drop suggestions on social or in the YouTube chat to determine what gets shown on stream.

Off the main stages: 150 researcher posters on robotics, model innovation and systems architecture, with the researchers there to talk through their work. Seventy-plus hands-on training labs, an hour and 45 minutes each. Certification exams onsite, no extra cost.

There are snacks, too. At Cesar Chavez Park, the center of the GTC campus, a day market runs 11 a.m. to 2 p.m. The night market goes until 9 p.m.

The conference ends Thursday afternoon. Stay tuned all week on the blog for updates.

NVIDIA GTC runs March 16-19 in San Jose, California. Register at nvidia.com/gtc — use code GTC26-20 for 20% off. The keynote streams free at nvidia.com, no registration required.