There are more galaxies in the universe than anyone ever expected. Unfortunately, they all showed up at once.

When the first images from the James Webb Space Telescope (JWST) began returning data in 2022, Brant Robertson and his colleagues did what astronomers have always done: They stared at the sky and tried to understand what they were seeing. This time, the sky arrived as terabytes.

“There were galaxies everywhere,” Robertson recalled. “So many, and so far away, that we were genuinely shocked.”

Robertson is a professor of astronomy and astrophysics at the University of California, Santa Cruz, where he leads a team studying how the earliest galaxies formed after the Big Bang.

It’s the kind of work Spring Astronomy Day was made for — and thanks to the datasets his team releases publicly, anyone marking the occasion this year can explore the early universe in more depth than was possible even a few years ago.

Over the past several years, his group has broken the record for the most distant known galaxy more than once, each time pushing observation closer to the universe’s first light.

Without computation at this scale, the data would just pile up.

Observational limits require calculation. Copernicus used mathematics to resolve observational inconsistencies. Robertson does the same using computational models.

JWST is the most powerful observatory ever launched, observing in infrared, capturing light that has traveled for more than 13 billion years. Each deep-field image is crowded with hundreds of thousands of galaxies, some of them 13 billion years old.

That abundance is the problem.

“These datasets are far too large and complex for humans to analyze by hand,” Robertson said. “Even teams of experts would take years to do what now needs to happen in days.”

“AI doesn’t just help scientists understand the universe faster — it helps us all access and understand work at the cutting edge — that’s the real breakthrough,” said Dion Harris, senior director of high-performance computing and AI hyperscale infrastructure solutions at NVIDIA.

At UC Santa Cruz, an analysis pipeline was built to keep up. AI models handle classification. GPUs accelerate nearly every step: data reduction, catalog generation, anomaly detection and simulation. Without that acceleration, much of the work would simply stall.

Some of that computation runs on campus on the UCSC’s Lux cluster — funded by a $1.6 million National Science Foundation grant.

Larger GPU runs move off campus to supercomputers run by the U.S. government. Development work happens closer to hand. In Robertson’s office in the Interdisciplinary Sciences Building, a gold-edition NVIDIA DGX Station system sits beside the desk — whisper-quiet and used for testing models before they’re sent elsewhere to run at scale.

One of the central tools in the pipeline is an AI system called Morpheus, developed by Ryan Hausen, a former graduate student at UCSC who’s now a research software engineer at Johns Hopkins, in collaboration with Robertson and originally applied to earlier galaxy surveys.

Built on techniques from semantic segmentation — the same approach self-driving cars use to distinguish roads from pedestrians — the system was later adapted and scaled to handle JWST’s far larger and more detailed images.

Rather than classifying an entire galaxy at once, Morpheus examines every pixel, distinguishing a spheroidal bulge from its surrounding disk, even when both occupy the same image.

Applied across JWST data, the system enabled the team’s first large-scale AI analysis of the telescope’s observations, surfacing results that were difficult to reconcile with existing models.

Among the results were rotating disk galaxies — systems resembling the Milky Way — appearing far earlier than expected. The early universe was thought to be a violent place, dominated by mergers and disruption. Disk galaxies were not supposed to be there. They were.

“At first, people didn’t believe it,” Robertson said. “But the result has now been independently confirmed multiple times.”

Mapping a Half-Million Galaxies

Modern observatories do more than produce images. They produce catalogs — large datasets describing brightness, color, mass, shape and motion. Hundreds of variables for each galaxy.

Taken together, the measurements form a kind of map — showing which galaxies resemble each other, which don’t and where the outliers sit. A graduate student in the group, Anavi Uppal, built a tool the team calls GalaxyFriends to make that structure visible.

Rather than sorting galaxies one by one, the system organizes just under 90,000 of them into similarity neighborhoods, allowing researchers to move through the dataset in bulk — finding related objects, isolating rare cases and seeing patterns that would otherwise take years to notice.

“It lets us see structure in the universe we couldn’t see before,” Robertson said. “And it tells us when something doesn’t look like anything else we’ve ever observed.”

In practice, the group has become a kind of clearinghouse: turning raw observations into structured datasets that astrophysicists around the world can actually use.

Removing Atmospheric Blur From Ground Telescopes

Some of the most ambitious surveys will not come from space.

The Vera C. Rubin Observatory, coming online in Chile, will scan the entire southern sky every few nights. Its mirror is too large to launch into space, which means its images must pass through Earth’s atmosphere, introducing blur that space telescopes avoid. Once fully operational, Rubin will generate roughly 20 terabytes of raw data every night.

Robertson’s group is using AI to correct for that.

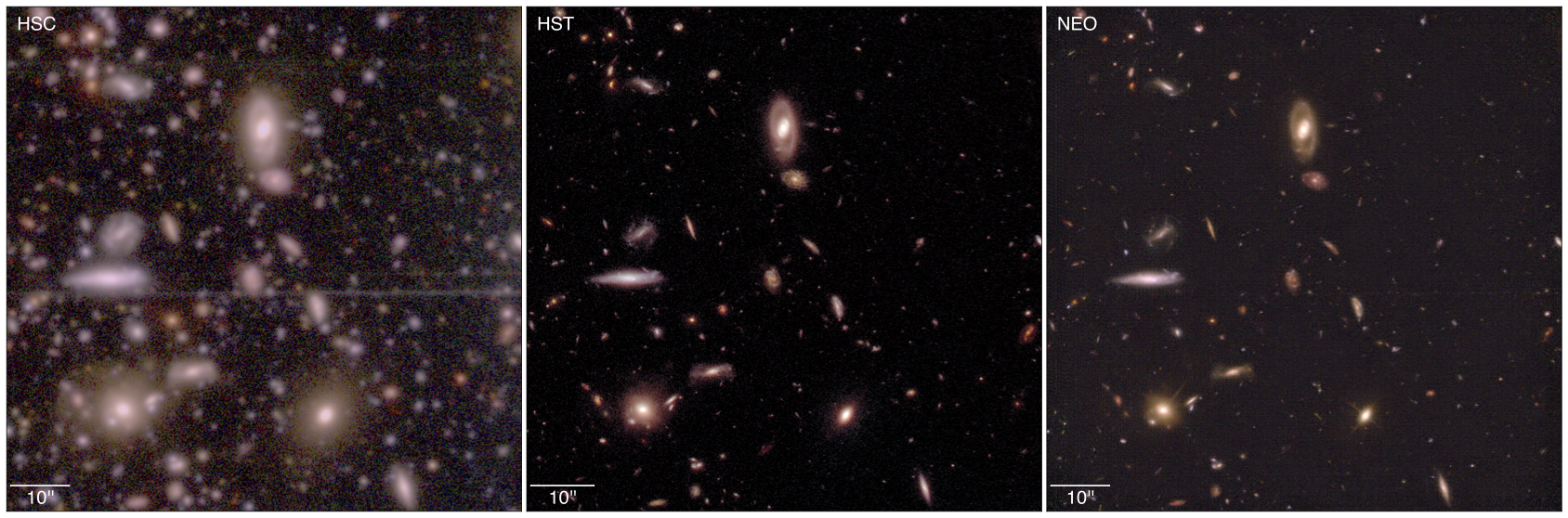

The approach borrows from an unexpected place: video games. It’s conceptually similar to NVIDIA DLSS technology, which uses AI to reconstruct higher-resolution images in real time. The team trains models on space-based data, then applies them to ground-based images, removing atmospheric distortion and recovering finer detail.

Because its Earth-based mirror must observe through the atmosphere, images are subject to distortion, while generating roughly 20 terabytes of data per night.

The result is imagery from Earth that begins to approach the clarity of space telescopes. At Rubin’s scale, that process depends on GPUs.

JWST will not be the last instrument to pose this problem. Rubin’s survey will generate a continuous stream of sky-wide data at a scale astronomy has not dealt with before.

Other observatories are already queued behind it, including NASA’s Nancy Grace Roman Space Telescope and, further out, the proposed Habitable Worlds Observatory, designed to directly image Earthlike exoplanets around nearby stars.

The volume and quality of astronomical data are increasing quickly, which makes the kind of work Robertson’s group does less of a luxury and more of a baseline requirement.

Using Simulations to Test Observations

GPUs are used to analyze observations, as well as to simulate the universe itself. Robertson’s group runs large-scale simulations — virtual volumes evolving over cosmic time — then compares them against telescope data.

Observations inform theory. Simulations test it. The results loop back, refining what astronomers look for next.

“The data feeds the theory,” Robertson said. “And the theory helps us understand what the data means.”

Robertson did not set out to become an astronomer. He once wanted to be a writer. A NASA scholarship pushed him toward physics. The rest followed.

That background shapes how he thinks about access. His team releases its data publicly — nearly 500,000 galaxies, spanning the full history of the universe. The same images used in analysis can be downloaded by anyone.

“The universe isn’t for professors in ivory towers,” Robertson said. “It’s for everyone.”

Read the latest paper by Robertson and his colleagues.