Joseph Fraunhofer was a 19th-century pioneer in optics who brought together scientific research with industrial applications. Fast forward to today and Germany’s Fraunhofer Society — Europe’s largest R&D organization — is setting its sights on the applied research of key technologies, from AI to cybersecurity to medicine.

Its Fraunhofer IML unit is aiming to push the boundaries of logistics and robotics. The German researchers are harnessing NVIDIA Isaac Sim to make advances in robot design through simulation.

Like many others, including BMW, Amazon and Siemens, Fraunhofer IML relies on NVIDIA Omniverse, using it to make gains in applied logistics research for fulfillment and manufacturing.

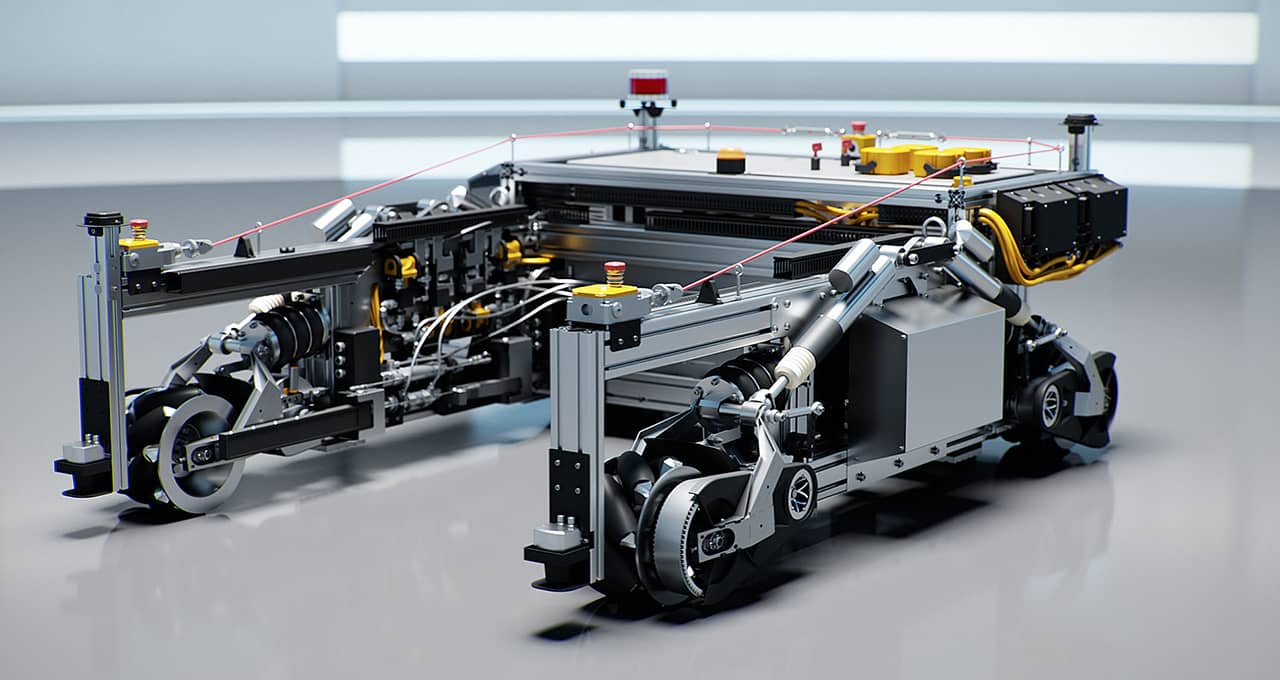

Fraunhofer’s newest innovation, dubbed O3dyn, is an indoor-outdoor autonomous mobile robot (AMR) built with NVIDIA simulation and robotics technologies.

Its goal is to enable the jump from automated guided vehicles to fast-moving AMRs of a kind not yet available on the market.

Automation at this level promises to speed up logistics operations considerably.

“We’re looking at how we can go as fast and as safely as possible in logistics scenarios,” said Julian Esser, a robotics and AI researcher at Fraunhofer IML.

From MP3s to AMRs

Fraunhofer IML is based in Dortmund, in western Germany. Its parent organization, the Fraunhofer Society, has more than 30,000 employees and is involved in hundreds of research projects. In the 1990s, it was responsible for the development of the MP3 file format, which led to the digital music revolution.

Seeking to send the automated guided vehicle along the same path as the compact disc, Fraunhofer in 2013 launched a breakthrough robot now widely used in assembly plants by BMW and others.

This robot, known as the STR, is a workhorse for industrial manufacturing. It moves goods for production lines. Fraunhofer IML’s AI work benefits the STR as well as updates to this robotics platform, such as the O3dyn.

Fraunhofer IML is aiming to create AMRs that deliver a new state of the art. The O3dyn relies on the NVIDIA Jetson edge AI and robotics platform to process a multitude of camera and sensor inputs that help it navigate.

Built for speed and agility, it can travel at up to 30 miles per hour and has AI-assisted wheels that can move in any direction, letting it maneuver in tight spaces.

“The omnidirectional dynamics is very unique, and there’s nothing like this that we know of in the market,” said Sören Kerner, head of AI and autonomous systems at Fraunhofer IML.

Fraunhofer IML gave a sneak peek at its latest development on this pallet-moving robot at NVIDIA GTC.

Bridging Sim to Real

Using Isaac Sim, Fraunhofer IML’s latest research strives to develop and validate these AMRs in simulation by closing the sim-to-real gap. The researchers rely on Isaac Sim for virtual development of their highly dynamic autonomous mobile robot, exercising it in photorealistic, physically accurate 3D worlds.

This enables Fraunhofer to import the robot’s more than 5,400 parts from computer-aided design software into the virtual environment. It can then rig them with physically accurate specifications using Omniverse PhysX.

The result is that the virtual robot version can move as swiftly in simulation as the physical robot in the real world. Harnessing the virtual environment allows Fraunhofer to accelerate development, safely increase accuracy for real-world deployment and scale up faster.

Minimizing the sim-to-real gap turns simulation into a digital reality for robots. It’s a concept Fraunhofer refers to as simulation-based AI.

To make faster gains, Fraunhofer is releasing the AMR simulation model as open source so developers can make improvements.

“This is important for the future of logistics,” said Kerner. “We want to have as many people as possible work on the localization, navigation and AI of these kinds of dynamic robots in simulation.”

Learn more by watching Fraunhofer’s GTC session: “Towards a Digital Reality in Logistics Automation: Optimization of Sim-to-Real.”

Register for the upcoming GTC, running Sept. 19-22, and explore the robotics-related sessions.