AI agent systems today juggle separate models for vision, speech and language, losing time and context as they pass data from one model to another.

Unveiled today, NVIDIA Nemotron 3 Nano Omni is an open multimodal model that brings these capabilities together into one system, enabling agents to deliver faster, smarter responses with advanced reasoning across video, audio, image and text. This best-in-class model gives enterprises and developers a production path for more efficient and accurate multimodal AI agents with full deployment flexibility and control.

Nemotron 3 Nano Omni sets a new efficiency frontier for open multimodal models with leading accuracy and low cost, topping six leaderboards for complex document intelligence, and video and audio understanding.

AI and software companies already adopting Nemotron 3 Nano Omni include Aible, Applied Scientific Intelligence (ASI), Eka Care, Foxconn, H Company, Palantir and Pyler, with Dell Technologies, Docusign, Infosys, K-Dense, Lila, Oracle and Zefr evaluating the model.

“To build useful agents, you can’t wait seconds for a model to interpret a screen,” said Gautier Cloix, CEO of H Company. “By building on Nemotron 3 Nano Omni, our agents can rapidly interpret full HD screen recordings — something that wasn’t practical before. This isn’t just a speed boost: It’s a fundamental shift in how our agents perceive and interact with digital environments in real time.”

Nemotron 3 Nano Omni Enables Faster, Leaner Multimodal Agents

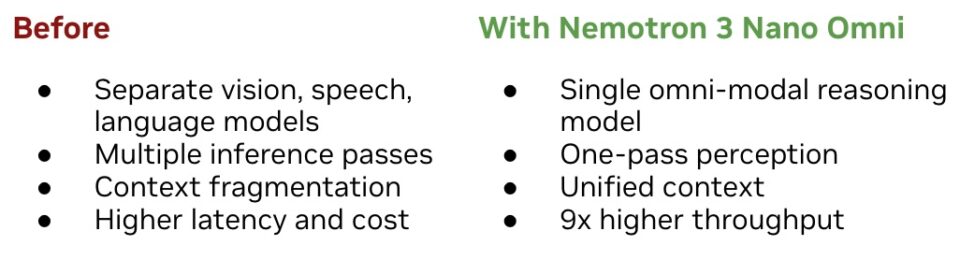

Consider an AI agent for customer support processing a screen recording while analyzing uploaded call audio and checking data logs — or an agent for finance tasked with parsing PDFs, spreadsheets, charts and voice notes. Today, most agentic systems accomplish these tasks with separate models for vision, speech and language.

This approach increases latency through repeated inference passes, fragments context across modalities, and adds cost and inaccuracies over time.

By combining vision and audio encoders within its 30B-A3B hybrid mixture-of-experts architecture, Nemotron 3 Nano Omni eliminates the need for separate perception models, which improves inference efficiency at scale. It pairs this efficiency with strong multimodal perception accuracy, enabling AI systems to achieve 9x higher throughput than other open omni models at the same level of interactivity. The result is lower costs and better scalability without sacrificing responsiveness or quality.

In agentic systems, Nemotron 3 Nano Omni can work alongside other NVIDIA Nemotron open models, such as Nemotron 3 Super for high-frequency execution or Nemotron 3 Ultra for complex planning, or alongside proprietary models from other providers. In these setups it powers sub-agents for workflows such as computer use, document intelligence and audio-video reasoning.

- Computer use agents — Nemotron 3 Nano Omni powers the perception loop for agents navigating graphical user interfaces, reasoning over onscreen content and tracking user interface state over time. H Company’s latest computer use agent, powered by Nemotron 3 Nano Omni, takes input at a native resolution of 1920×1080 pixels for high-fidelity visual reasoning. In preliminary evaluations on the OSWorld benchmark, this integration showed a significant improvement in navigating complex graphical interfaces, drawing on Nemotron 3 Nano Omni’s ability to process very high-resolution images.

- Document intelligence — Nemotron 3 Nano Omni interprets documents, charts, tables, screenshots and mixed-media inputs, enabling agents to reason coherently across visual structure and text content. This is critical for enterprise analysis and compliance workflows.

- Audio and video understanding — For customer service, research and monitoring workflows, Nemotron 3 Nano Omni maintains audio-video context, tying what was said, shown and documented into a single reasoning stream instead of disconnected summaries.

Open and Customizable, Deployable Anywhere

Nemotron 3 Nano Omni is released with open weights, datasets and training techniques — giving organizations full transparency and control over how the model is customized and deployed.

Developers can use tools like NVIDIA NeMo for customization, evaluation and optimization for domain-specific use cases. Because the Nemotron family of models is open, organizations can deploy them in environments that meet regulatory, sovereignty or data localization requirements.

The Nemotron 3 family — including Nano, Super and Ultra models — has seen over 50 million downloads in the past year. Omni extends the family’s capabilities into multimodal and agentic domains.

The model is available on Hugging Face and OpenRouter, as an NVIDIA NIM microservice on build.nvidia.com, and through a broad ecosystem of NVIDIA Cloud Partners, inference platforms and cloud service providers.
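For developers who want a feel for what a multimodal request might look like, here is a minimal sketch in Python. It assumes the hosted endpoint accepts the widely used OpenAI-style chat completions format with mixed text and image content parts. The announcement does not specify a request format, and the model identifier below is hypothetical, so check the provider's documentation for the actual values. The sketch only builds the request body and does not send anything over the network.

```python
import base64
import json

# Hypothetical model identifier: the announcement does not give one.
MODEL_ID = "nvidia/nemotron-3-nano-omni"


def build_image_request(prompt: str, image_bytes: bytes,
                        mime_type: str = "image/png") -> dict:
    """Build an OpenAI-style chat payload with one text part and one image part.

    Assumption: the serving endpoint accepts `image_url` content parts that
    carry a base64 data URL, as OpenAI-compatible chat APIs commonly do.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{mime_type};base64,{encoded}"
    return {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real screenshot file.
    payload = build_image_request(
        "Which button is highlighted in this screenshot?",
        b"placeholder image bytes",
    )
    print(json.dumps(payload, indent=2))
```

To use it, the resulting dictionary would be sent as the JSON body of a POST request to the provider's chat completions URL, along with an API key. Audio and video inputs are left out here because their content-part formats vary more between providers.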

Its open, lightweight architecture supports consistent deployment from local systems like NVIDIA Jetson hardware, NVIDIA DGX Spark and DGX Station to data center and cloud environments.

Visit the NVIDIA technical blog for tutorials, cookbooks and deployment guides for Nemotron 3 Nano Omni use cases.