Creating 3D objects to build scenes for games, virtual worlds (including the metaverse), product design or visual effects has traditionally been a meticulous process, in which skilled artists balance detail and photorealism against deadlines and budget pressures.

It takes a long time to make something that looks and acts as it would in the physical world. And the problem gets harder when multiple objects and characters need to interact in a virtual world. Simulating physics becomes just as important as simulating light. A robot in a virtual factory, for example, needs to have not only the same look, but also the same weight capacity and braking capability as its physical counterpart.

It’s hard. But the opportunities are huge, affecting trillion-dollar industries as varied as transportation, healthcare, telecommunications and entertainment, in addition to product design. Ultimately, more content will be created in the virtual world than in the physical one.

To simplify and shorten this process, NVIDIA today released new research and a broad suite of tools that apply the power of neural graphics to the creation and animation of 3D objects and worlds.

These SDKs, including NeuralVDB (a groundbreaking update to the industry-standard OpenVDB) and Kaolin Wisp (a PyTorch library establishing a framework for neural fields research), ease the creative process for designers while making it easy for millions of users who aren’t design professionals to create 3D content.

Neural graphics is a new field intertwining AI and graphics to create an accelerated graphics pipeline that learns from data. Integrating AI enhances results, helps automate design choices and provides new, yet-to-be-imagined opportunities for artists and creators. Neural graphics will redefine how virtual worlds are created, simulated and experienced by users.

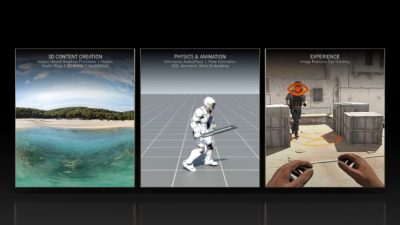

These SDKs and research contribute to each stage of the content creation pipeline, including:

3D Content Creation

- Kaolin Wisp – an addition to Kaolin, a PyTorch library that speeds up 3D deep learning research by cutting the time needed to test and implement new techniques from weeks to days. Kaolin Wisp is a research-oriented library that establishes a common suite of tools and a framework to accelerate new research in neural fields.

- Instant Neural Graphics Primitives – a new approach to capturing the shape of real-world objects, and the inspiration behind NVIDIA Instant NeRF, an inverse rendering model that turns a collection of still images into a digital 3D scene. This technique and associated GitHub code accelerate the process by up to 1,000x.

- 3D MoMa – a new inverse rendering pipeline that allows users to quickly import a 2D object into a graphics engine to create a 3D object that can be modified with realistic materials, lighting and physics.

- GauGAN360 – the next evolution of NVIDIA GauGAN, an AI model that turns rough doodles into photorealistic masterpieces. GauGAN360 generates 8K, 360-degree panoramas that can be ported into Omniverse scenes.

- Omniverse Avatar Cloud Engine (ACE) – a new collection of cloud APIs, microservices and tools to create, customize and deploy digital human applications. ACE is built on NVIDIA’s Unified Compute Framework, allowing developers to seamlessly integrate core NVIDIA AI technologies into their avatar applications.

Physics and Animation

- NeuralVDB – a groundbreaking improvement on OpenVDB, the current industry standard for volumetric data storage. Using machine learning, NeuralVDB introduces compact neural representations, dramatically reducing memory footprint to allow for higher-resolution 3D data.

- Omniverse Audio2Face – an AI technology that generates expressive facial animation from a single audio source. It’s useful for interactive real-time applications and as a traditional facial animation authoring tool.

- ASE: Animation Skills Embedding – an approach enabling physically simulated characters to act in a more responsive and lifelike manner in unfamiliar situations. It uses deep learning to teach characters how to respond to new tasks and actions.

- TAO Toolkit – a framework that lets users create an accurate, high-performance pose estimation model, which uses computer vision to evaluate what a person might be doing in a scene much more quickly than current methods.

Experience

- Image Features Eye Tracking – a research model linking the quality of pixel rendering to a user’s reaction time. By predicting the best combination of rendering quality, display properties and viewing conditions for the least latency, it will allow for better performance in fast-paced, interactive computer graphics applications such as competitive gaming.

- Holographic Glasses for Virtual Reality – a collaboration with Stanford University on a new VR glasses design that delivers full-color 3D holographic images in a groundbreaking 2.5-mm-thick optical stack.

Join NVIDIA at SIGGRAPH to see more of the latest research and technology breakthroughs in graphics, AI and virtual worlds. Check out the latest innovations from NVIDIA Research, and access the full suite of NVIDIA’s SDKs, tools and libraries.