High performance computing and AI are accelerating the scientific discoveries that shape our future.

From weather forecasting and energy production to precision agriculture and life sciences, researchers are fusing traditional simulations with AI, machine learning, deep learning, big data analytics and edge computing to solve the world’s greatest challenges.

Running May 29-June 2, ISC High Performance 2022 gathers thousands for a hybrid conference held online and in Hamburg, Germany. The international supercomputing conference will feature keynote speakers, talks, demos, workshops and tutorials showcasing the latest breakthroughs in HPC and AI.

1. Hear From Technology Leaders

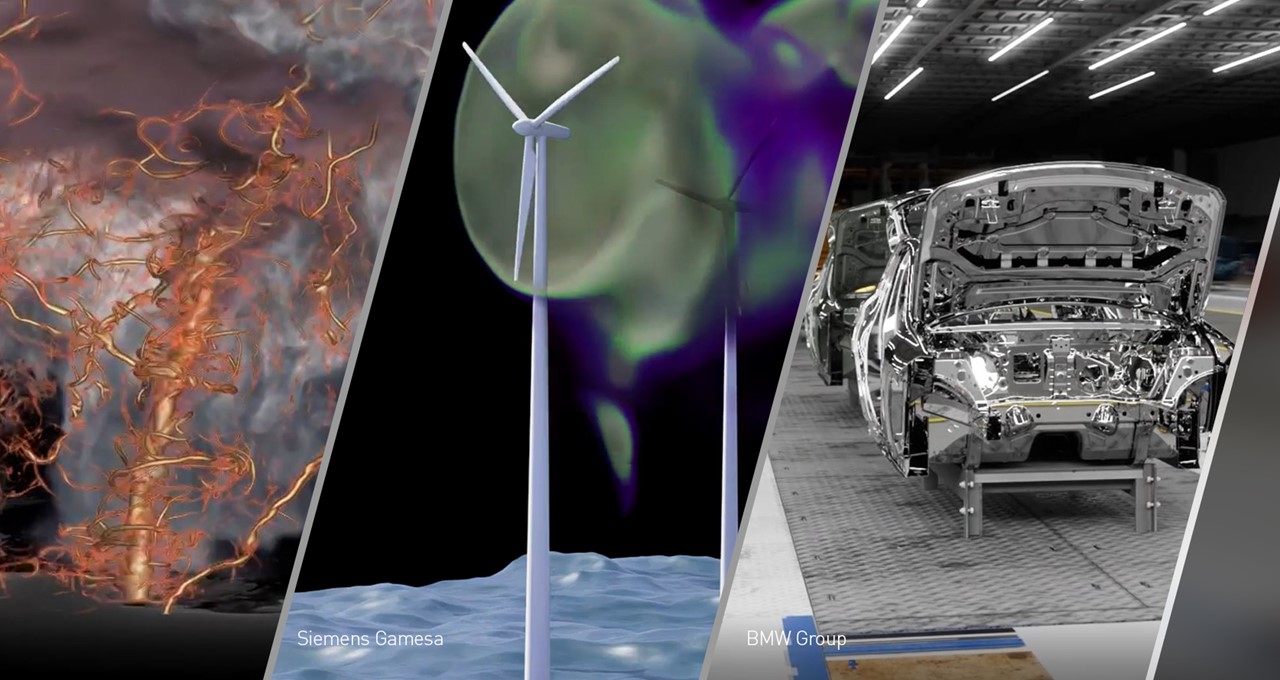

ISC kicks off with the keynote address, this year delivered by Rev Lebaredian, vice president of Omniverse and simulation technology at NVIDIA, who’ll be joined by Michele Melchiorre, senior vice president of production system, technical planning, tool shop and plant construction at BMW Group.

They’ll cover how technology like NVIDIA Omniverse Enterprise has led to a big bang in large-scale, virtual world and digital twin simulation. Discover how digital twins enable the next era of industrial virtualization and AI. Plus, Melchiorre will give attendees the opportunity to explore BMW’s virtual production system.

Catch the keynote, “Supercomputing: The Key to Unlocking the Next Level of Digital Twins,” on Monday, May 30, at 9:15 a.m. Central European time online or in hall 4.

ISC’s opening day ends with the NVIDIA special address delivered by Ian Buck, vice president of accelerated computing at NVIDIA. Learn about the latest technologies and innovations transforming the world of AI and computational science, from the data center to the cloud to the edge.

Watch his special address, “Accelerating a New Wave of AI Innovation and Scientific Discovery,” on Monday, May 30, at 6:30 p.m. CET in hall 4.

2. Learn From NVIDIA Experts

NVIDIA technology powers eight of the top 10 supercomputers in the world, as well as almost 70 percent of the TOP500 list.

Attend these key ISC sessions hosted by NVIDIA experts, in person or online:

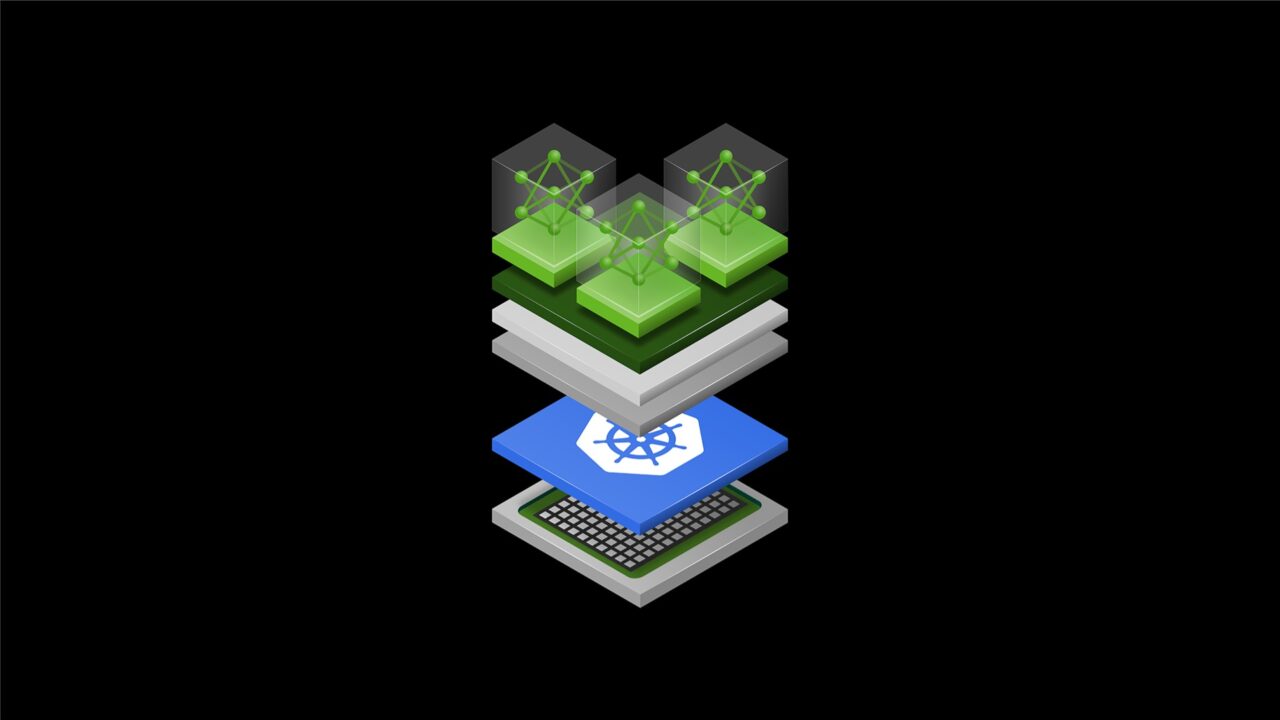

- Join the tutorial from NVIDIA Deep Learning Institute experts on “Accelerating Scientific Computing Workflows Through GPU-Based Quantum-Classical Programming, Compilation, and Simulation.”

- Tom Gibbs, senior business development manager at NVIDIA, joins a panel to discuss the question: “Have Artificial Intelligence and Machine Learning Taken Us into the Post-Algorithmic Era?”

- Gilad Shainer, senior vice president of networking at NVIDIA, delivers the birds of a feather session, “InfiniBand In-Network Computing Technology and Roadmap.”

- Thorsten Kurth, senior software engineer at NVIDIA, joins a panel to talk through “MLPerf: A Benchmark for Machine Learning.”

- Mozhgan Kabiri Chimeh, GPU developer advocate at NVIDIA, hosts the “Women in HPC: Creating a Diverse and Inclusive Community” workshop.

Check out these sessions and more in NVIDIA’s ISC schedule.

3. See the Technology in Action

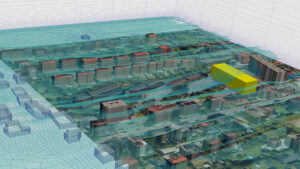

Industry leaders, scientists and researchers will present a variety of ISC demos that explain their groundbreaking work using GPU and HPC technologies.

The demos, available at in-person partner booths and online, explore how NVIDIA technologies are bringing new capabilities to industries. NVIDIA GPUs are being used to view, measure and track plant growth, predict global weather extremes, sequence genomes at record-breaking speeds and more.

4. Master New Skills

Developers are changing the world, one line of code at a time.

Learn key skills in accelerated computing at a Deep Learning Institute tutorial at ISC, and get an exclusive discount on upcoming hands-on workshops in May. Attendees of the NVIDIA special address will also get complimentary codes for online, self-paced courses.

Student teams will battle it out in the virtual student cluster competition, which lets them show off their HPC knowledge. They’ll design state-of-the-art systems that must achieve the highest performance across a series of benchmarks and challenges, while adhering to the competition’s strict power constraints.

This year, 17 student teams from all continents will participate, designing and building virtual clusters using the NVIDIA Quantum InfiniBand platform and NVIDIA GPUs.

The student cluster competition award ceremony takes place on Wednesday, June 1, at 5:25 p.m. CET.

Keep up to date on all things ISC on NVIDIA’s event page and follow NVIDIA Europe on social media @NVIDIAEU.