Editor’s note: This post is part of the AI Decoded series, which demystifies AI by making the technology more accessible and showcases new hardware, software, tools and accelerations for NVIDIA RTX PC and workstation users.

In the rapidly evolving world of artificial intelligence, generative AI is captivating imaginations and transforming industries. Behind the scenes, an unsung hero is making it all possible: microservices architecture.

The Building Blocks of Modern AI Applications

Microservices have emerged as a powerful architecture, fundamentally changing how people design, build and deploy software.

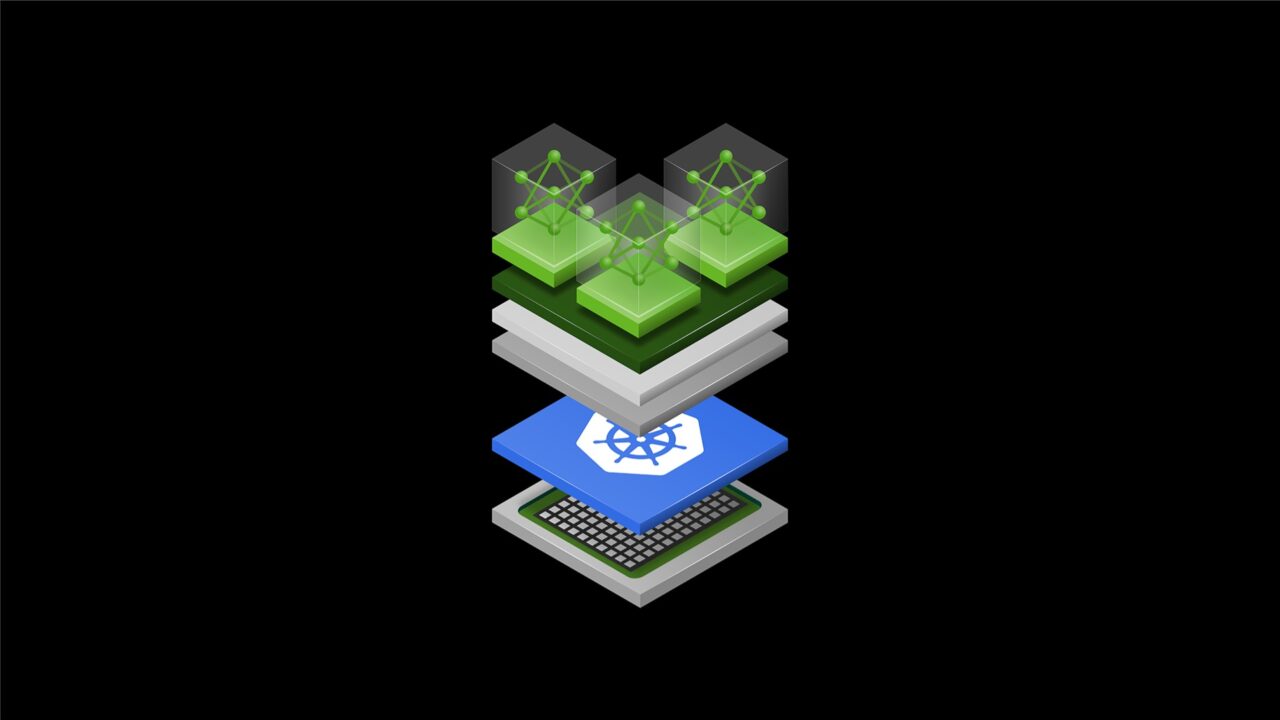

A microservices architecture breaks down an application into a collection of loosely coupled, independently deployable services. Each service is responsible for a specific capability and communicates with other services through well-defined application programming interfaces, or APIs. This modular approach stands in stark contrast to traditional all-in-one architectures, in which all functionality is bundled into a single, tightly integrated application.

By decoupling services, teams can work on different components simultaneously, accelerating development processes and allowing updates to be rolled out independently without affecting the entire application. Developers can focus on building and improving specific services, leading to better code quality and faster problem resolution. Such specialization allows developers to become experts in their particular domain.

Services can be scaled independently based on demand, optimizing resource utilization and improving overall system performance. In addition, different services can use different technologies, allowing developers to choose the best tools for each specific task.

A Perfect Match: Microservices and Generative AI

The microservices architecture is particularly well-suited for developing generative AI applications due to its scalability, enhanced modularity and flexibility.

AI models, especially large language models, require significant computational resources. Microservices allow for efficient scaling of these resource-intensive components without affecting the entire system.

Generative AI applications often involve multiple steps, such as data preprocessing, model inference and post-processing. Microservices enable each step to be developed, optimized and scaled independently. Plus, as AI models and techniques evolve rapidly, a microservices architecture allows for easier integration of new models as well as the replacement of existing ones without disrupting the entire application.
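To make the idea of independent, replaceable steps concrete, here is a minimal in-process sketch. It is not how any particular product is built: the `Pipeline` class, the stage names and the `echo_model` stand-in are all hypothetical, and in a real microservices deployment each stage would be a separate service behind its own API rather than a local function.

```python
from typing import Callable, Dict

# A stage takes text in and returns text out. In a real deployment each
# stage would be its own service; plain functions stand in for them here.
Stage = Callable[[str], str]


def preprocess(text: str) -> str:
    """Normalize whitespace before the prompt reaches the model."""
    return " ".join(text.split())


def echo_model(prompt: str) -> str:
    """Hypothetical stand-in for a model-inference service."""
    return f"[model-v1] {prompt}"


def postprocess(text: str) -> str:
    """Trim the raw model output for display."""
    return text.strip()


class Pipeline:
    """Runs named stages in order and lets any single stage be swapped."""

    def __init__(self, stages: Dict[str, Stage]) -> None:
        self.stages = dict(stages)  # dicts preserve insertion order

    def replace(self, name: str, stage: Stage) -> None:
        if name not in self.stages:
            raise KeyError(name)
        self.stages[name] = stage  # position in the pipeline is unchanged

    def run(self, text: str) -> str:
        for stage in self.stages.values():
            text = stage(text)
        return text


pipeline = Pipeline(
    {"preprocess": preprocess, "inference": echo_model, "postprocess": postprocess}
)
first = pipeline.run("  hello   world ")

# Swap in a newer model without touching the other two stages.
pipeline.replace("inference", lambda prompt: f"[model-v2] {prompt}")
second = pipeline.run("  hello   world ")
```

Only the inference stage changes between the two runs, which mirrors the point above: a new model can replace an existing one while preprocessing and post-processing stay as they are.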

NVIDIA NIM: Simplifying Generative AI Deployment

As the demand for AI-powered applications grows, developers face challenges in efficiently deploying and managing AI models.

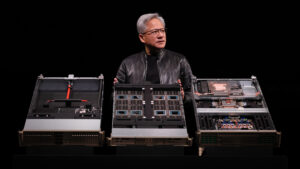

NVIDIA NIM inference microservices provide models as optimized containers to deploy in the cloud, data centers, workstations, desktops and laptops. Each NIM container includes the pretrained AI models and all the necessary runtime components, making it simple to integrate AI capabilities into applications.

For application developers looking to incorporate AI functionality, NIM provides simplified integration, production-readiness and flexibility. Because NIM inference microservices ship with performance and runtime optimizations and support industry-standard APIs, developers can focus on building their applications without worrying about the complexities of data preparation, model training or customization.
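As an illustration of what calling a model through a standard HTTP API can look like, the sketch below builds a chat-style request using only the Python standard library. The article does not specify the API shape, so everything concrete here is an assumption: the base URL, the `/chat/completions` route, the request and response fields, and the `MODEL_NAME` placeholder should all be checked against the documentation for the service you actually deploy.

```python
import json
import urllib.request

# Assumptions (not from the article): the base URL, the route and the model
# name are placeholders for whatever your deployment documents.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "example/model-name"


def build_chat_request(prompt: str, max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) a JSON chat request for the assumed endpoint."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> str:
    """Send the request and return the first reply; needs a running service."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # Assumed response shape: a list of choices, each holding a message.
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Summarize microservices in one sentence.")
```

Calling `send(request)` only works when a compatible service is listening at `BASE_URL`. Keeping request construction separate from sending makes the payload easy to inspect and test without a running model.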

AI at Your Fingertips: NVIDIA NIM on Workstations and PCs

Building enterprise generative AI applications comes with many challenges. While cloud-hosted model APIs can help developers get started, issues related to data privacy, security, model response latency, accuracy, API costs and scaling often hinder the path to production.

Workstations with NIM provide developers with secure access to a broad range of models and performance-optimized inference microservices.

By avoiding the latency, cost and compliance concerns associated with cloud-hosted APIs, as well as the complexities of model deployment, developers can focus on application development. This accelerates the delivery of production-ready generative AI applications, which can then scale out automatically with optimized performance in data centers and the cloud.

The Meta Llama 3 8B model is now generally available as a NIM that can run locally on RTX systems. This brings state-of-the-art language model capabilities to individual developers, enabling local testing and experimentation without the need for cloud resources. With NIM running locally, developers can create sophisticated retrieval-augmented generation (RAG) projects right on their workstations.

Local RAG refers to implementing RAG systems entirely on local hardware, without relying on cloud-based services or external APIs.

Developers can use the Llama 3 8B NIM on workstations with one or more NVIDIA RTX 6000 Ada Generation GPUs or on NVIDIA RTX systems to build end-to-end RAG systems entirely on local hardware. This setup allows developers to tap the full power of Llama 3 8B, ensuring high performance and low latency.

By running the entire RAG pipeline locally, developers can maintain complete control over their data, ensuring privacy and security. This approach is particularly helpful for developers building applications that require real-time responses and high accuracy, such as customer-support chatbots, personalized content-generation tools and interactive virtual assistants.
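The retrieve-then-generate flow behind local RAG can be sketched in a few lines. This is a toy under stated assumptions: word-count vectors and cosine similarity stand in for a real embedding model and vector database, the sample documents are made up for illustration, and the final prompt would be sent to a locally running model, a step left out here.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a real model)."""
    return Counter(tokenize(text))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)  # missing tokens count as 0
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        documents, key=lambda doc: cosine(query_vector, embed(doc)), reverse=True
    )
    return ranked[:k]


def build_prompt(query: str, context: List[str]) -> str:
    """Combine retrieved passages and the question into one prompt."""
    joined = "\n".join(f"- {passage}" for passage in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


# Illustrative documents; in practice these come from your own local data.
DOCUMENTS = [
    "NIM containers include pretrained models and runtime components.",
    "Microservices are loosely coupled and independently deployable.",
    "Riva provides speech recognition and text-to-speech.",
]

question = "What do NIM containers include?"
context = retrieve(question, DOCUMENTS, k=1)
prompt = build_prompt(question, context)
```

Because the documents, the retrieval step and the prompt all stay in local memory, nothing leaves the machine until the developer chooses to send the prompt somewhere, which is the privacy property described above.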

Hybrid RAG combines local and cloud-based resources to optimize performance and flexibility in AI applications. With NVIDIA AI Workbench, developers can get started with the hybrid-RAG Workbench Project — an example application that can be used to run vector databases and embedding models locally while performing inference using NIM in the cloud or data center, offering a flexible approach to resource allocation.

This hybrid setup allows developers to balance the computational load between local and cloud resources, optimizing performance and cost. For example, the vector database and embedding models can be hosted on local workstations to ensure fast data retrieval and processing, while the more computationally intensive inference tasks can be offloaded to powerful cloud-based NIM inference microservices. This flexibility enables developers to scale their applications seamlessly, accommodating varying workloads and ensuring consistent performance.
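One way to picture the hybrid split is as a routing table that maps each pipeline stage to where it runs. The sketch below is hypothetical and is not taken from the hybrid-RAG Workbench Project: the `Endpoint` type, the stage names and every URL are invented for illustration.

```python
from dataclasses import dataclass, replace
from typing import Dict


@dataclass(frozen=True)
class Endpoint:
    base_url: str
    location: str  # "local" or "remote"


# Hypothetical layout: retrieval stays local, inference runs remotely.
HYBRID: Dict[str, Endpoint] = {
    "embedding": Endpoint("http://localhost:9001", "local"),
    "vector_db": Endpoint("http://localhost:9002", "local"),
    "inference": Endpoint("https://nim.example.com/v1", "remote"),
}


def route(stage: str, endpoints: Dict[str, Endpoint]) -> Endpoint:
    """Look up where a pipeline stage should send its requests."""
    try:
        return endpoints[stage]
    except KeyError:
        raise ValueError(f"no endpoint configured for stage {stage!r}") from None


def with_local_inference(
    endpoints: Dict[str, Endpoint], base_url: str
) -> Dict[str, Endpoint]:
    """Return a copy of the table with inference pointed at a local service."""
    updated = dict(endpoints)
    updated["inference"] = replace(
        endpoints["inference"], base_url=base_url, location="local"
    )
    return updated


all_local = with_local_inference(HYBRID, "http://localhost:8000/v1")
remote_stages = sorted(
    name for name, endpoint in HYBRID.items() if endpoint.location == "remote"
)
```

Since the application only ever asks `route` for an endpoint, moving inference between a workstation and the cloud becomes a configuration change rather than a code change.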

NVIDIA ACE NIM inference microservices bring digital humans, AI non-playable characters (NPCs) and interactive avatars for customer service to life with generative AI, running on RTX PCs and workstations.

ACE NIM inference microservices for speech — including Riva automatic speech recognition, text-to-speech and neural machine translation — allow accurate transcription, translation and realistic voices.

The NVIDIA Nemotron small language model is a NIM for intelligence that includes INT4 quantization for minimal memory usage and supports roleplay and RAG use cases.

And ACE NIM inference microservices for appearance include Audio2Face and Omniverse RTX for lifelike animation with ultrarealistic visuals. These provide more immersive and engaging gaming characters, as well as more satisfying experiences for users interacting with virtual customer-service agents.

Dive Into NIM

As AI progresses, the ability to rapidly deploy and scale its capabilities will become increasingly crucial.

NVIDIA NIM microservices provide the foundation for this new era of AI application development. Whether they are building the next generation of AI-powered games, developing advanced natural language processing applications or creating intelligent automation systems, developers have these tools at their fingertips.

Ways to get started:

- Experience and interact with NVIDIA NIM microservices on ai.nvidia.com.

- Join the NVIDIA Developer Program and get free access to NIM for testing and prototyping AI-powered applications.

- Buy an NVIDIA AI Enterprise license, which comes with a free 90-day evaluation period for production deployment, and use NVIDIA NIM to self-host AI models in the cloud or in data centers.

Generative AI is transforming gaming, videoconferencing and interactive experiences of all kinds. Make sense of what’s new and what’s next by subscribing to the AI Decoded newsletter.