Editor’s note: This post is part of our weekly In the NVIDIA Studio series, which celebrates featured artists, offers creative tips and tricks, and demonstrates how NVIDIA Studio technology improves creative workflows. We’re also deep diving on new GeForce RTX 40 Series GPU features, technologies and resources, and how they dramatically accelerate content creation.

RTX Video HDR — first announced at CES — is now available for download through the January Studio Driver. It uses AI to transform standard dynamic range video playing in internet browsers into stunning high dynamic range (HDR) on HDR10 displays.

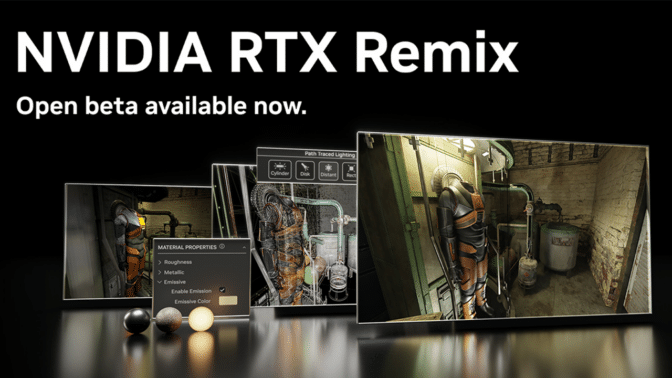

PC game modders now have a powerful new set of tools to use with the release of the NVIDIA RTX Remix open beta.

It features full ray tracing, NVIDIA DLSS, NVIDIA Reflex, modern physically based rendering assets and generative AI texture tools so modders can remaster games more efficiently than ever.

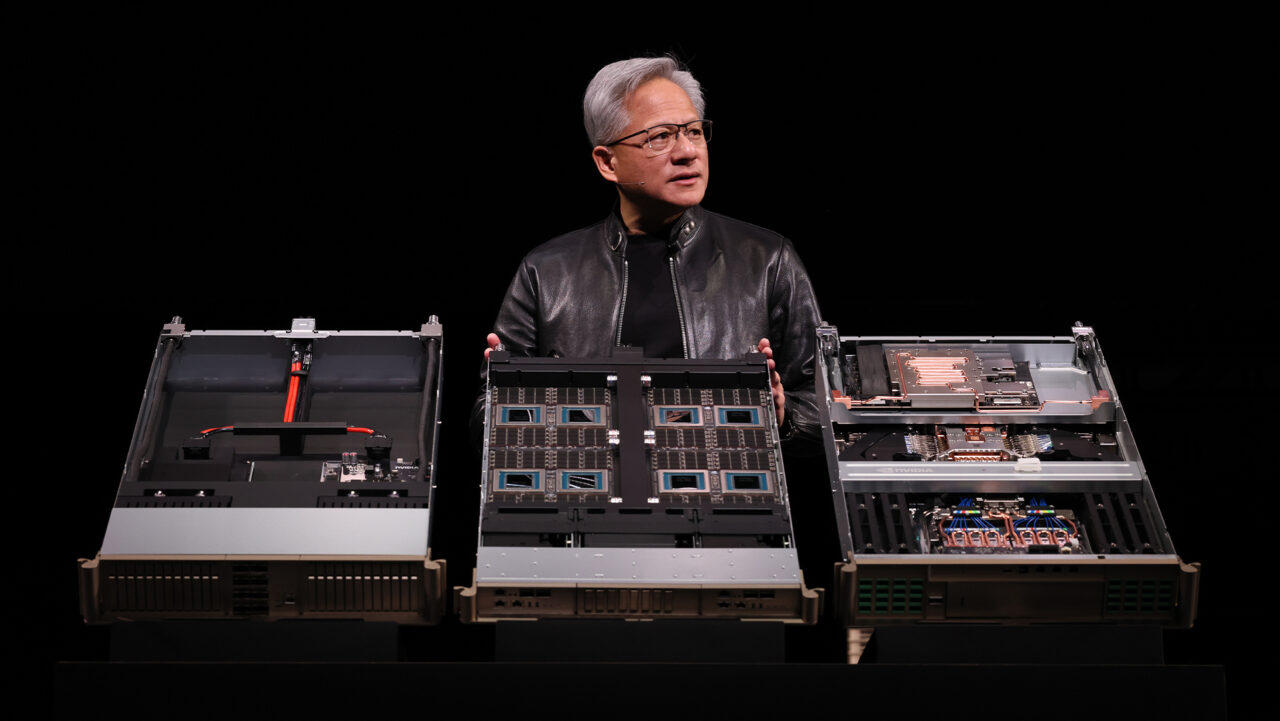

Pick up the new GeForce RTX 4070 Ti SUPER, available from custom board partners in stock-clocked and factory-overclocked configurations, to enhance creating, gaming and AI tasks.

Part of the 40 SUPER Series announced at CES, it’s equipped with more CUDA cores than the RTX 4070, a frame buffer increased to 16GB, and a 256-bit bus — perfect for video editing and rendering large 3D scenes. It runs up to 1.6x faster than the RTX 3070 Ti and 2.5x faster with DLSS 3 in the most graphics-intensive games.

And this week’s featured In the NVIDIA Studio technical artist Vishal Ranga shares his vivid 3D scene Disowned — powered by NVIDIA RTX and Unreal Engine with DLSS.

RTX Video HDR Delivers Dazzling Detail

Using the power of Tensor Cores on GeForce RTX GPUs, RTX Video HDR allows gamers and creators to maximize their HDR panel’s ability to display vivid, dynamic colors, preserving intricate details that may be inadvertently lost due to video compression.

RTX Video HDR and RTX Video Super Resolution can be used together to produce the clearest streamed video anywhere, anytime. These features work on Chromium-based browsers such as Google Chrome or Microsoft Edge.

To enable RTX Video HDR:

- Download and install the January Studio Driver.

- Ensure Windows HDR features are enabled by navigating to System > Display > HDR.

- Open the NVIDIA Control Panel and navigate to Adjust video image settings > RTX Video Enhancement — then enable HDR.

Standard dynamic range video will then automatically convert to HDR, displaying remarkably improved details and sharpness.

RTX Video HDR is among the RTX-powered apps enhancing everyday PC use, productivity, creating and gaming. NVIDIA Broadcast supercharges mics and cams; NVIDIA Canvas turns simple brushstrokes into realistic landscape images; and NVIDIA Omniverse seamlessly connects 3D apps and creative workflows. Explore exclusive Studio tools, including industry-leading NVIDIA Studio Drivers — free for RTX graphics card owners — which support the latest creative app updates, AI-powered features and more.

RTX Video HDR requires an RTX GPU connected to an HDR10-compatible monitor or TV. For additional information, check out the RTX Video FAQ.

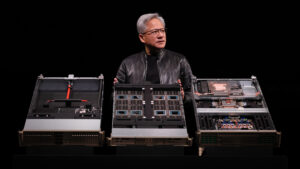

Introducing the Remarkable RTX Remix Open Beta

Built on NVIDIA Omniverse, the RTX Remix open beta is available now.

It allows modders to easily capture game assets, automatically enhance materials with generative AI tools, reimagine assets via Omniverse-connected apps and Universal Scene Description (OpenUSD), and quickly create stunning RTX remasters of classic games with full ray tracing and NVIDIA DLSS technology.

RTX Remix has already delivered stunning remasters, such as Portal with RTX and the modder-made Portal: Prelude RTX. Orbifold Studios is now using the technology to develop Half-Life 2 RTX: An RTX Remix Project, a community remaster of one of the highest-rated games of all time. The gameplay trailer showcases Orbifold Studios’ latest updates to Ravenholm.

Learn more about the RTX Remix open beta and sign up to gain access.

Leveling Up With RTX

Vishal Ranga has a decade’s worth of experience in the gaming industry, where he focuses on level design.

“I’ve loved playing video games since forever, and that curiosity led me to game design,” he said. “A few years later, I found my sweet spot in technical art.”

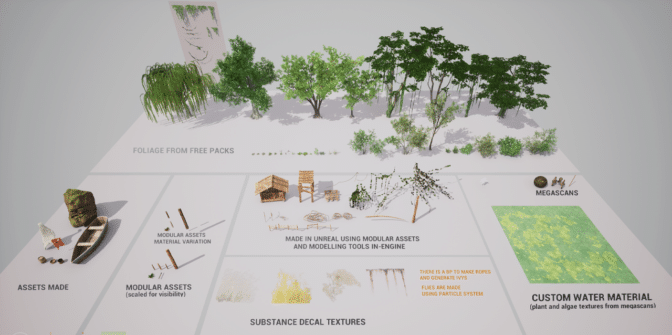

His stunning scene Disowned was born out of experimentation with Unreal Engine’s new ray-traced global illumination lighting capabilities.

Remarkably, he skipped the concepting process — the entire project was conceived solely from Ranga’s imagination.

Applying the water shader and mocking up the lighting early helped Ranga set up the mood of the scene. He then updated old assets and searched the Unreal Engine store for new ones — what he couldn’t find, like fishing nets and custom flags, he created from scratch.

“I chose a GeForce RTX GPU to use ray-traced dynamic global illumination with RTX cards for natural, more realistic light bounces.” — Vishal Ranga

Ranga’s GeForce RTX graphics card unlocked RTX-accelerated rendering for high-fidelity, interactive visualization of 3D designs during virtual production.

Next, he tackled shader work, blending moss and muck into models of wood, nets and flags. He also created a volumetric local fog shader to complement the assets as they pass through the fog, adding greater depth to the scene.

Ranga then polished everything up. He first used a water shader to add realism to reflections, surface moss and subtle waves, then tinkered with global illumination and reflection effects, along with other post-process settings.

Ranga used Unreal Engine’s built-in high-resolution screenshot feature and Sequencer to capture renders, raising the screen resolution to 200% for crisper details.
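To get a feel for what a 200% capture costs, here is a minimal arithmetic sketch in Python. It assumes the 200% figure scales each axis of the viewport, which is how a per-axis screen percentage typically behaves; the article does not state Ranga's actual viewport size, so the 1920x1080 base below is purely hypothetical, and the function names are illustrative rather than part of any Unreal Engine API.

```python
def scaled_resolution(width: int, height: int, screen_percentage: float) -> tuple[int, int]:
    """Return the render resolution when each axis is scaled by screen_percentage."""
    scale = screen_percentage / 100.0
    return round(width * scale), round(height * scale)


def pixel_ratio(screen_percentage: float) -> float:
    """Return how many times more pixels are rendered compared with 100%."""
    scale = screen_percentage / 100.0
    return scale * scale


if __name__ == "__main__":
    # Hypothetical base viewport; not taken from the article.
    base_width, base_height = 1920, 1080
    print(scaled_resolution(base_width, base_height, 200))  # (3840, 2160)
    print(pixel_ratio(200))  # 4.0
```

Under that assumption, doubling each axis quadruples the pixel count, which is why such captures look crisper but take noticeably more GPU work per frame.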

Throughout, DLSS enhanced Ranga’s creative workflow, allowing for smooth scene movement while maintaining immaculate visual quality.

When finished with adjustments, Ranga exported the final scene in no time thanks to his RTX GPU.

Ranga encourages budding artists who are excited by the latest creative advances but wondering where to begin to “practice your skills, prioritize the basics.”

“Take the time to practice and really experience the highs and lows of the creation process,” he said. “And don’t forget to maintain good well-being to maximize your potential.”

Check out Ranga’s portfolio on ArtStation.

Follow NVIDIA Studio on Instagram, X and Facebook. Access tutorials on the Studio YouTube channel and get updates directly in your inbox by subscribing to the Studio newsletter.