The fastest supercomputers are driven by x86 and Power architectures in conjunction with NVIDIA GPUs. But with sites in China, Europe and Japan working on their first exascale systems powered by Arm processors, the energy-efficient CPU architecture is gaining adoption in the tier 1 high performance computing space.

As sites diversify, NVIDIA’s NGC container registry helps system admins reduce the complexity of managing clusters by simplifying application deployments on their Arm systems.

Containers eliminate the need to build and manage complex environment modules and allow users to deploy applications faster and get reproducible results without impacting performance.

To give users faster access and higher productivity, NVIDIA will enable NGC deep learning containers to run on Singularity.

NGC Containers Improve Productivity

The NGC registry offers containers for HPC applications, deep learning frameworks, machine learning algorithms and scientific visualization tools. These containers are optimized for performance, support multi-GPU and multi-node systems, and are tested to run on various platforms including workstations, servers and cloud environments.

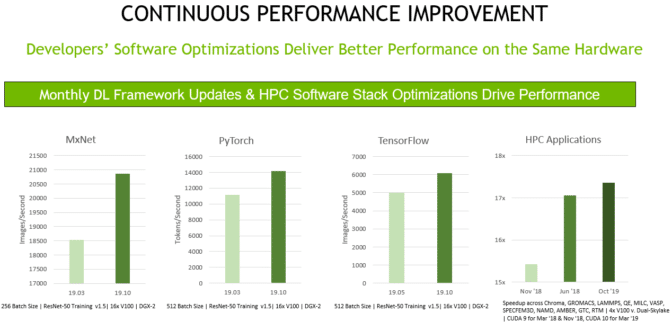

Deep learning frameworks like TensorFlow, PyTorch, MXNet and NVCaffe are continuously updated for performance and released monthly on NGC, so users can build larger, faster and more accurate networks. HPC application containers are built by the applications' developers, with new releases giving users easy access to the latest features and better performance on the same hardware.

Deep Learning Containers Run in Singularity

Scientists are increasingly using AI in their research, requiring system admins to navigate complex installations of frequently released deep learning frameworks.

Singularity is one of the most popular container runtimes in HPC because it allows users to pull and run containers in unprivileged mode on a multi-tenant system. The lack of support for Singularity has discouraged HPC researchers and system admins from using deep learning containers from NGC.

We’re now extending support, in beta, for the Singularity runtime to NGC’s deep learning containers, starting with v19.11. We previously announced Singularity support for the HPC containers from NGC.

Singularity-specific instructions are documented in each container's README so users can easily deploy NGC containers in their HPC environments.

Building the Arm Ecosystem

The Arm platform is rapidly improving as various building blocks from storage to networking to GPU processing start working together. In addition to the hardware infrastructure, developers of the top HPC and visualization applications have ported their code to run on Arm.

To further simplify the use of accelerated HPC applications on GPU-powered systems, we’re expanding NGC to offer HPC, deep learning and visualization containers on Arm. These containers are tested on Singularity, allowing system admins to easily support their users.

Easily Deploy Julia on x86 and Arm

Julia is an open source programming language for HPC. Now available through containers for x86 and Arm, Julia can be used for GPU programming by writing CUDA kernels in Julia or by using the powerful array programming model.

Julia offers packages that make up a comprehensive HPC ecosystem covering machine learning, data science, various scientific domains and visualization.

An Ecosystem of Container Tools for HPC Clusters

Some supercomputing centers prefer to build and host their own containers. HPC Container Maker (HPCCM) is an open-source project that simplifies the development of HPC application containers.

HPCCM encapsulates the best practices for deploying core HPC components into modular building blocks, follows container best practices, minimizes image size and takes advantage of other container capabilities.
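To make the idea of modular building blocks concrete, here is a minimal Python sketch that composes reusable fragments into a Singularity definition file. It does not use HPCCM's actual API: the `BuildingBlock` class, the `singularity_definition` function, the package names and the `/opt/myapp` path are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BuildingBlock:
    """Hypothetical reusable recipe fragment (not HPCCM's API)."""
    name: str
    post: List[str] = field(default_factory=list)         # shell commands for %post
    environment: List[str] = field(default_factory=list)  # entries for %environment


def singularity_definition(base_image: str, blocks: List[BuildingBlock]) -> str:
    """Render a Singularity definition file from a base image and blocks."""
    lines = ["Bootstrap: docker", f"From: {base_image}", "", "%post"]
    for block in blocks:
        lines.append(f"    # {block.name}")
        lines.extend(f"    {cmd}" for cmd in block.post)
    env = [entry for block in blocks for entry in block.environment]
    if env:
        lines += ["", "%environment"]
        lines.extend(f"    export {entry}" for entry in env)
    return "\n".join(lines) + "\n"


# Illustrative blocks; package names and paths are assumptions.
compilers = BuildingBlock(
    name="GNU compilers",
    post=[
        "apt-get update -y",
        "apt-get install -y --no-install-recommends gcc g++ gfortran",
        "rm -rf /var/lib/apt/lists/*",  # clean up to keep the image small
    ],
)
app = BuildingBlock(
    name="Application (hypothetical install location)",
    post=["mkdir -p /opt/myapp/bin"],
    environment=["PATH=/opt/myapp/bin:$PATH"],
)

recipe = singularity_definition("ubuntu:18.04", [compilers, app])
print(recipe)
```

Each block carries its own install and cleanup steps, so the same fragment can be reused across recipes. HPCCM's real building blocks go further, for example by generating output for more than one container format from a single recipe.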

HPC system admins managing air-gapped systems can now automatically download and save the latest versions of the NGC containers in Singularity format with NGC Container Replicator. This gives users faster access to the software, reduces network traffic and saves storage space.

NGC for HPC at the Edge, Data Center and Cloud

The amount of data gathered from billions of sensors in the field means HPC needs to expand beyond traditional simulations to processing sensor data at the edge. For example, ECMWF, the European weather agency, generates over 250 TB of data per day. The best option for organizations dealing with massive datasets like these is to analyze the data in real time where it’s generated and to use the important data to build better models.

NGC allows you to easily and consistently deploy the same HPC and AI software stack at the edge, in the data center or in the cloud, whether on bare metal, in virtual machines or on Kubernetes.

Deploy NGC Software Today

Pull your application container from ngc.nvidia.com and run it in Singularity or Docker on any GPU-powered x86 or Arm system.
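As a rough sketch of what pulling and running looks like in practice, the Python snippet below assembles the usual command lines for both runtimes. The repository `nvidia/tensorflow`, the tag `19.11-tf1-py3` and the file name `tensorflow.sif` are illustrative assumptions, and the helper functions are hypothetical. Check each container's README for the exact image name, tag and any required NGC credentials.

```python
import shlex
from typing import List

NGC_REGISTRY = "nvcr.io"  # registry host that serves NGC container images


def image_ref(repository: str, tag: str) -> str:
    """Build a full image reference such as nvcr.io/nvidia/tensorflow:<tag>."""
    return f"{NGC_REGISTRY}/{repository}:{tag}"


def singularity_commands(repository: str, tag: str, sif_name: str) -> List[List[str]]:
    """Pull the image into a local SIF file, then run it with GPU support."""
    ref = image_ref(repository, tag)
    return [
        ["singularity", "pull", sif_name, f"docker://{ref}"],
        ["singularity", "run", "--nv", sif_name],  # --nv exposes NVIDIA GPUs
    ]


def docker_commands(repository: str, tag: str) -> List[List[str]]:
    """Pull the image, then run it interactively with all GPUs visible."""
    ref = image_ref(repository, tag)
    return [
        ["docker", "pull", ref],
        ["docker", "run", "--gpus", "all", "--rm", "-it", ref],
    ]


def as_shell(cmd: List[str]) -> str:
    """Quote a command list so it can be pasted into a shell."""
    return " ".join(shlex.quote(arg) for arg in cmd)


# Example values are assumptions; substitute the image you need.
commands = (singularity_commands("nvidia/tensorflow", "19.11-tf1-py3", "tensorflow.sif")
            + docker_commands("nvidia/tensorflow", "19.11-tf1-py3"))
for cmd in commands:
    print(as_shell(cmd))
```

The snippet only prints the commands rather than executing them, so it can be adapted to a job script or passed to `subprocess.run` on a system where Singularity or Docker is installed. The `--gpus` option assumes Docker 19.03 or later with the NVIDIA container runtime set up.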