In 1979, International Harvester memorably promoted the “Scout” (considered by many to be the forerunner of the modern-day SUV) with the slogan “Anything less is just a car.”

As the vehicle forded a muddy river, the ad campaign made clear that regular cars simply weren’t good enough for all driving requirements, but the rugged Scout brought the benefits of a truck to consumers.

For the many people now working with intense AI and data science workloads, mobile workstations with full GPU acceleration are the Scouts of computing — anything less is just a laptop.

Just as SUVs are built for the rigors of off-road driving, so too are mobile workstations for the extreme demands of data science and AI workloads. A full GPU-accelerated AI stack is needed, and large GPU memory is a prerequisite for crunching the large datasets that AI algorithms require.

All GPU-enabled laptops provide some level of acceleration for suitable workloads, but mobile data science workstations offer benefits beyond speed. And despite their beefy performance, some of these workstations are surprisingly lightweight.

Here are four primary reasons why mobile workstations are better for data science:

1. Freeing developers to be more productive

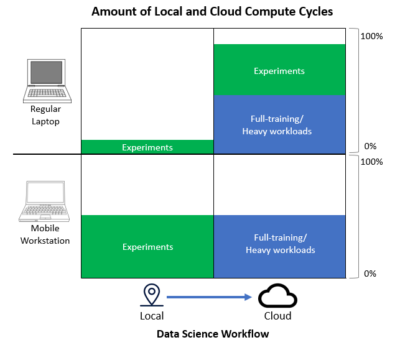

Developing on mobile workstations can strike a better balance between local and remote compute, giving developers greater freedom and productivity.

Freedom on a mobile workstation means the ability to try wild experiments and develop creatively from anywhere. Developers can try anything as often as desired, a flexibility that can translate into a better workstyle, a better lifestyle and greater productivity, with less stress.

The ability to experiment and iterate locally frees data scientists to use the cloud where it makes the most sense, such as for large datasets and training models. Additionally, developers are more productive when it’s easy to experiment with different algorithms, parameters and data without regard to cloud setup and costs. Other development tasks can also be more efficient and cost-effective, including reviewing and cleaning data, exploring data features, and evaluating, building and testing models.

Mobile workstations excel at interactive workloads, live-stream object detection and many NLP models while making exploratory programming much easier. Fine-tuning an NLP model (for example, automatic speech recognition, text-to-speech and optical character recognition) to evaluate locally can shorten experiment time. It’s also speedier for models that directly interact with the camera, microphone and display, such as when prototyping a voicebot.

Many data scientists are optimizing AI workloads on mobile workstations with quick multiple iterations and fast trial results. After optimizing, the larger dataset runs more efficiently on local server or cloud resources.

Productivity is further enhanced when mobile workstations are supported by a catalog of GPU-optimized software such as the NGC hub. At no cost, developers can download pretrained models, GPU-optimized AI, deep-learning frameworks and various industry-specific AI software development kits like NVIDIA Riva for natural language processing and NVIDIA Merlin for recommender systems.

2. Lower cost with faster results

While any laptop can send AI workloads to the cloud, costs there can add up quickly. Scheduling requirements for local or remote shared resources can delay results for data preparation and developing analytics models that use machine learning, deep learning and other intensive workloads.

Mobile data science workstations put the power of the cloud in your lap. Running cloud experiments locally eliminates a large percentage of cloud cost and often saves time. Intensive model training is the only significant cloud cost in mobile workstation development workflows.

Like the largely fixed costs of owning your own car vs. racking up the metered costs of ridesharing, mobile workstations make sense for many sizable data science workloads, including training models using machine learning and deep learning.

Beyond the consideration of opex, shared resources must often be scheduled, can get bottlenecked or are too inconvenient to set up and use. Breakeven costs and the cost of moving big data from edge to cloud must also be accounted for. The reality is that workloads that typically run on larger shared resources can make more sense when executed on a mobile GPU-enabled workstation.
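To make the breakeven idea concrete, here is a minimal Python sketch of the arithmetic. Every figure in it (the workstation price, the hourly cloud rate, the local running cost) is a hypothetical placeholder rather than real pricing, and the function name `breakeven_hours` is ours for illustration only. It simply divides a fixed purchase price by the hourly saving over metered cloud use.

```python
def breakeven_hours(workstation_price: float,
                    cloud_rate_per_hour: float,
                    local_rate_per_hour: float = 0.0) -> float:
    """Return the GPU hours after which owning beats renting.

    workstation_price    -- one-time purchase cost (hypothetical figure)
    cloud_rate_per_hour  -- metered cloud cost, including any data-transfer
                            charges you choose to fold in (hypothetical)
    local_rate_per_hour  -- running cost of the workstation, e.g. power
                            (hypothetical, defaults to zero)
    """
    saving_per_hour = cloud_rate_per_hour - local_rate_per_hour
    if saving_per_hour <= 0:
        # If the cloud is not more expensive per hour, owning never pays off.
        raise ValueError("cloud rate must exceed local running cost")
    return workstation_price / saving_per_hour


if __name__ == "__main__":
    # Placeholder numbers only: a 3,000 workstation vs. a 3.00/hour instance.
    hours = breakeven_hours(workstation_price=3000.0, cloud_rate_per_hour=3.0)
    print(f"Breakeven after {hours:.0f} GPU hours")
```

Plugging in your own quotes and expected weekly usage turns the car-versus-ridesharing comparison into a rough payback period. The sketch ignores depreciation, scheduling delays and staff time, all of which can shift the result in either direction.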

3. A consistent experience

Getting a consistent experience across mobile workstations, on-premises workstations and servers requires a single data science stack. Without it, the performance benefit of a powerful mobile workstation can be lost when the stack is transitioned to intermediate or production servers where the workload may ultimately run.

The hard work of developing an effective deep-learning algorithm on a mobile workstation may otherwise be offset with unplanned surprises when run on servers with a different software stack.
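One lightweight way to catch such surprises early is to compare the package versions on the workstation with those on the target server. The sketch below is an illustration under assumptions, not a description of any NVIDIA tooling: it assumes both environments can export a `pip freeze`-style list of `name==version` lines, and the helper names `parse_freeze` and `diff_stacks` are hypothetical.

```python
from typing import Dict, Optional, Tuple


def parse_freeze(text: str) -> Dict[str, str]:
    """Parse 'name==version' lines (pip freeze style) into a dict."""
    versions: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blanks, comments and entries that are not pinned with '=='.
        if not line or line.startswith("#") or "==" not in line:
            continue
        name, version = line.split("==", 1)
        versions[name.lower()] = version
    return versions


def diff_stacks(local: Dict[str, str],
                server: Dict[str, str]
                ) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return packages whose versions differ, as (local, server) pairs.

    A value of None means the package is missing on that side.
    """
    mismatches: Dict[str, Tuple[Optional[str], Optional[str]]] = {}
    for name in sorted(set(local) | set(server)):
        local_version = local.get(name)
        server_version = server.get(name)
        if local_version != server_version:
            mismatches[name] = (local_version, server_version)
    return mismatches


if __name__ == "__main__":
    # Made-up package lists purely for demonstration.
    workstation = parse_freeze("torch==2.1.0\nnumpy==1.26.0\n")
    production = parse_freeze("torch==2.0.1\nnumpy==1.26.0\npandas==2.1.0\n")
    for pkg, (mine, theirs) in diff_stacks(workstation, production).items():
        print(f"{pkg}: workstation={mine} server={theirs}")
```

An empty result suggests the Python-level stack matches. It says nothing about drivers, CUDA versions or container images, which would need their own checks.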

4. Broad software ecosystem to accelerate development

Like most laptops, mobile data science workstations can run popular Office productivity apps.

But software breadth for GPU-accelerated platforms can allow developers to use a pre-built software pipeline for specific tasks like computer vision, NLP and recommender systems and jump-start development efforts.

A wide variety of libraries ensures development advantages today while providing future flexibility. It’s equally important that broad software libraries are certified to run on desired GPUs.

As the de facto standard for data science workloads, NVIDIA GPUs have achieved the broadest software support in the industry. This includes a software ecosystem that provides users with a pre-built software pipeline for many specific tasks. It also includes low-level libraries like RAPIDS and CUDA for developers who want to build their own pipeline.

What’s Driving Your Data Science?

Businesses and data scientists need to ask themselves if their laptops are tuned for the challenges of data science and AI. They also need to consider the cost of not going mobile to accelerate their development as data-science-driven applications are increasingly pervasive.

NGC-Ready Data Science Mobile Workstations ship with a software stack that includes the most widely used AI frameworks and SDKs, like TensorFlow and PyTorch, to save environment setup time for developers. Learn how to take one for a test drive and measure the resulting gains in cost, productivity and freedom that developers can enjoy at https://www.nvidia.com/en-us/deep-learning-ai/solutions/data-science/workstations/.