To unlock real insights from data, AI and data science research can’t live on the outskirts of an institution — it has to become part of an organization’s core strategy.

The University of Texas MD Anderson Cancer Center, the top-ranked cancer hospital in the U.S., is doing just that, with a new focus on data governance and dozens of researchers pursuing AI-accelerated oncology projects to improve patient care.

“We are focusing on the data in context, ensuring we have a coordinated metadata supply chain to address the current challenges in making AI models translate to impact in the clinic,” said Dr. Caroline Chung, who was recently appointed MD Anderson’s first chief data officer. “To build better and more robust predictive models, we need a coordinated strategy that covers every step from data generation to the clinical use of machine learning insights.”

This data governance strategy will shape how hospital data is collected and used to generate insights, and will make the data findable, accessible, interoperable and reusable (the FAIR principles).

“It’s a big culture change,” said Chung. “The more data we can capture with contextual information, the more complex questions we can ask and the greater potential we have to use machine learning insights to help our clinicians improve their interactions with patients to guide the data-driven treatment decisions with the best patient outcomes aligned with the goals of care.”

By building a pipeline that collects the high-quality data researchers need, stores it securely and tracks how it’s being used, MD Anderson aims to better support projects that help clinicians analyze radiology data, deliver cancer treatment and predict complications like sepsis.

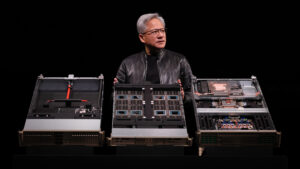

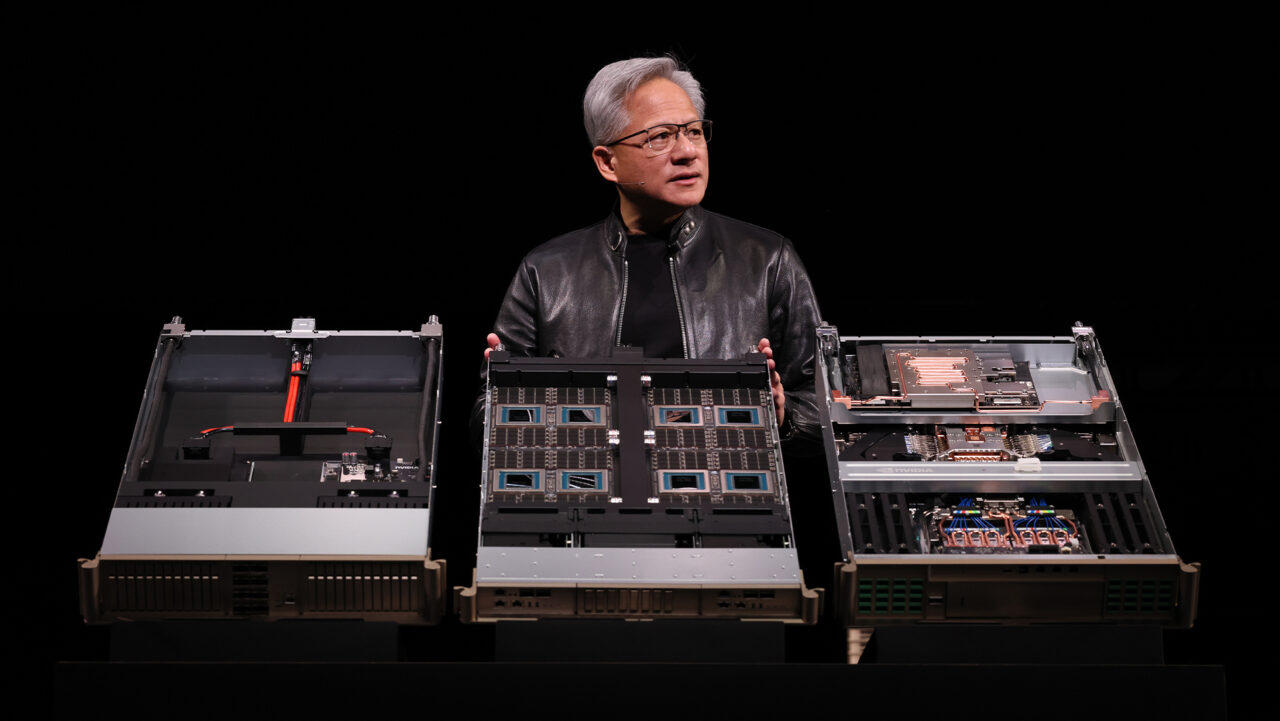

Many of these projects are already underway, accelerated by new GPU-powered technologies such as NVIDIA DGX systems. New investments coming online at MD Anderson will give researchers access to thousands of additional GPU cores to support AI projects across the institution.

Applying AI to Diagnostic Imaging

The first step in oncology is detecting tumors — the earlier the better. MD Anderson is developing early detection AI applications to help diagnose patients with pancreatic cancer, which has a five-year survival rate of just 10 percent.

“Pancreatic cancer is often diagnosed after it’s already metastasized, meaning it’s spread to other organs,” said Dr. Eugene Koay, co-director of Gastrointestinal Radiation Oncology at MD Anderson. “We’re working on AI models to analyze the pancreas anytime we see it in a CT scan, MRI study or endoscopic ultrasound, whether or not the patient’s appointment is related to the pancreas.”

Not all pancreatic tumors are the same. Some are slow moving, others are aggressive. Some originate from cysts in the pancreas, others don’t.

In collaboration with the Early Detection Research Network, Koay and his team are working on convolutional neural networks that identify which cases are most likely to develop into malignant cancer, so clinicians can better support patients at risk.

Imaging Insights Inform Treatment Planning

When preparing for radiation therapy to treat cancerous cells, oncologists rely on a process known as contouring to trace the tumors that will be targeted by radiation treatment.

It’s a time-consuming process, and oncologists often have a backlog of radiotherapy treatment plans to create for patients. Dr. Laurence Court, associate professor of Radiation Physics at MD Anderson, hopes to reduce the burden of manual contouring with AI tools, enabling hospitals to treat thousands more cancer patients each year.

He’s especially interested in the impact these AI clinical tools could have in low-resource settings, where a shortage of radiologists and oncologists makes it harder to access lifesaving radiotherapy treatments.

Contouring is also used to plan for MRI-assisted radiosurgery, an advanced form of brachytherapy in which a radiation dose is delivered to cancerous tissue through implanted seeds. MD Anderson radiation oncologist Dr. Steven Frank uses this therapy to treat prostate cancer.

Precise contouring of the prostate and surrounding organs on MRI ensures that radioactive seeds are delivered to the right areas to treat the cancer without harming neighboring tissues.

By adopting an AI model that uses advances in GPU technologies, MD Anderson oncologists have improved the quality of contours for brachytherapy treatment planning and treatment quality assessment, said Dr. Jeremiah Sanders, a medical imaging physics fellow at MD Anderson who’s developing translational AI in Frank’s lab.
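To make "quality of contours" concrete, here is a minimal sketch of one widely used overlap measure, the Dice similarity coefficient, which compares a model-generated contour with a reference contour. The article does not say which metric the MD Anderson team uses, so the choice of Dice, the function name `dice_coefficient` and the toy masks are all assumptions for illustration only.

```python
def dice_coefficient(mask_a, mask_b):
    """Dice similarity between two binary masks given as flat sequences of 0/1.

    Illustrative only: real contours are 3D voxel volumes, and the metric
    used in the work described above is not stated in the article.
    """
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must have the same number of voxels")
    # Voxels that both contours mark as part of the structure.
    overlap = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    # Total voxels marked in each contour, added together.
    total = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    if total == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return 2.0 * overlap / total


# Hypothetical toy masks: 1 = voxel inside the contour, 0 = outside.
ai_contour = [0, 1, 1, 1, 0, 0]
expert_contour = [0, 0, 1, 1, 1, 0]
score = dice_coefficient(ai_contour, expert_contour)
```

A score of 1.0 means the two contours match voxel for voxel, and 0.0 means they do not overlap at all.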

Sanders and Frank are also working on a model for use after a brachytherapy procedure — an AI application that analyzes MRI studies of the prostate to determine the quality of the radiation delivery. Insights from this model can help clinicians determine if additional treatment is needed and how to manage patients after their treatments.

Keeping a Watchful AI on Model Accuracy

For an AI model to succeed in a clinical setting, medical researchers need to catch the cases where the neural network struggles and retrain it to improve the application’s performance.

Dr. Kristy Brock, professor of Imaging Physics and Radiation Physics at MD Anderson, is working on an anomaly detection project to identify the cases where an AI model that contours liver tumors from CT scans fails, such as unusual images where a patient has a stent in the liver or fluid around the organ.

By identifying these rare failures, researchers can introduce additional training examples that are similar to cases the neural network previously stumbled on. This continuous training method selectively bolsters training data to improve model performance more efficiently.

“We don’t want to keep collecting data that looks the same as our first 150 scans,” Brock said. “We want to identify cases that will increase the variability of our sample dataset, which in turn boosts the model’s accuracy and generalizability.”
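The selection idea can be sketched in a few lines: score each case, flag the ones where the model did poorly, and prioritize similar cases for the next round of training data. This is not Brock's actual method. It assumes a reference contour exists for each scan so a per-case overlap score can be computed, and the `CaseResult` type, the `select_for_retraining` helper, the case IDs and the 0.7 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CaseResult:
    case_id: str
    dice: float  # assumed overlap score between model and reference contour


def select_for_retraining(results, threshold=0.7):
    """Return cases scoring below the (hypothetical) threshold, worst first."""
    failures = [r for r in results if r.dice < threshold]
    return sorted(failures, key=lambda r: r.dice)


# Made-up scores for illustration; not data from the article.
results = [
    CaseResult("scan-001", 0.91),
    CaseResult("scan-002", 0.42),
    CaseResult("scan-003", 0.88),
    CaseResult("scan-004", 0.65),
]
flagged = select_for_retraining(results)
flagged_ids = [r.case_id for r in flagged]
```

Only the flagged cases guide what new data to collect, which is the point of the approach: effort goes toward the unusual scans rather than more examples the model already handles well.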

MD Anderson is one of several leading healthcare institutions adopting AI to improve medical research and patient care. Learn more about AI in healthcare at NVIDIA GTC, running online through Nov. 11.

Tune in to a healthcare special address by Kimberly Powell, NVIDIA’s VP of healthcare, on Nov. 9 at 10:30am Pacific.