The next time you’re in a virtual meeting or streaming a game, live event or TV program, the star of the show may be NVIDIA Maxine, which took center stage at GTC today when NVIDIA CEO Jensen Huang announced the availability of the GPU-accelerated software development kit during his keynote address.

Developers from video conferencing, content creation and streaming providers are using the Maxine SDK to create real-time video-based experiences. It’s easily deployed on PCs, in data centers or in the cloud.

Shift Towards Remote Work

Virtual collaboration continues to grow with 70 million hours of web meetings daily, and more global organizations are looking at technologies to support an increasingly remote workforce.

Pexip, a scalable video conferencing platform that enables interoperability between different video conferencing systems, was looking to push the boundaries of its video communications offering to meet this growing demand.

“We’re already using NVIDIA Maxine for audio noise removal and working on integrating virtual backgrounds to support premium video conferencing experiences for enterprises of all sizes,” said Giles Chamberlin, CTO and co-founder of Pexip.

Working with NVIDIA, Pexip aims to provide AI-powered video communications that make virtual meetings better than meeting in person.

It joins other companies in the video collaboration space like Avaya, which incorporated Maxine audio noise reduction into its Spaces app last October and has now implemented virtual backgrounds, which allow presenters to overlay their video on presentations.

Headroom uses AI to take distractions out of video conferencing, so participants can focus on interacting during meetings. Its features include flagging when people have questions, note-taking, transcription and smart meeting summarization.

Seeing Face Value for Virtual Events

Research has shown that there are over 1 million virtual events yearly, with more event marketers planning to invest in them in the future. As a result, everyone from event organizers to visual effects artists is looking for faster, more efficient ways to create digital experiences.

Among them is Touchcast, which combines AI and mixed reality to reimagine virtual events. It’s using Maxine’s super-resolution features to upscale 1080p streams to 4K for delivery.

“NVIDIA Maxine is paving the future of video communications — a future where AI and neural networks enhance and enrich content in entirely new ways,” said Edo Segal, founder and CEO of Touchcast.

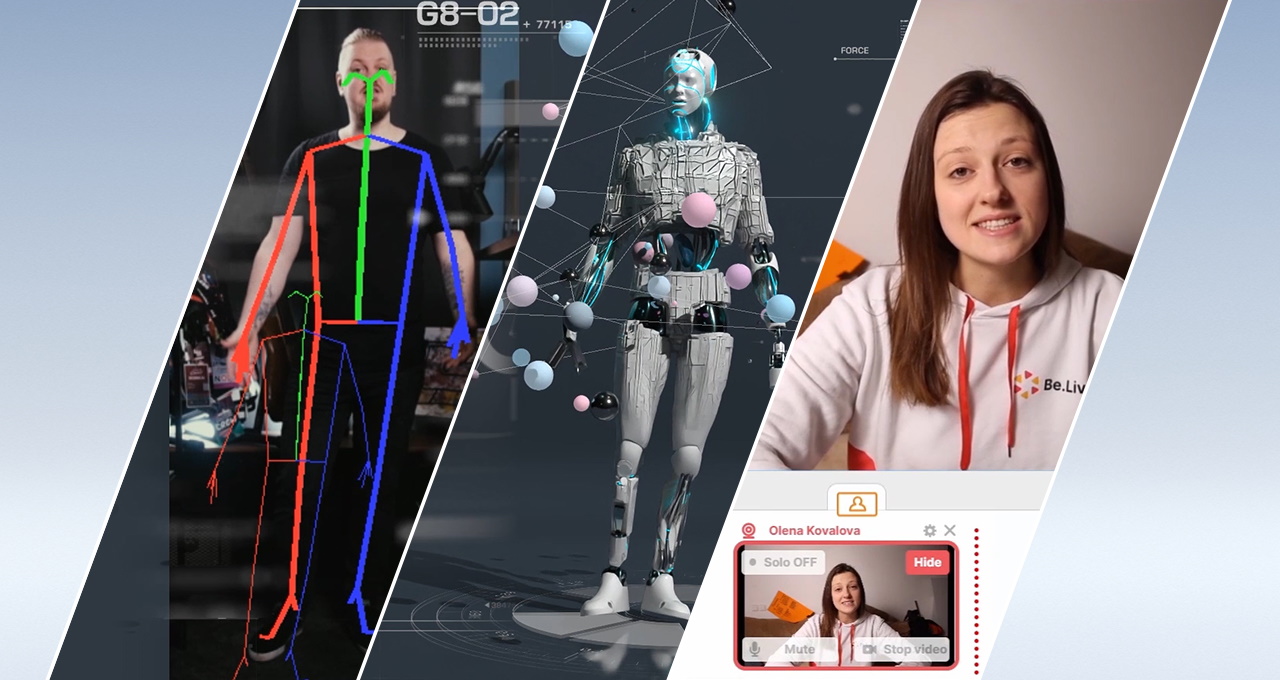

Another example is Notch, which creates tools that enable real-time visual effects and motion graphics for live events. Maxine provides it with real-time, AI-driven face and body tracking along with background removal.

Artists can track and mask performers in a live performance setting for a variety of creative use cases — all using a standard camera feed and eliminating the challenges of special hardware-tracking solutions.

“The integration of the Maxine SDK was very easy and took just a few days to complete,” said Matt Swoboda, founder and director of Notch.

Field of Streams

With nearly 10 million content creators on Twitch per month, becoming a live broadcaster has never been easier. Live streamers are looking for powerful yet easy-to-use features to excite their audiences.

BeLive, which provides a platform for live streaming user-generated talk shows, is using Maxine to process its video streams in the cloud so customers don’t have to invest in expensive equipment. Because Maxine runs in the cloud, users benefit from high-quality background replacement regardless of the client hardware they’re running.

With BeLive, live interactive call-in talk shows can be produced easily and streamed to YouTube or Facebook Live, with participants calling in from around the world.

OBS, the leading platform for streaming and recording, is a free and open source software solution broadly used for game streaming and live production. Users with NVIDIA RTX GPUs can now take advantage of noise removal, improving the clarity of their audio during production.

A Look Into NVIDIA Maxine

NVIDIA Maxine includes three AI SDKs covering video effects, audio effects and augmented reality — each with pre-trained deep learning models, so developers can quickly build or enhance their real-time applications.

Starting with the NVIDIA Video Effects SDK, enterprises can now apply AI effects to improve video quality without special cameras or other hardware. Features include super-resolution, which generates 720p live video output from 360p input, and artifact reduction, which removes defects for crisper pictures.
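
To put the super-resolution figures in perspective, here is a minimal sketch of the arithmetic behind them. It is not Maxine code and calls no NVIDIA API. It assumes the standard 16:9 frame sizes usually meant by these labels (for example, 640x360 for 360p and 3840x2160 for 4K), and the helper name `upscale_factors` is purely illustrative.

```python
# Assumed 16:9 frame sizes for the resolution labels used in this article.
RESOLUTIONS = {
    "360p": (640, 360),
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def upscale_factors(src, dst):
    """Return (scale per axis, pixel-count multiplier) going from src to dst."""
    src_w, src_h = RESOLUTIONS[src]
    dst_w, dst_h = RESOLUTIONS[dst]
    linear = dst_h / src_h
    pixels = (dst_w * dst_h) / (src_w * src_h)
    return linear, pixels


# The two upscaling cases mentioned in the article.
for src, dst in [("360p", "720p"), ("1080p", "4K")]:
    linear, pixels = upscale_factors(src, dst)
    print(f"{src} -> {dst}: {linear:g}x per axis, {pixels:g}x the pixels")
```

Under those assumptions, both the 360p-to-720p and the 1080p-to-4K cases double each dimension, so the output frame holds four times as many pixels as the input.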

Video noise removal eliminates low-light camera noise introduced in the video capture process while preserving all of the details. To hide messy rooms or other visual distractions, the Video Effects SDK removes the background of a webcam feed in real time, so only a user’s face and body show up in a livestream.

The NVIDIA Augmented Reality SDK enables real-time 3D face tracking using a standard web camera, delivering a more engaging virtual communication experience by automatically zooming into the face and keeping that face within view of the camera.

It’s now possible to detect human faces in images or video feeds, track the movement of facial expressions, create a 3D mesh representation of a person’s face, use video to track the movement of a human body in 3D space, simulate eye contact through gaze estimation and much more.

The NVIDIA Audio Effects SDK uses AI to remove distracting background noise from incoming and outgoing audio feeds, improving the clarity and quality of any conversation.

This includes the removal of unwanted background noises — like a dog barking or baby crying — to make conversations easier to understand. For meetings in large spaces, it’s also possible to remove room echoes from the background to make voices clearer.

Developers can add Maxine AI effects into their existing applications or develop new pipelines from scratch using NVIDIA DeepStream, an SDK for building intelligent video analytics, and NVIDIA Video Codec, an SDK for accelerated video encode and decode on Windows and Linux.

Maxine can also be used with NVIDIA Riva, a framework for building conversational AI applications, to offer world-class language-based capabilities such as transcription and translation.

Availability

Get started with NVIDIA Maxine.

And don’t let the curtain close on the opportunity to learn more about NVIDIA Maxine during GTC, running April 12-16. Registration is free.

A full list of Maxine-focused sessions can be found here. Be sure to watch Huang’s keynote address on-demand. And check out a demo (below) of Maxine.