Data centers need extremely fast storage access, and no DPU is faster than NVIDIA’s BlueField-2.

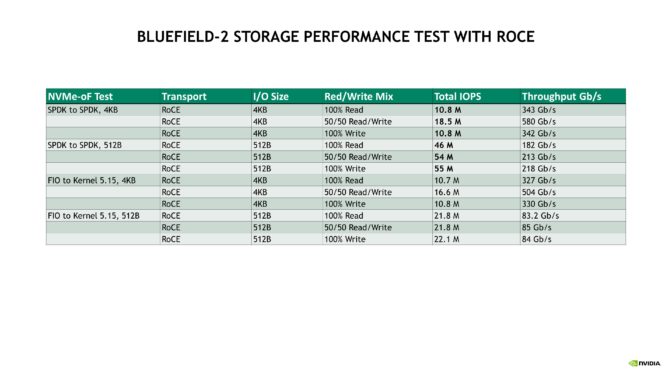

Recent testing by NVIDIA shows that two BlueField-2 data processing units reached 41.5 million input/output operations per second (IOPS), more than 4x the IOPS of any other DPU.

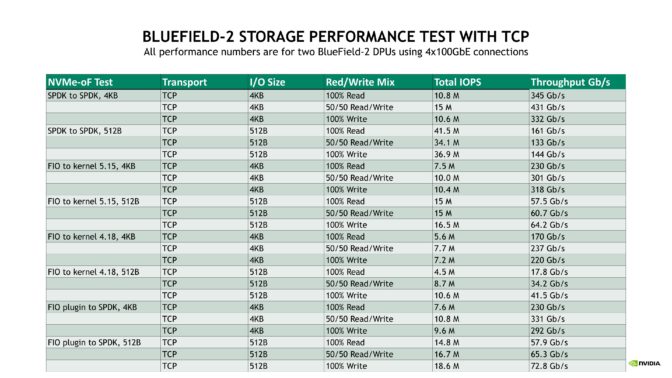

The BlueField-2 DPU delivered record-breaking performance using standard networking protocols and open-source software. It reached more than 5 million 4KB IOPS and from 7 million to over 20 million 512B IOPS for NVMe over Fabrics (NVMe-oF), a common method of accessing storage media, with TCP networking, one of the primary internet protocols.
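To put the TCP figures in bandwidth terms, the short sketch below converts an IOPS number and an I/O size into payload throughput. It assumes 4KB means 4,096 bytes, ignores all protocol headers, and treats the "more than" figures as lower bounds; the function and variable names are illustrative only.

```python
# Convert an IOPS figure and an I/O size into payload throughput.
# Assumptions: 4KB = 4096 bytes; NVMe-oF, TCP and Ethernet overhead is ignored.

def payload_gbps(iops: float, io_size_bytes: int) -> float:
    """Return payload throughput in gigabits per second (10^9 bits/s)."""
    return iops * io_size_bytes * 8 / 1e9


# NVMe-oF over TCP figures quoted in the text (lower bounds where it says "more than").
tcp_results = {
    "4KB at 5M IOPS": (5e6, 4096),
    "512B at 7M IOPS": (7e6, 512),
    "512B at 20M IOPS": (20e6, 512),
}

for label, (iops, size) in tcp_results.items():
    print(f"{label}: {payload_gbps(iops, size):.1f} Gb/s of payload")
```

Under these assumptions, 5 million 4KB IOPS moves roughly 164 Gb/s of payload, while 20 million 512B IOPS moves roughly 82 Gb/s.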

To accelerate AI, big data and high performance computing applications, BlueField provides even higher storage performance using the popular RoCE network transport option.

In testing, BlueField supercharged performance as both an initiator and target, using different types of storage software libraries and different workloads to simulate real-world storage configurations. BlueField also supports fast storage connectivity over InfiniBand, the preferred networking architecture for many HPC and AI applications.

Testing Methodology

The 41.5 million IOPS reached by BlueField is more than 4x the previous world record of 10 million IOPS, set using proprietary storage offerings. This performance was achieved by connecting two fast Hewlett Packard Enterprise ProLiant DL380 Gen10 Plus servers, one as the application server (storage initiator) and one as the storage system (storage target).

Each server had two Intel “Ice Lake” Xeon Platinum 8380 CPUs clocked at 2.3GHz, giving 80 physical cores (160 hardware threads with Hyper-Threading) per server, along with 512GB of DRAM, 120MB of L3 cache (60MB per socket) and a PCIe Gen4 bus.

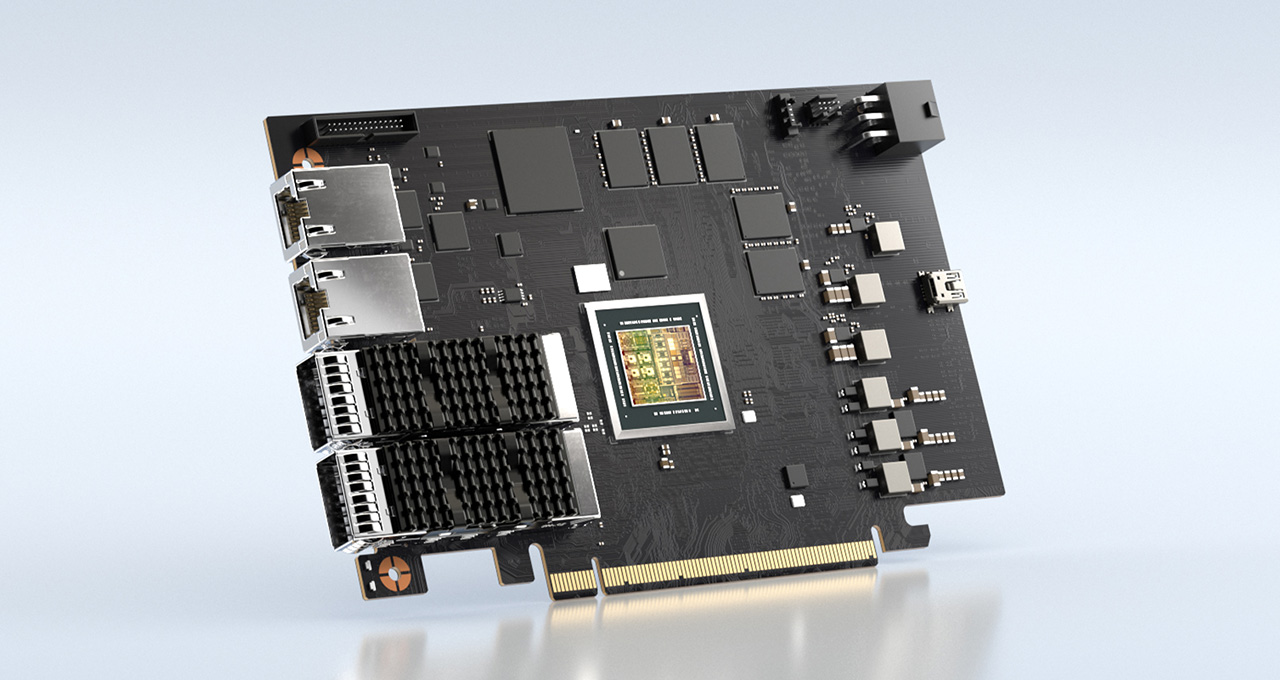

To accelerate networking and NVMe-oF, each server was configured with two NVIDIA BlueField-2 P-series DPU cards, each with two 100Gb Ethernet network ports. This gave four network ports and 400Gb/s of wire bandwidth between initiator and target, connected back-to-back using NVIDIA LinkX 100GbE Direct-Attach Copper (DAC) passive cables. Both servers ran Red Hat Enterprise Linux (RHEL) version 8.3.
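The per-server totals quoted above follow from simple multiplication, which the sketch below makes explicit. The class and field names are hypothetical, and the 40-cores-per-socket value is an assumption taken from Intel's published specification for the Xeon Platinum 8380 rather than from this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerConfig:
    """Hypothetical summary of one test server; not an NVIDIA or HPE data structure."""
    sockets: int = 2
    cores_per_socket: int = 40   # assumption: Intel's 8380 spec, not stated in the text
    threads_per_core: int = 2    # Hyper-Threading enabled
    l3_mb_per_socket: int = 60
    dpus: int = 2
    ports_per_dpu: int = 2
    port_gbps: int = 100

    @property
    def hardware_threads(self) -> int:
        return self.sockets * self.cores_per_socket * self.threads_per_core

    @property
    def l3_mb(self) -> int:
        return self.sockets * self.l3_mb_per_socket

    @property
    def network_ports(self) -> int:
        return self.dpus * self.ports_per_dpu

    @property
    def wire_gbps(self) -> int:
        return self.network_ports * self.port_gbps


server = ServerConfig()
print(server.hardware_threads, server.l3_mb, server.network_ports, server.wire_gbps)
# prints: 160 120 4 400
```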

For the storage system software, both SPDK and the standard upstream Linux kernel target were tested, using both the default 4.18 kernel and one of the newest kernels, 5.15. Three different storage initiators were benchmarked: SPDK, the standard kernel storage initiator, and the FIO plugin for SPDK. Workload generation and measurements were run with FIO and SPDK. Tests used 4KB and 512B I/O sizes, which are common medium and small storage I/O sizes, respectively.
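As a rough illustration of how FIO can drive the two I/O sizes, the sketch below renders a minimal FIO job file as text. It is not the job file NVIDIA used. The device path, queue depth, job count, runtime and the choice of random reads are all placeholder assumptions, and `libaio` applies only to a kernel initiator that exposes the remote namespace as a block device; the FIO plugin for SPDK uses a different I/O engine.

```python
def fio_job(name: str, device: str, block_size: str, rw: str = "randread",
            iodepth: int = 32, numjobs: int = 8, runtime_s: int = 60) -> str:
    """Render a fio job file as text. All values are illustrative placeholders."""
    lines = [
        "[global]",
        "ioengine=libaio",        # Linux native asynchronous I/O
        "direct=1",               # bypass the page cache
        "time_based=1",
        f"runtime={runtime_s}",
        "group_reporting=1",      # report totals across all jobs
        "",
        f"[{name}]",
        f"filename={device}",     # hypothetical NVMe-oF block device
        f"rw={rw}",
        f"bs={block_size}",
        f"iodepth={iodepth}",
        f"numjobs={numjobs}",
    ]
    return "\n".join(lines) + "\n"


# One job file per I/O size mentioned in the text.
for bs in ("4k", "512"):
    print(fio_job(f"nvmeof-{bs}", "/dev/nvme0n1", bs))
```

Each rendered job could be saved to a file and passed to `fio` on the initiator; tuning `iodepth` and `numjobs` to the hardware is left open here.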

The NVMe-oF storage protocol was tested with both TCP and RoCE at the network transport layer. Each configuration was tested with 100 percent read, 100 percent write and 50/50 read/write workloads with full bidirectional network utilization.
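The parameters described above form a test matrix, which can be enumerated as in the sketch below. The list names are hypothetical, and the full cross product is only an upper bound: the text does not say that every combination was run.

```python
from itertools import product

# Dimensions taken from the methodology text; the names are illustrative.
target_software = ["SPDK", "Linux kernel target"]
kernels = ["4.18", "5.15"]
initiators = ["SPDK", "Linux kernel initiator", "FIO plugin for SPDK"]
transports = ["TCP", "RoCE"]
io_sizes = ["4KB", "512B"]
workloads = ["100% read", "100% write", "50/50 read/write"]

matrix = list(product(target_software, kernels, initiators,
                      transports, io_sizes, workloads))

print(len(matrix))  # 2 * 2 * 3 * 2 * 2 * 3 = 144 possible combinations

# Example filter: every RoCE configuration with 512B I/O.
roce_512 = [cfg for cfg in matrix if cfg[3] == "RoCE" and cfg[4] == "512B"]
print(len(roce_512))  # 2 * 2 * 3 * 3 = 36
```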

Our testing also revealed the following performance characteristics of the BlueField DPU:

- Testing with smaller 512B I/O sizes resulted in higher IOPS but lower-than-line-rate throughput, while 4KB I/O sizes resulted in higher throughput but lower IOPS numbers.

- 100 percent read and 100 percent write workloads provided similar IOPS and throughput, while 50/50 mixed read/write workloads produced higher performance by using both directions of the network connection simultaneously.

- Using SPDK resulted in higher performance than kernel-space software, but at the cost of higher server CPU utilization, which is expected behavior, since SPDK runs in user space with constant polling.

- The newer Linux 5.15 kernel performed better than the 4.18 kernel due to storage improvements added regularly by the Linux community.
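The first two observations can be checked against the 400Gb/s link with a little arithmetic. The sketch below estimates the IOPS ceiling that the wire imposes for each I/O size. It assumes the 400Gb/s is available in each direction (full duplex), counts payload bytes only, and models a 50/50 mix as using both directions at once; the function name is illustrative.

```python
LINK_GBPS = 400  # aggregate wire bandwidth from the test setup, assumed per direction


def max_iops_at_line_rate(link_gbps: float, io_size_bytes: int,
                          directions: int = 1) -> float:
    """Upper bound on IOPS if the link were filled with payload only."""
    bits_per_io = io_size_bytes * 8
    return directions * link_gbps * 1e9 / bits_per_io


for label, size in (("4KB", 4096), ("512B", 512)):
    one_way = max_iops_at_line_rate(LINK_GBPS, size)
    mixed = max_iops_at_line_rate(LINK_GBPS, size, directions=2)
    print(f"{label}: {one_way / 1e6:.1f}M IOPS one direction, "
          f"{mixed / 1e6:.1f}M IOPS with both directions in use")
```

Under these assumptions the wire allows about 12.2 million 4KB IOPS per direction but about 97.7 million 512B IOPS, so small I/Os hit per-operation processing limits long before they fill the link, while a mixed workload can in principle double the ceiling by carrying reads and writes in opposite directions.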

Record-Setting DPU Performance Enables Fast Storage With Security

In today’s storage landscape, the vast majority of cloud and enterprise deployments require fast, distributed and networked flash storage, accessed over Ethernet or InfiniBand. Faster servers, GPUs, networks and storage media all put pressure on server CPUs, and the best way to help those CPUs keep up is to deploy storage-capable DPUs.

The incredible storage performance demonstrated by the BlueField-2 DPU enables higher performance and better efficiency across the data center for both application servers and storage appliances.

On top of fast storage access, BlueField also supports hardware-accelerated encryption and decryption of both Ethernet storage traffic and the storage media itself, helping protect against data theft or exfiltration.

It offloads IPsec at up to 100Gb/s (data on the wire) and 256-bit AES-XTS at up to 200Gb/s (data at rest), reducing the risk of data theft if an adversary has tapped the storage network or if the physical storage drives are stolen, sold or disposed of improperly.

Customers and leading security software vendors are using BlueField’s recently updated NVIDIA DOCA framework to run cybersecurity applications, such as a distributed firewall or security groups with micro-segmentation, on the DPU. This further improves application and network security for compute servers, which reduces the risk of inappropriate access or data modifications on the storage attached to those servers.

View more detailed results from the NVIDIA BlueField-2 DPU tests:

Learn More About NVIDIA Networking Acceleration

Learn more about NVIDIA DPUs, DOCA, RoCE, and how DPUs and DOCA enable accelerated networking and zero-trust security with these links:

- NVIDIA BlueField DPU information

- NVIDIA DOCA DPU development platform

- NVIDIA Developer Blog: NVIDIA improves the scalability of Zero-Touch RoCE, making it easier for more customers to enjoy the higher storage performance from RoCE

- NVIDIA Blog: NVIDIA Enables Future of Zero-Trust Enterprise Security with DPUs, DOCA and security ISV partnerships

- NVIDIA Blog: What Is Zero Trust?