Prefatory Note: Over the past six weeks, we took NVIDIA’s developer conference on a world tour. The GPU Technology Conference (GTC) was started in 2009 to foster a new approach to high performance computing using massively parallel processing GPUs. GTC has become the epicenter of GPU deep learning — the new computing model that sparked the big bang of modern AI. It’s no secret that AI is spreading like wildfire. The number of GPU deep learning developers has leapt 25 times in just two years. Some 1,500 AI startups have cropped up. This explosive growth has fueled demand for GTCs all over the world. So far, we’ve held events in Beijing, Taipei, Amsterdam, Tokyo, Seoul and Melbourne. Washington is set for this week and Mumbai next month. I kicked off four of the GTCs. Here’s a summary of what I talked about, what I learned and what I see in the near future as AI, the next wave in computing, revolutionizes one industry after another.

A New Era of Computing

Intelligent machines powered by AI computers that can learn, reason and interact with people are no longer science fiction. Today, a self-driving car powered by AI can meander through a country road at night and find its way. An AI-powered robot can learn motor skills through trial and error. This is truly an extraordinary time. In my three decades in the computer industry, no period has held more potential, or been more fun. The era of AI has begun.

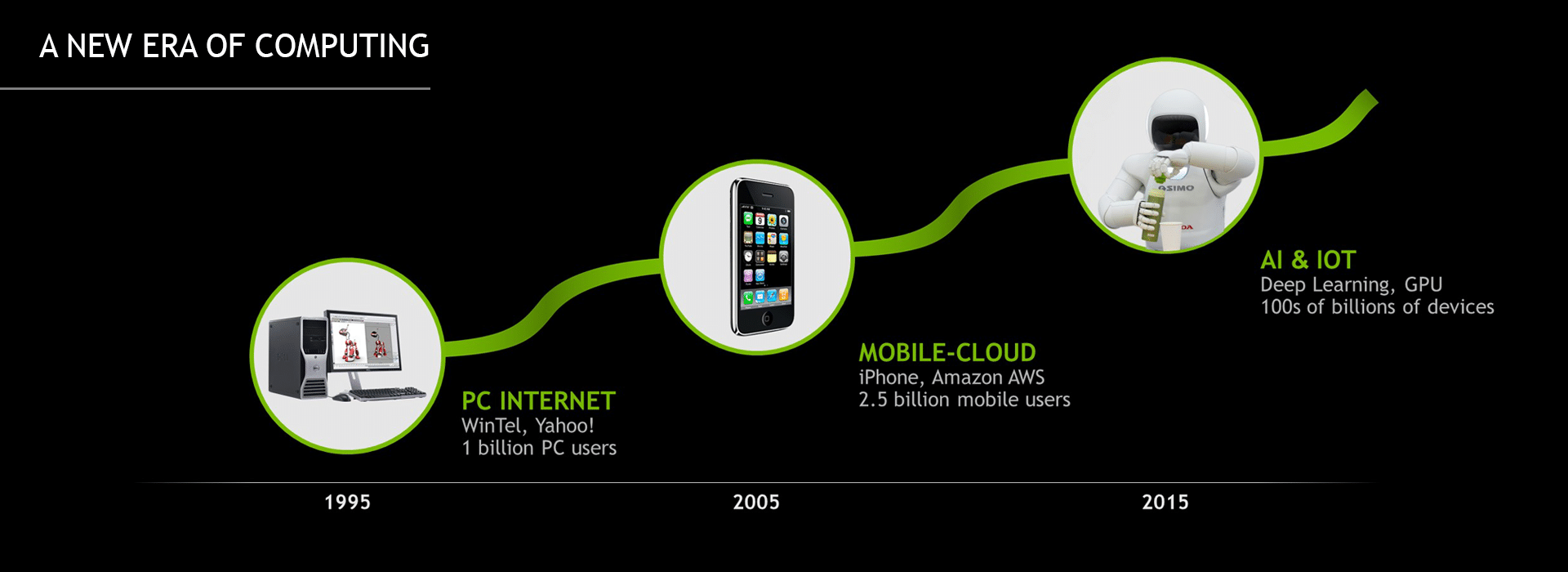

Our industry drives large-scale industrial and societal change. As computing evolves, new companies form, new products are built, our lives change. Looking back at the past couple of waves of computing, each was underpinned by a revolutionary computing model, a new architecture that expanded both the capabilities and reach of computing.

In 1995, the PC-Internet era was sparked by the convergence of low-cost microprocessors (CPUs), a standard operating system (Windows 95), and a new portal to a world of information (Yahoo!). The PC-Internet era brought the power of computing to about a billion people and realized Microsoft’s vision to put “a computer on every desk and in every home.” A decade later, the iPhone put “an Internet communications” device in our pockets. Coupled with the launch of Amazon’s AWS, the Mobile-Cloud era was born. A world of apps entered our daily lives and some 3 billion people enjoyed the freedom that mobile computing afforded.

Today, we stand at the beginning of the next era, the AI computing era, ignited by a new computing model, GPU deep learning. This new model — where deep neural networks are trained to recognize patterns from massive amounts of data — has proven to be “unreasonably” effective at solving some of the most complex problems in computer science. In this era, software writes itself and machines learn. Soon, hundreds of billions of devices will be infused with intelligence. AI will revolutionize every industry.

GPU Deep Learning “Big Bang”

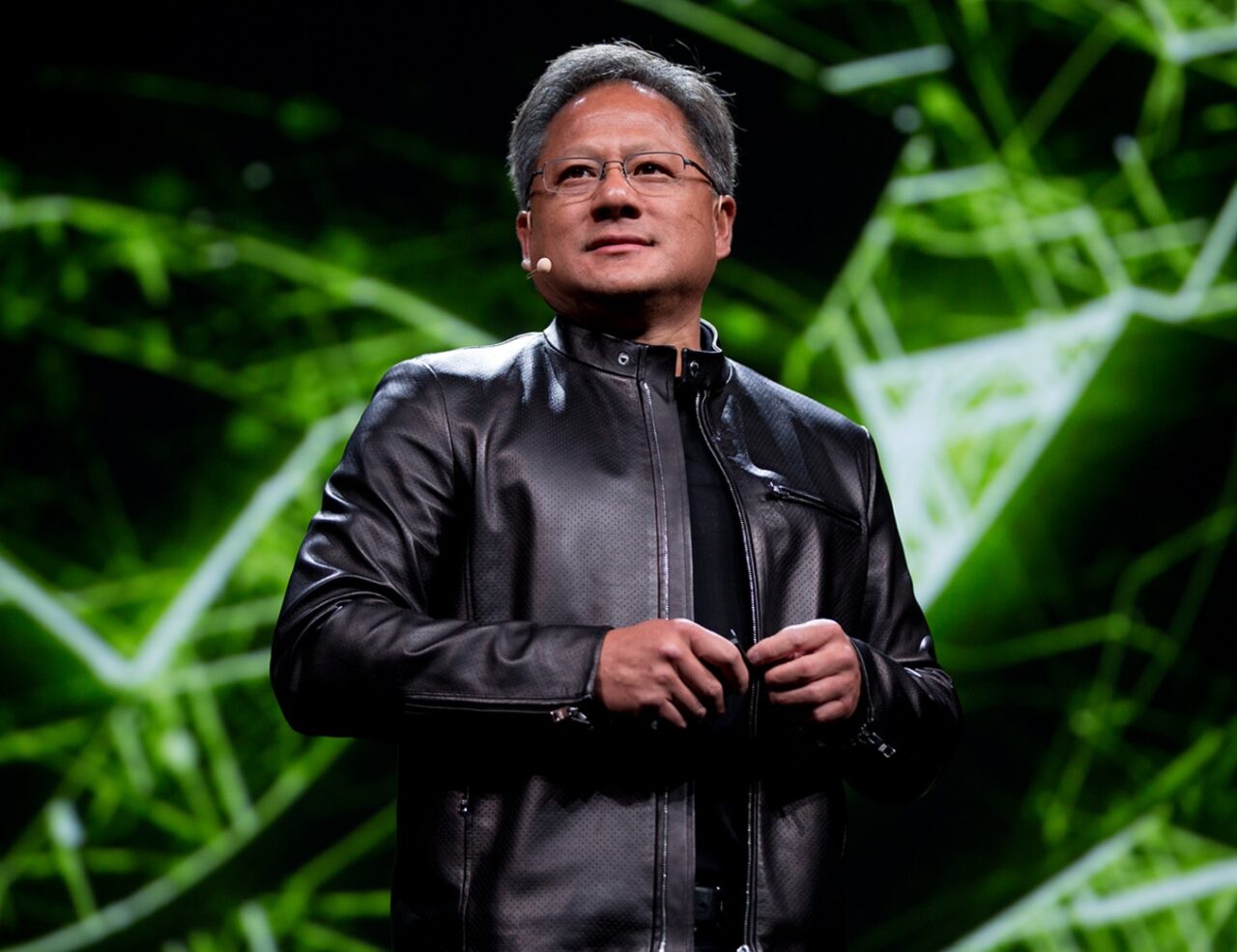

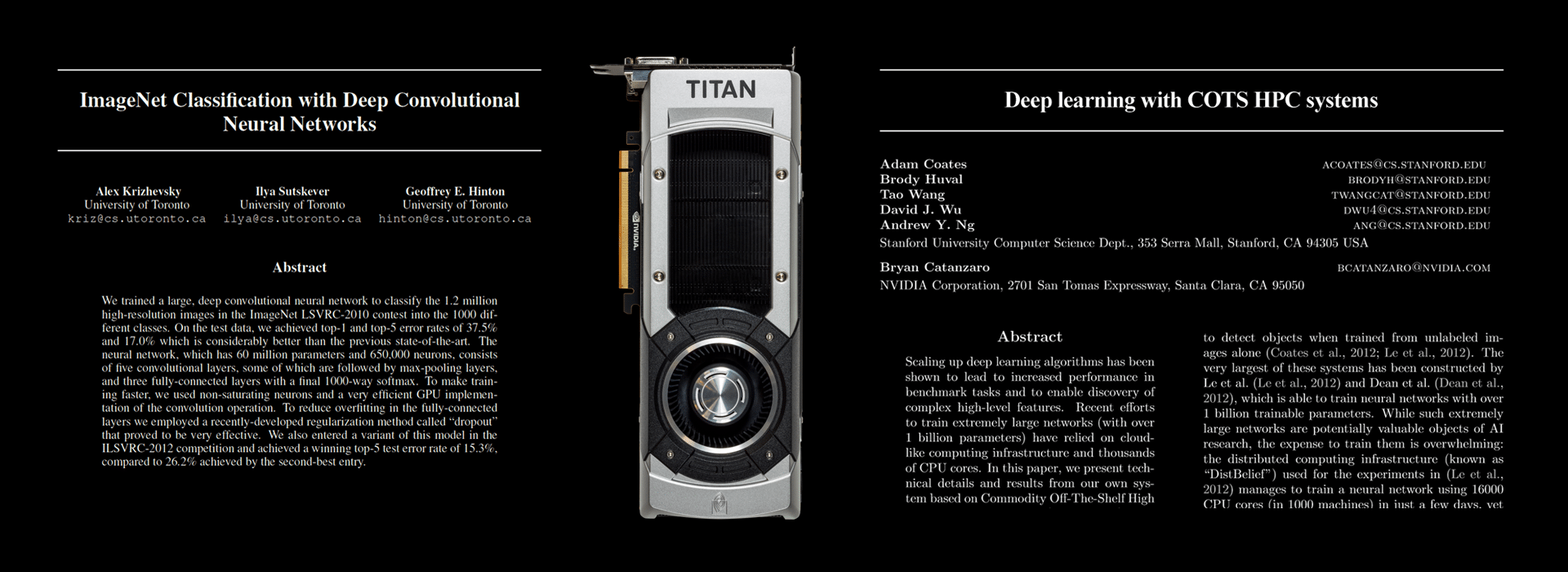

Why now? As I wrote in an earlier post (“Accelerating AI with GPUs: A New Computing Model”), 2012 was a landmark year for AI. Alex Krizhevsky of the University of Toronto created a deep neural network that automatically learned to recognize images from 1 million examples. With just several days of training on two NVIDIA GTX 580 GPUs, “AlexNet” won that year’s ImageNet competition, beating all the human expert algorithms that had been honed for decades. That same year, recognizing that the larger the network, or the bigger the brain, the more it can learn, Stanford’s Andrew Ng and NVIDIA Research teamed up to develop a method for training networks using large-scale GPU-computing systems.

The world took notice. AI researchers everywhere turned to GPU deep learning. Baidu, Google, Facebook and Microsoft were the first companies to adopt it for pattern recognition. By 2015, they started to achieve “superhuman” results — a computer can now recognize images better than we can. In the area of speech recognition, Microsoft Research used GPU deep learning to achieve a historic milestone by reaching “human parity” in conversational speech.

Image recognition and speech recognition — GPU deep learning has provided the foundation for machines to learn, perceive, reason and solve problems. The GPU started out as the engine for simulating human imagination, conjuring up the amazing virtual worlds of video games and Hollywood films. Now, NVIDIA’s GPU runs deep learning algorithms, simulating human intelligence, and acts as the brain of computers, robots and self-driving cars that can perceive and understand the world. Just as human imagination and intelligence are linked, computer graphics and artificial intelligence come together in our architecture. Two modes of the human brain, two modes of the GPU. This may explain why NVIDIA GPUs are used broadly for deep learning, and NVIDIA is increasingly known as “the AI computing company.”

An End-to-End Platform for a New Computing Model

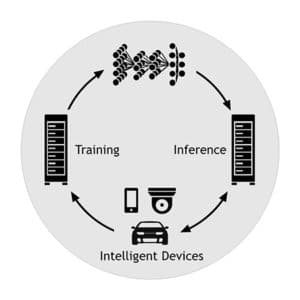

As a new computing model, GPU deep learning is changing how software is developed and how it runs. In the past, software engineers crafted programs and meticulously coded algorithms. Now, algorithms learn from tons of real-world examples — software writes itself. Programming is about coding instruction. Deep learning is about creating and training neural networks. The network can then be deployed in a data center to infer, predict and classify from new data presented to it. Networks can also be deployed into intelligent devices like cameras, cars and robots to understand the world. With new experiences, new data is collected to further train and refine the neural network. Learnings from billions of devices make all the devices on the network more intelligent. Neural networks will reap the benefits of both the exponential advance of GPU processing and large network effects — that is, they will get smarter at a pace way faster than Moore’s Law.
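
To make the contrast between coding instructions and training a network concrete, here is a minimal sketch in Python. It is an illustration only, not NVIDIA's software: the function names (`hand_coded_and`, `train_perceptron`, `predict`), the single-neuron model, the learning rate and the toy dataset are all assumptions chosen for brevity. The first function is written by a programmer; the second arrives at the same behavior by adjusting weights from labeled examples.

```python
# Toy dataset (an assumption for illustration): inputs and labels for logical AND.
SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def hand_coded_and(x1, x2):
    """Old model: the programmer spells out the rule explicitly."""
    return 1 if (x1 == 1 and x2 == 1) else 0


def predict(weights, inputs):
    """A single artificial neuron: weighted sum followed by a threshold."""
    w1, w2, bias = weights
    x1, x2 = inputs
    return 1 if w1 * x1 + w2 * x2 + bias > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1):
    """New model: the rule is learned from examples, not written by hand."""
    w1 = w2 = bias = 0
    for _ in range(epochs):
        for (x1, x2), label in samples:
            # Nudge each weight in the direction that reduces the error.
            error = label - predict((w1, w2, bias), (x1, x2))
            w1 += learning_rate * error * x1
            w2 += learning_rate * error * x2
            bias += learning_rate * error
    return w1, w2, bias


weights = train_perceptron(SAMPLES)
for inputs, label in SAMPLES:
    print(inputs, "learned:", predict(weights, inputs),
          "hand-coded:", hand_coded_and(*inputs))
```

A production network differs from this sketch mainly in scale: millions of weights instead of three, and far more examples, which is why the training step is the part that benefits from GPUs.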

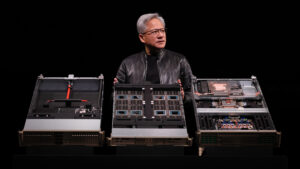

Whereas the old computing model is “instruction processing” intensive, this new computing model requires massive “data processing.” To advance every aspect of AI, we’re building an end-to-end AI computing platform — one architecture that spans training, inference and the billions of intelligent devices that are coming our way.

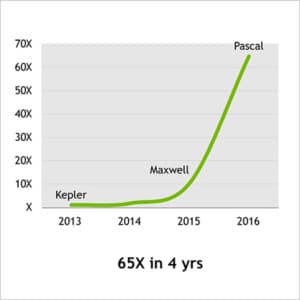

Let’s start with training. Our new Pascal GPU is a $2 billion investment and the work of several thousand engineers over three years. It is the first GPU optimized for deep learning. Pascal can train networks 65 times larger, or train them 65 times faster, than the Kepler GPU that Alex Krizhevsky used in his paper.(1) A single computer of eight Pascal GPUs connected by NVIDIA NVLink, the highest throughput interconnect ever created, can train a network faster than 250 traditional servers.

Soon, the tens of billions of internet queries made each day will require AI, which means that each query will require billions more math operations. The total load on cloud services will be enormous to ensure real-time responsiveness. For faster data center inference performance, we announced the Tesla P40 and P4 GPUs. P40 accelerates data center inference throughput by 40 times. P4 requires only 50 watts and is designed to accelerate 1U OCP servers, typical of hyperscale data centers.

Software is a vital part of NVIDIA’s deep learning platform. For training, we have CUDA and cuDNN. For inferencing, we announced TensorRT, an optimizing inferencing engine. TensorRT improves performance without compromising accuracy by fusing operations within a layer and across layers, pruning low-contribution weights, reducing precision to FP16 or INT8, and many other techniques.
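
Of the techniques listed above, precision reduction is the easiest to illustrate. The following Python sketch shows one common approach, symmetric linear quantization to INT8. It is a generic illustration and not TensorRT's actual algorithm or API; the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor.

    Assumption: symmetric quantization, with the scale chosen from the
    largest absolute value in the input.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 8-bit integers."""
    return [q * scale for q in quantized]


# Hypothetical layer weights, for illustration only.
layer_weights = [0.5, -1.27, 0.0, 1.0, 0.333]
q_weights, scale = quantize_int8(layer_weights)
restored = dequantize(q_weights, scale)

for original, q, approx in zip(layer_weights, q_weights, restored):
    print(f"{original:+.3f} -> {q:+4d} -> {approx:+.3f}")
```

Each weight now fits in one byte instead of four, and the rounding error per weight is at most half the scale factor. Whether that error is acceptable depends on the network, which is why an inference optimizer has to check accuracy after reducing precision.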

Someday, billions of intelligent devices will take advantage of deep learning to perform seemingly intelligent tasks. Drones will autonomously navigate through a warehouse, find an item and pick it up. Portable medical instruments will use AI to diagnose blood samples onsite. Intelligent cameras will learn to alert us only to the circumstances that we care about. We created an energy-efficient AI supercomputer, Jetson TX1, for such intelligent IoT devices. A credit card-sized module, Jetson TX1 can reach 1 TeraFLOP FP16 performance using just 10 watts. It’s the same architecture as our most powerful GPUs and can run all the same software.

In short, we offer an end-to-end AI computing platform — from GPU to deep learning software and algorithms, from training systems to in-car AI computers, from cloud to data center to PC to robots. NVIDIA’s AI computing platform is everywhere.

AI Computing for Every Industry

Our end-to-end platform is the first step to ensuring that every industry can tap into AI. The global ecosystem for NVIDIA GPU deep learning has scaled out rapidly. Breakthrough results triggered a race to adopt AI for consumer internet services — search, recognition, recommendations, translation and more. Cloud service providers, from Alibaba and Amazon to IBM and Microsoft, make the NVIDIA GPU deep learning platform available to companies large and small. The world’s largest enterprise technology companies have configured servers based on NVIDIA GPUs. We were pleased to highlight strategic announcements along our GTC tour to address major industries:

AI Transportation: At $10 trillion, transportation is a massive industry that AI can transform. Autonomous vehicles can reduce accidents, improve the productivity of trucking and taxi services, and enable new mobility services. We announced that both Baidu and TomTom selected NVIDIA DRIVE PX 2 for self-driving cars. With each, we’re building an open “cloud-to-car” platform that includes an HD map, AI algorithms and an AI supercomputer.

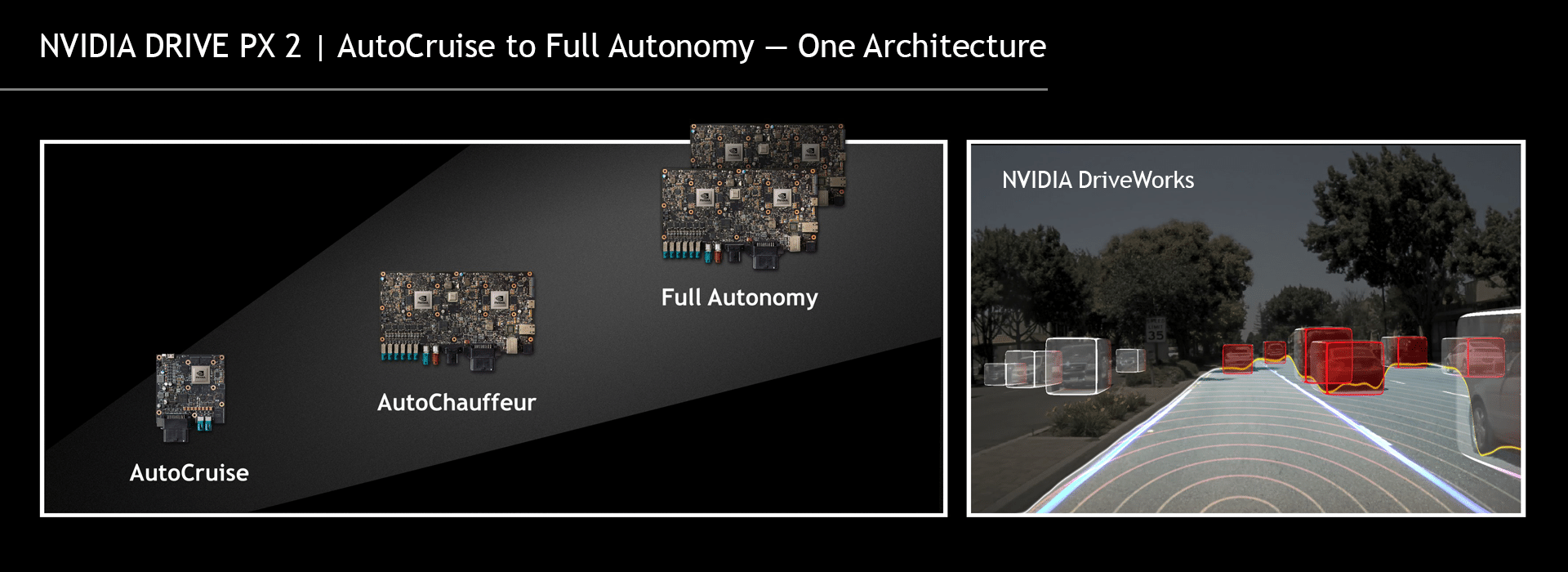

Driving is a learned behavior that we do as second nature, yet it is impossible to program a computer to perform. Autonomous driving requires every aspect of AI: perception of the surroundings, reasoning to determine the conditions of the environment, planning the best course of action, and continuously learning to improve our understanding of the vast and diverse world. The wide spectrum of autonomous driving, from highway hands-free cruising, to autonomous drive-to-destination, to fully autonomous shuttles with no drivers, requires an open, scalable architecture.

NVIDIA DRIVE PX 2 is a scalable architecture that can span the entire range of AI for autonomous driving. At GTC, we announced DRIVE PX 2 AutoCruise designed for highway autonomous driving with continuous localization and mapping. We also released DriveWorks Alpha 1, our OS for self-driving cars that covers every aspect of autonomous driving — detection, localization, planning and action.

We bring all of our capabilities together into our own self-driving car, NVIDIA BB8. Here’s a little video:

NVIDIA is focused on innovation at the intersection of visual processing, AI and high performance computing — a unique combination at the heart of intelligent and autonomous machines. For the first time, we have AI algorithms that will make self-driving cars and autonomous robots possible. But they require a real-time, cost-effective computing platform.

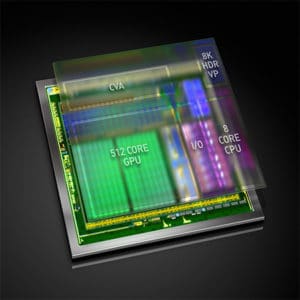

At GTC, we introduced Xavier, the most ambitious single-chip computer we have ever undertaken — the world’s first AI supercomputer chip. Xavier is 7 billion transistors — more complex than the most advanced server-class CPU. Miraculously, Xavier has the equivalent horsepower of DRIVE PX 2 launched at CES earlier this year — 20 trillion operations per second of deep learning performance — at just 20 watts. As Forbes noted, we doubled down on self-driving cars with Xavier.
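
The efficiency figures quoted in this post can be turned into operations per watt with simple arithmetic. The short Python sketch below uses only numbers stated in the text (Jetson TX1 at 1 TeraFLOP FP16 and 10 watts, Xavier at 20 trillion operations per second and 20 watts); the helper name `ops_per_watt` is hypothetical. The two results are not directly comparable, since FP16 floating-point operations and deep learning operations are counted differently.

```python
def ops_per_watt(ops_per_second, watts):
    """Operations per second delivered for each watt consumed."""
    return ops_per_second / watts


# Figures as quoted in the text above.
jetson_tx1 = ops_per_watt(1e12, 10)   # 1 TeraFLOP FP16 at 10 watts
xavier = ops_per_watt(20e12, 20)      # 20 trillion ops/s at 20 watts

print(f"Jetson TX1: {jetson_tx1 / 1e9:.0f} GFLOPS per watt (FP16)")
print(f"Xavier: {xavier / 1e12:.0f} TOPS per watt (deep learning ops)")
```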

AI Enterprise: IBM, which sees a $2 trillion opportunity in cognitive computing, announced a new POWER8 and NVIDIA Tesla P100 server designed to bring AI to the enterprise. On the software side, SAP announced that it has received two of the first NVIDIA DGX-1 supercomputers and is actively building machine learning enterprise solutions for its 320,000 customers in 190 countries.

AI City: There will be 1 billion cameras in the world in 2020. Hikvision, the world leader in surveillance systems, is using AI to help make our cities safer. It uses DGX-1 for network training and has built a breakthrough server, called “Blade,” based on 16 Jetson TX1 processors. Blade requires 1/20 the space and 1/10 the power of the 21 CPU-based servers of equivalent performance.

AI Factory: There are about 2 million industrial robots worldwide. Japan is the epicenter of robotics innovation. At GTC, we announced that FANUC, the Japan-based industrial robotics giant, will build the factory of the future on the NVIDIA AI platform, from end to end. Its deep neural network will be trained with NVIDIA GPUs; GPU-powered FANUC Fog units will drive a group of robots and allow them to learn together; and each robot will have an embedded GPU to perform real-time AI. MIT Tech Review wrote about it in its story “Japanese Robotics Giant Gives Its Arms Some Brains.”

The Next Phase of Every Industry: GPU deep learning is inspiring a new wave of startups — 1,500+ around the world — in healthcare, fintech, automotive, consumer web applications and more. Drive.ai, which was recently licensed to test its vehicles on California roads, is tackling the challenge of self-driving cars by applying deep learning to the full driving stack. Preferred Networks, the Japan-based developer of the Chainer framework, is developing deep learning solutions for IoT. Benevolent.ai, based in London and one of the first recipients of DGX-1, is using deep learning for drug discovery to tackle diseases like Parkinson’s, Alzheimer’s and rare cancers. According to CB Insights, funding for AI startups hit over $1 billion in the second quarter, an all-time high.

The explosion of startups is yet another indicator of AI’s sweep across industries. As Fortune recently wrote, deep learning will “transform corporate America.”

AI for Everyone

AI can solve problems that seemed well beyond our reach just a few years back. From real-world data, computers can learn to recognize patterns too complex, too massive or too subtle for hand-crafted software or even humans. With GPU deep learning, this computing model is now practical and can be applied to solve challenges in the world’s largest industries. Self-driving cars will transform the $10 trillion transportation industry. In healthcare, doctors will use AI to detect disease at the earliest possible moment, to understand the human genome to tackle cancer, or to learn from the massive volume of medical data and research to recommend the best treatments. And AI will usher in the 4th industrial revolution — after steam, mass production and automation — intelligent robotics will drive a new wave of productivity improvements and enable mass consumer customization. AI will touch everyone. The era of AI is here.