AI has shown off a broad repertoire of creative talents in recent years — composing music, writing stories, crafting meme captions. Now, the stage is set for AI models to take on a new venture in the performing arts — by stepping onto the dance floor.

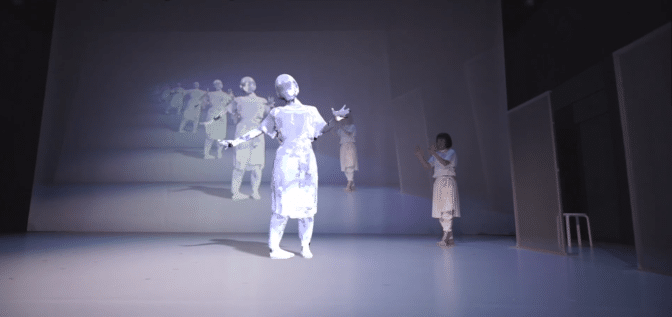

In a Japanese production titled discrete figures, an AI dancer was projected on stage, joining a live dancer for a duet. The show also presented a partly trained neural network that used recordings of audience members dancing as input data.

Japanese digital art group Rhizomatiks, multimedia dance troupe Elevenplay and media artist Kyle McDonald collaborated on the work, which was performed last year in Canada, the United States, Japan and Spain.

AI Choreography, Behind the Scenes

An artist with a technical background, McDonald has worked with dancers since 2010 on visual experiences that “play with the boundary between real and virtual spaces,” he said. He’s been an adjunct professor at NYU’s Tisch School of the Arts and has a graduate degree in electronic arts from Rensselaer Polytechnic Institute.

For discrete figures, McDonald collected 2.5 hours of motion capture data from eight dancers improvising to a consistent beat. He then used NVIDIA GPUs to train a neural network called dance2dance on this data; the network generates movements that can be rendered as 3D stick figures.
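The article doesn't describe dance2dance's internals, so the sketch below covers only the last step it mentions: turning one frame of generated 3D joint positions into the line segments of a stick figure. The six-joint skeleton, the `PARENTS` table and the function names are hypothetical illustrations, not details of McDonald's model.

```python
from typing import List, Sequence, Tuple

Joint = Tuple[float, float, float]   # (x, y, z) position of one joint
Segment = Tuple[Joint, Joint]        # one bone, drawn as a straight line

# Hypothetical 6-joint skeleton (an assumption, not dance2dance's layout):
# PARENTS[i] is the parent of joint i, and -1 marks the root.
# Joints: 0 hips, 1 chest, 2 left hand, 3 right hand, 4 left foot, 5 right foot.
PARENTS = [-1, 0, 1, 1, 0, 0]


def stick_figure_segments(pose: Sequence[Joint], parents: Sequence[int]) -> List[Segment]:
    """Connect every non-root joint to its parent, giving one segment per bone."""
    if len(pose) != len(parents):
        raise ValueError("pose and parents must describe the same number of joints")
    return [(pose[p], pose[i]) for i, p in enumerate(parents) if p >= 0]


def animate(frames: Sequence[Sequence[Joint]], parents: Sequence[int]) -> List[List[Segment]]:
    """Turn a generated motion sequence (one pose per frame) into per-frame segments."""
    return [stick_figure_segments(frame, parents) for frame in frames]


if __name__ == "__main__":
    frame = [(0.0, 1.0, 0.0), (0.0, 1.5, 0.0), (-0.5, 1.2, 0.0),
             (0.5, 1.2, 0.0), (-0.2, 0.0, 0.0), (0.2, 0.0, 0.0)]
    for start, end in stick_figure_segments(frame, PARENTS):
        print(start, "->", end)
```

Any 3D plotting or rendering tool could then draw each segment as a line. Keeping the skeleton as a parent table means the same function works for whatever joint layout a motion capture system exports.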

During a duet between the AI and troupe dancer Masako Maruyama, the AI was overlaid with a 3D body projected on stage, appearing to float next to Maruyama. It started out as a silvery outline before crystallizing into Maruyama’s doppelganger.

The projected figure first performed movements generated in advance by the neural network, then switched to moves set by the dance company's choreographer, Mikiko. Maruyama and the AI dancer moved in unison until she left the stage; the projection then transitioned back into its silvery outline and returned to AI-generated choreography.

The interplay between human-created and AI-generated movement spoke to the main themes of discrete figures.

“I think the audience will start asking more questions about where human intentionality ends, and where automation begins,” said McDonald. “It seems appropriate to ask in a time when algorithms rule everything from what we consume to how we express ourselves.”

Step Up: Audience Members Hit the Dance Floor

Another scene in the production incorporated the pix2pixHD algorithm developed by NVIDIA Research — and called for audience participation.

For each performance, between the time the doors opened and the show began, the team had 16 audience members (of all ages and dance experience levels) step into a booth to record one minute of dance movement against a black backdrop. Each dance video was sent to a single NVIDIA GPU in the cloud, which ran pose estimation on the footage and trained a pix2pixHD model from scratch.
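pix2pixHD is a conditional image-to-image network, so one common way to train it on dance video is to pair each recorded frame with a rendered image of the pose detected in that frame. The article doesn't say how the team encoded poses, so this Python sketch is only an illustration under that assumption: it rasterizes hypothetical 2D keypoints into a bone map with Bresenham's line algorithm and pairs that map with the original frame. The five-bone `BONES` layout and all function names are made up for the example.

```python
from typing import List, Sequence, Tuple

Point = Tuple[int, int]     # (x, y) pixel coordinates of one keypoint
Image = List[List[int]]     # grayscale image stored as rows of pixels

# Hypothetical bones over 6 keypoints (an assumption, not the show's format):
# 0 hips, 1 chest, 2 left hand, 3 right hand, 4 left foot, 5 right foot.
BONES = [(0, 1), (1, 2), (1, 3), (0, 4), (0, 5)]


def draw_line(img: Image, p0: Point, p1: Point, value: int = 1) -> None:
    """Rasterize a line from p0 to p1 in place using Bresenham's algorithm."""
    x0, y0 = p0
    x1, y1 = p1
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    while True:
        if 0 <= y0 < len(img) and 0 <= x0 < len(img[0]):  # clip to the image
            img[y0][x0] = value
        if x0 == x1 and y0 == y1:
            break
        e2 = 2 * err
        if e2 >= dy:
            err += dy
            x0 += sx
        if e2 <= dx:
            err += dx
            y0 += sy


def render_pose(keypoints: Sequence[Point], width: int, height: int,
                bones: Sequence[Tuple[int, int]] = BONES) -> Image:
    """Draw the detected skeleton onto a blank image (the network's input)."""
    img = [[0] * width for _ in range(height)]
    for a, b in bones:
        draw_line(img, keypoints[a], keypoints[b])
    return img


def make_training_pairs(frames: Sequence[object], poses: Sequence[Sequence[Point]],
                        width: int, height: int) -> List[Tuple[Image, object]]:
    """Pair each rendered pose (input) with its recorded frame (target)."""
    if len(frames) != len(poses):
        raise ValueError("need exactly one pose per frame")
    return [(render_pose(kp, width, height), frame) for frame, kp in zip(frames, poses)]
```

A real pipeline would replace the nested lists with image arrays and the hand-written keypoints with a pose estimator's output; the input/target pairing is what lets an image-to-image model learn to turn a pose into a frame of one specific person.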

After just 15 minutes of training, each model generated an output video of a single synchronized dance. By the time the show began, McDonald had compiled these 16 AI-created videos into a montage set to music, which was shown on a projection screen during the performance.

Using audience recordings allowed participants to experience their “movement data being reimagined by the machine,” McDonald wrote in a Medium post describing the project. “It follows a recurring theme throughout the entire performance: seeing your humanity reflected in the machine, and vice versa.”

The scene also included a creative visualization of the neural network’s raw data using 3D point clouds and a skeleton model of the generated dancer.

“When we saw the unfinished look of pix2pixHD after only 15 minutes of training, we felt that part of the training process would be perfect for the stage,” said McDonald. “The first 15 minutes of training really captures some themes in the performance such as the emergence of AI and the messy boundaries between the digital and physical worlds.”