NVIDIA’s playing a bigger role in high performance computing than ever, just as supercomputing itself has become central to meeting the biggest challenges of our time.

Speaking just hours ahead of the start of the annual SC18 supercomputing conference in Dallas, NVIDIA CEO Jensen Huang told 700 researchers, lab directors and execs about forces that are driving the company to push both into “scale-up” computing — focused on large supercomputing systems — as well as “scale-out” efforts, for researchers, data scientists and developers to harness the power of however many GPUs they need.

“The HPC industry is fundamentally changing,” Huang told the crowd. “It started out in scientific computing, and the architecture was largely scale up. Its purpose in life was to simulate from first principles the laws of physics. In the future, we will continue to do that, but we have a new tool — this tool is called machine learning.”

Machine learning — which has caught fire over the past decade among businesses and researchers — can now take advantage of both the ability to to scale up, with powerful GPU-accelerated machines, as well as the fast-growing ability to scale out workloads to sprawling GPU-powered data centers.

Scaling Up the World’s Supercomputers

That’s because data scientists are facing the same kinds of challenges that those in the hyperscale community have faced for more than a decade: the need to continue accelerating their work, even as Moore’s law — which long drove increases in the computing power offered by CPUs — sputtered out.

This year 127 of the world’s top 500 supercomputers are powered by NVIDIA, according to the newly released TOP500 list of the world’s fastest supercomputers. And fully half of their overall processing power is driven by GPUs.

In addition to powering the world’s two fastest supercomputers, NVIDIA GPUs power 22 of the top 25 machines on the Green500 list of the most energy-efficient supercomputers.

“The No. 1 fastest supercomputer in the world, the fastest supercomputer in America, the fastest supercomputer in Europe and the fastest supercomputer in Japan — all powered by NVIDIA Volta V100,” Huang said.

One Architecture — Scaling Out, Scaling Up

NVIDIA’s bringing that ability to scale up to the world’s data centers. Shortly after its introduction, NVIDIA’s new T4 cloud GPU with revolutionary multi-precision Tensor Cores — is being adopted at a record pace. Huang said that it’s now available on the Google Cloud Platform, in addition to being featured in 57 server designs from the world’s leading computer makers.

“I am just blown away by how fast Google Cloud works, in the 30 days from production, it was deployed at scale on the cloud,” Huang said.

Another example of scaling up: NVIDIA’s DGX-2, a single node with 16 V100 GPUs connected with NVLink, which produce two petaflops of processing power.

Huang showed off the system several times to the audience, and joked that it’s “super heavy” and you have to be “super strong” to hold it, while hefting in the HGX-2 board — two of which are at the heart of the DGX-2 — and which are being sold by leading OEMs.

“This is the hallmark of ‘scale up,’ this is what scale-up computing is all about,” Huang said.

Because it all runs on a single software ecosystem that developers have painstakingly woven together over the past decade, Huang explained, developers can now instantly scale out across growing numbers of GPUs.

As part of that effort, Huang announced new multi-node HPC and visualization containers for the NGC container registry, which allow supercomputing users to run GPU-accelerated applications on large-scale clusters. NVIDIA also announced a new NGC-Ready program, including workstations and servers from top vendors.

These systems are ready to run a growing collection of software that just works, on one system or across many. “This isn’t the app store for apps you want, this is the app store for the apps you need,” Huang said. “It’s all lovingly maintained and tested and optimized.”

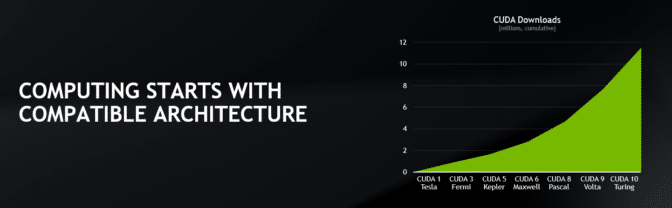

Compatible Architecture

All of this builds on the CUDA foundation NVIDIA has been developing for more than 10 years. That foundation allows “investments made in the past to be carried into the future,” Huang explained.

Each new version of CUDA, now on version 10.0, offers faster performance than the last, and each new GPU architecture — Tesla, Fermi, Kepler, Maxwell, Pascal, Volta and now Turing — accelerates software running on CUDA even further.

“Your investments in software last a long time, and the investment you make in hardware is instantly worthwhile,” Huang said.

GPUs Powering HPC Breakthroughs

This performance is paying off for top researchers. NVIDIA GPUs run on the newly commissioned world’s fastest Summit supercomputer at Oak Ridge National Laboratory and power five of the six finalists for the ACM Gordon Bell Prize, Huang said.

The winner of the coveted prize — which recognizes outstanding achievements in HPC — will be announced at the end of the show on Thursday.

Now GPUs are being put to work by growing numbers of businesses and researchers in machine learning. Huang noted that the entire computer industry is moving toward high performance computing.

RAPIDS, an open-source software suite for accelerating data analytics and machine learning, allows data scientists to execute end-to-end data science training pipelines completely on NVIDIA GPUs. It works the way data scientists work, Huang explained.

RAPIDS relies on CUDA primitives for low-level compute optimization, but exposes that GPU parallelism and high-memory bandwidth through user-friendly Python interfaces. The RAPIDS open source software library mimics the pandas API and is built on Apache Arrow to maximize interoperability and performance.

“We made it super easy to come onto this platform, and it’s completely open sourced,” Huang said.

Flower Power

Huang also showed the latest version of an inferencing demo that identifies flowers, which seems to grow more staggering every time he shows it. Initially showing how a system running Intel CPUs can classify five images per second, he showed how the new NVIDIA T4 GPU on Google Cloud can take advantage of NGC to recognize images of more than 50,000 flowers a second.

And, in a striking model of galactic winds, Huang transported the audience to another galaxy — a dwarf galaxy one-fifth the size of the Milky Way — to show how IndeX, an NVIDIA plug-in for the ParaView data analysis and visualization application, harnesses GPUs to deliver real-time performance on breathtakingly large datasets.

While the audience watched, with just a few keystrokes, a 7-terabyte dataset was turned into an interactive simulation of gasses flying in all directions as a galaxy spun around its axis.

Huang also demonstrated how GPU-accelerated systems running key applications such as COSMO, WRF and MPAS can tackle one of HPC’s biggest challenges — weather prediction — creating visually stunning models of the weather over the Alps.

“We’re not looking at a video right now, we’re looking at the future of predicting microclimates,” Huang said.

It’s only one latest example of why researchers are clamoring for GPU-powered systems. And why, thanks to GPUs, demand for the world’s fastest computers is growing faster than ever.