You don’t need specialized hardware to do ray tracing, but you want it.

Software-based ray tracing, of course, is nothing new. And it looks great: movie makers have been using it for decades.

But it’s now clear that specialized hardware — like the RT Cores built into NVIDIA’s Turing architecture — makes a huge difference if you’re doing ray tracing in real time. Games require real-time ray tracing.

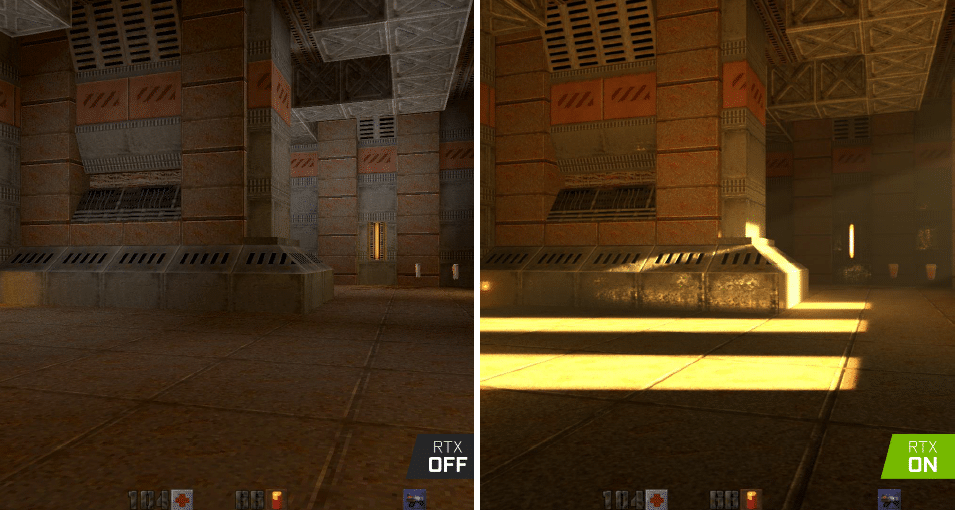

Once considered the “holy grail” of graphics, real-time ray tracing brings the same techniques long used by movie makers to gamers and creators.

Thanks to a raft of new AAA games released this year, and the introduction last year of NVIDIA GeForce RTX GPUs, this once wild idea is now mainstream.

Millions are now firing up PCs that benefit from the RT Cores and Tensor Cores built into RTX. And they’re enjoying ray-tracing-enhanced experiences many thought would be years, even decades, away.

Real-time ray tracing, however, is possible without dedicated hardware. While ray tracing itself has been around for decades, the real trend is much newer: GPU-accelerated ray tracing, which can run on a GPU’s general-purpose shader cores as well as on dedicated cores.

The use of GPUs to accelerate ray-tracing algorithms gained fresh momentum last year with the introduction of Microsoft’s DirectX Raytracing (DXR) API. And that’s great news for gamers and creators.

Ray Tracing Isn’t New

So what is ray tracing? Look around you. The objects you’re seeing are illuminated by beams of light. Now follow the path of those beams backwards from your eye to the objects that light interacts with. That’s ray tracing.
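In code, “following the beams backwards from your eye” becomes: shoot one ray from the eye through each pixel, find the nearest object it hits, and shade that point. The minimal Python sketch below does this for a single sphere with simple Lambertian (cosine) lighting. The scene, the function names `ray_sphere_hit` and `render`, and the shading model are illustrative assumptions, not how any particular renderer or game implements ray tracing.

```python
import math

def normalize(v):
    length = math.sqrt(sum(x * x for x in v))
    return [x / length for x in v]

def ray_sphere_hit(origin, direction, center, radius):
    """Distance t to the nearest hit in front of the ray, or None.

    Solves |origin + t*direction - center|^2 = radius^2 for t,
    assuming `direction` is unit length.
    """
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # the ray misses the sphere
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):  # nearer root first
        if t > 1e-9:
            return t
    return None  # the sphere is entirely behind the ray

def render(width, height, center, radius, to_light):
    """Trace one ray per pixel from an eye at the origin looking down -z.

    Returns a height x width grid of brightness values in [0, 1].
    `to_light` is the direction pointing from a surface toward the light.
    """
    eye = [0.0, 0.0, 0.0]
    light = normalize(to_light)
    image = []
    for j in range(height):
        row = []
        for i in range(width):
            # Map the pixel center onto an image plane at z = -1 spanning [-1, 1]
            x = (i + 0.5) / width * 2 - 1
            y = 1 - (j + 0.5) / height * 2
            direction = normalize([x, y, -1.0])
            t = ray_sphere_hit(eye, direction, center, radius)
            if t is None:
                row.append(0.0)  # background
                continue
            hit = [e + t * d for e, d in zip(eye, direction)]
            normal = normalize([h - c for h, c in zip(hit, center)])
            # Lambertian shading: brightness is the cosine of the angle to the light
            row.append(max(0.0, sum(n * l for n, l in zip(normal, light))))
        image.append(row)
    return image

# Print an 8x8 image as characters: '#' bright, '.' dim, ' ' background or unlit
for row in render(8, 8, [0.0, 0.0, -3.0], 1.0, [1.0, 1.0, 1.0]):
    print("".join("#" if v > 0.5 else "." if v > 0 else " " for v in row))
```

Production ray tracers add many more rays per pixel (shadows, reflections, global illumination), but each one is still this same “cast a ray, find the nearest hit” query.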

It’s a technique first described by IBM’s Arthur Appel in 1968, in “Some Techniques for Shading Machine Renderings of Solids.” Thanks to pioneers such as Turner Whitted, Lucasfilm’s Robert Cook, Thomas Porter and Loren Carpenter, Caltech’s Jim Kajiya, and a host of others, ray tracing is now the standard in the film and computer graphics industry for creating lifelike lighting and images.

However, until last year, almost all ray tracing was done offline, because it is so compute intensive. Even today, the effects you see at movie theaters require sprawling, CPU-equipped server farms. Gamers want to play interactive, real-time games. They won’t wait minutes or hours per frame.

GPUs, by contrast, can move much faster, because they split complex tasks across large numbers of parallel computing cores. And, traditionally, they’ve used another rendering technique, known as “rasterization,” to display three-dimensional objects on a two-dimensional screen.

With rasterization, objects on the screen are built from a mesh of virtual triangles, or polygons, that form 3D models of objects. In this mesh, the corners of each triangle, known as vertices, are shared with neighboring triangles of different sizes and shapes. Rasterization projects each triangle onto the 2D screen and works out which pixels it covers. It’s fast, and the results have gotten very good, even if they still don’t always match what ray tracing can do.
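The core coverage step of rasterization can be sketched in a few lines of Python: given one triangle whose vertices have already been projected to 2D screen coordinates, test each pixel center against the triangle’s three edges. The edge-function approach and the names `edge` and `rasterize_triangle` are illustrative assumptions; real GPUs do this in fixed-function hardware with extra rules (such as a top-left fill convention and depth testing) that are omitted here.

```python
import math

def edge(a, b, p):
    """Twice the signed area of triangle (a, b, p): positive if p lies to the
    left of the directed edge a -> b, zero if p is on that line."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterize_triangle(v0, v1, v2, width, height):
    """Return the (x, y) pixels whose centers fall inside a 2D triangle.

    Vertices are assumed to be already projected to screen coordinates.
    Pixel centers exactly on an edge count as covered (no fill rule).
    """
    area = edge(v0, v1, v2)
    if area == 0:
        return set()  # degenerate triangle: covers no pixels
    xs = (v0[0], v1[0], v2[0])
    ys = (v0[1], v1[1], v2[1])
    # Only pixels in the triangle's bounding box (clipped to the screen) can be covered
    x_lo, x_hi = max(math.floor(min(xs)), 0), min(math.ceil(max(xs)), width)
    y_lo, y_hi = max(math.floor(min(ys)), 0), min(math.ceil(max(ys)), height)
    covered = set()
    for y in range(y_lo, y_hi):
        for x in range(x_lo, x_hi):
            p = (x + 0.5, y + 0.5)  # sample at the pixel center
            w = (edge(v1, v2, p), edge(v2, v0, p), edge(v0, v1, p))
            # Inside when all three edge tests share the triangle's winding sign
            if all(wi * area >= 0 for wi in w):
                covered.add((x, y))
    return covered

# A right triangle in a 4x4 pixel grid; draw covered pixels as '#'
pixels = rasterize_triangle((0, 0), (4, 0), (0, 4), 4, 4)
for y in range(4):
    print("".join("#" if (x, y) in pixels else "." for x in range(4)))
# prints:
# ####
# ###.
# ##..
# #...
```

Every triangle is handled independently, which is why this step parallelizes so well on GPUs; the trade-off is that effects depending on other objects in the scene (shadows, reflections) need extra tricks rather than falling out naturally as they do with ray tracing.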

GPUs Take on Ray Tracing

But what if you used these GPUs — and their parallel processing capabilities — to accelerate ray tracing? This is where GPU-accelerated software ray tracing comes in. NVIDIA OptiX, introduced in 2009, targeted design professionals with GPU-accelerated ray tracing. Over the next decade, OptiX rode the steady advance in speed delivered by successive generations of NVIDIA GPUs.

By 2015, NVIDIA was demonstrating at SIGGRAPH how ray tracing could turn a CAD model into a photorealistic image — indistinguishable from a photograph — in seconds, speeding up the work of architects, product designers and graphic artists.

That approach, GPU-accelerated software ray tracing, was endorsed by Microsoft early last year with the introduction of DXR, an API through which NVIDIA RTX ray tracing is fully supported.

Delivering high-performance, real-time ray tracing required two innovations: dedicated ray-tracing hardware, in the form of RT Cores, and Tensor Cores, which provide high-performance AI processing for advanced denoising, anti-aliasing and super resolution.

RT Cores accelerate ray tracing by speeding up the process of finding where a ray intersects the 3D geometry of a scene. These specialized cores accelerate traversal of a tree-based data structure called a bounding volume hierarchy, or BVH, which is used to calculate where rays intersect the triangles that make up a computer-generated scene.
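The Python sketch below shows, on the CPU, the kind of work RT Cores take over in hardware: testing a ray against a BVH’s nested bounding boxes so that whole groups of triangles can be skipped, and running an exact ray-triangle test only at the leaves. The specific choices here (a median-split build, the “slab” test for boxes, the Möller-Trumbore triangle test) and all names are illustrative assumptions; they do not describe how RT Cores or NVIDIA’s drivers actually build or traverse BVHs.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec = Tuple[float, float, float]
Tri = Tuple[Vec, Vec, Vec]

def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])

def ray_triangle(orig: Vec, d: Vec, tri: Tri, eps: float = 1e-9) -> Optional[float]:
    """Moller-Trumbore ray/triangle test: hit distance t, or None on a miss."""
    v0, v1, v2 = tri
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(d, e2)
    det = dot(e1, p)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle's plane
    s = sub(orig, v0)
    u = dot(s, p) / det
    if u < 0 or u > 1:
        return None
    q = cross(s, e1)
    v = dot(d, q) / det
    if v < 0 or u + v > 1:
        return None  # outside the triangle (barycentric test)
    t = dot(e2, q) / det
    return t if t > eps else None  # ignore hits behind the ray origin

def ray_box(orig: Vec, d: Vec, lo: Vec, hi: Vec, t_max: float) -> bool:
    """Slab test: does the ray enter the axis-aligned box before t_max?"""
    t0, t1 = 0.0, t_max
    for a in range(3):
        if d[a] == 0.0:
            if not lo[a] <= orig[a] <= hi[a]:
                return False  # parallel to this slab and outside it
            continue
        ta, tb = (lo[a] - orig[a]) / d[a], (hi[a] - orig[a]) / d[a]
        t0, t1 = max(t0, min(ta, tb)), min(t1, max(ta, tb))
        if t0 > t1:
            return False
    return True

@dataclass
class Node:
    lo: Vec
    hi: Vec
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    tris: Optional[List[Tri]] = None  # only leaves hold triangles

def build(tris: List[Tri], leaf_size: int = 2) -> Node:
    """Build a BVH by splitting at the median centroid along the longest axis."""
    pts = [p for t in tris for p in t]
    lo = tuple(min(p[a] for p in pts) for a in range(3))
    hi = tuple(max(p[a] for p in pts) for a in range(3))
    if len(tris) <= leaf_size:
        return Node(lo, hi, tris=tris)
    axis = max(range(3), key=lambda a: hi[a] - lo[a])
    tris = sorted(tris, key=lambda t: t[0][axis] + t[1][axis] + t[2][axis])
    mid = len(tris) // 2
    return Node(lo, hi, build(tris[:mid], leaf_size), build(tris[mid:], leaf_size))

def closest_hit(node: Node, orig: Vec, d: Vec, t_max: float = math.inf) -> Optional[float]:
    """Nearest hit distance, skipping every subtree whose box the ray misses."""
    if not ray_box(orig, d, node.lo, node.hi, t_max):
        return None  # prune: nothing in this subtree can be hit
    best = None
    if node.tris is not None:
        for tri in node.tris:
            t = ray_triangle(orig, d, tri)
            if t is not None and t < t_max:
                best = t_max = t
    else:
        for child in (node.left, node.right):
            t = closest_hit(child, orig, d, t_max)
            if t is not None:
                best = t_max = t  # children only return hits closer than t_max
    return best

# Five parallel triangles facing the camera at z = -1 ... -5, listed out of order
scene = [((-1.0, -1.0, -z), (1.0, -1.0, -z), (0.0, 1.0, -z)) for z in (5.0, 1.0, 3.0, 2.0, 4.0)]
root = build(scene)
print(closest_hit(root, (0.0, 0.0, 0.0), (0.0, 0.0, -1.0)))  # nearest triangle: 1.0
```

Because a ray that misses a box skips everything beneath it, a well-balanced tree typically needs far fewer triangle tests than checking every triangle, which is why speeding up this traversal pays off so directly.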

Tensor Cores, first unveiled in 2017 with NVIDIA’s Volta architecture for enterprise and scientific computing, were built to accelerate AI algorithms. In RTX GPUs, they further accelerate graphically intense workloads through an AI technique called NVIDIA DLSS, short for Deep Learning Super Sampling, which renders fewer pixels and uses a trained neural network to reconstruct a higher-resolution image.

Turing at Work

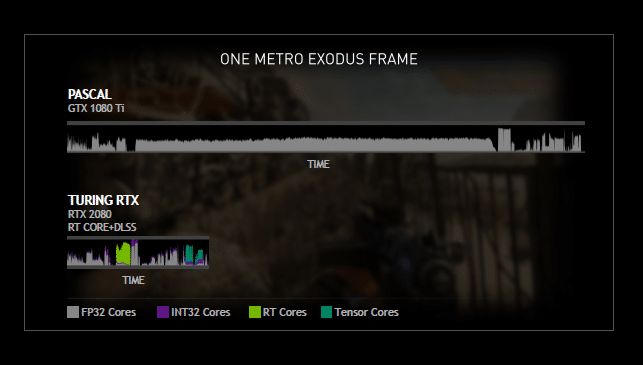

You can see how this works by comparing how quickly Turing and our previous-generation Pascal architecture render a single frame of Metro Exodus.

On Turing, you can see several things happening. One, shown in green, is our RT Cores kicking in. As you can see, the same ray-tracing work that runs on a Pascal GPU takes one-fifth of the time on Turing.

To bring this reinvention of graphics to market, NVIDIA and our partners have been shipping Turing in a stack of products that now ranges from the highest-performance product, at $999, all the way down to an entry-level gaming card, at $149. The RTX products, with RT Cores and Tensor Cores, start at $349.

Broad Support

There’s no question that real-time ray tracing is the next generation of gaming.

Some of the most important ecosystem partners have announced their support and are now opening the floodgates for real-time ray tracing in games.

Part of Microsoft’s DirectX 12 multimedia programming interfaces is a ray-tracing component called DirectX Raytracing (DXR). So every PC with a GPU whose driver supports DXR is capable of accelerated ray tracing.

At the Game Developers Conference in March, we turned on DXR-accelerated ray tracing on our Pascal and Turing GTX GPUs.

To be sure, earlier GPU architectures, such as Pascal, were designed to accelerate DirectX 12, but they have no dedicated ray-tracing hardware. So on these GPUs, ray-tracing calculations run on the programmable shader cores, a resource shared with many other graphics functions of the GPU.

Your mileage will vary, since ray tracing can be implemented in many ways. But Turing RTX GPUs, with their RT Cores, will consistently perform better when playing games that use ray-tracing effects.

And that performance advantage on the most popular games is only going to grow.

EA’s AAA engine, Frostbite, supports ray tracing. Unity and Unreal, which together power 90 percent of the world’s games, now support Microsoft’s DirectX Raytracing in their engines.

Collectively, that opens an easy path for thousands of game developers to implement ray tracing in their games.

All told, NVIDIA is working with more than 100 developers on ray-traced games.

To date, millions of gamers are playing on RTX hardware: GPUs accelerated by RT Cores.

And — thanks to ray tracing — that number is growing every week.

Learn about the key concepts of this technology in the on-demand webinar, Ray-Tracing Essentials.