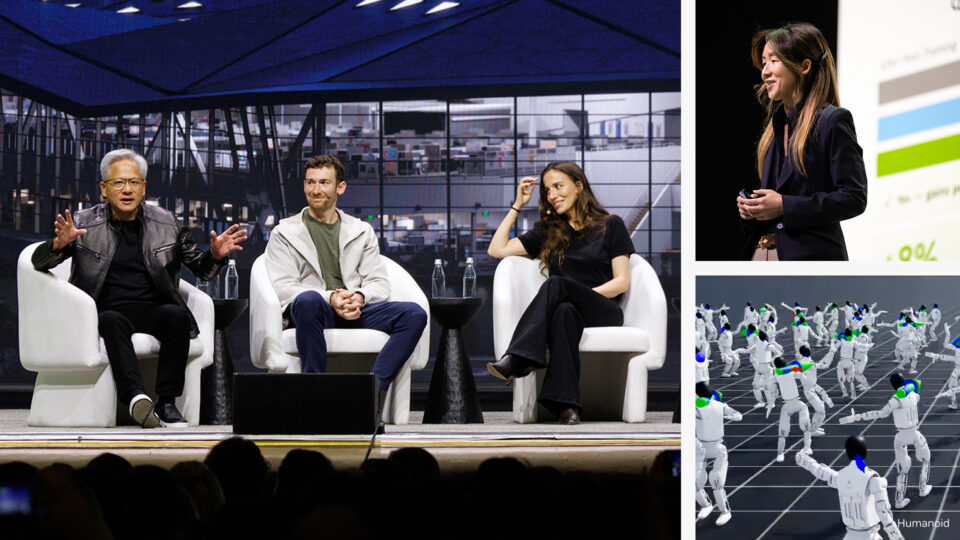

HPE and NVIDIA Debut AI Factory Stack to Power Next Industrial Shift

To speed up AI adoption across industries, HPE and NVIDIA today launched new AI factory offerings at HPE Discover in Las Vegas. The new lineup includes everything from modular AI...