Innovation in medical device technology combined with AI is giving healthcare professionals better decision-making tools to deliver care in robot-assisted surgery, interventional radiology, radiation therapy planning and more.

To enable this for clinical applications, AI-supported medical devices must have an accelerated pipeline to process, predict and visualize data in real time.

NVIDIA Clara Holoscan, a new AI computing platform for the healthcare industry, powered by NVIDIA AGX Orin, provides the computational infrastructure needed for scalable, software-defined, end-to-end processing of streaming data from medical devices.

Built as an end-to-end platform for seamlessly bridging medical devices with edge servers, it allows developers to create AI microservices that run low-latency streaming applications on devices while passing more complex tasks to data center resources.

From Pipe Dream to Real-Time Pipeline

Virtually every intelligent medical device has a similar processing pipeline: data starts at the sensor, moves into the data domain for processing and is then visualized for human decision-making. Depending on the device at hand (a CT scanner, an endoscope or an ICU in-room camera), a different level of computation is required at each stage of the workflow.

NVIDIA Clara Holoscan accelerates each of these phases:

- High-speed I/O: NVIDIA GPUDirect RDMA through NVIDIA ConnectX SmartNICs or third-party PCI Express cards allows for streaming data directly to the GPU memory for ultra-low-latency downstream processing.

- Physics processing: Once the data has been transmitted to the GPU, CUDA-X and NVIDIA Triton Inference Server accelerate physics-based calculations or AI processing to transform the sensor data into the image domain — for example, through image reconstruction in X-ray and CT, or beamforming in ultrasound.

- Image processing: Image data is fed into AI models using NVIDIA Triton to detect, classify, segment or track objects.

- Data processing: By combining image data streaming from the sensor with other previously acquired images using the NVIDIA cuCIM library, developers can perform registration or enhance the data with supplemental information like electronic health records.

- Rendering: The device data and resulting predictions can be visualized in 3D in real time with Clara Render Server, as an interactive cinematic render in NVIDIA Omniverse, or in augmented reality with CloudXR. This can, for example, give clinicians a better picture of an organ or tumor being segmented.
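The stages above can be pictured as a chain of functions that each frame passes through in order. The following is a minimal, CPU-only sketch in plain Python and does not use any Clara Holoscan, CUDA-X, Triton or cuCIM API. Every name in it (`Frame`, `acquire`, `reconstruct`, `infer`, `enrich`, `render`, `run_pipeline`) is hypothetical, and the toy math merely stands in for the real reconstruction and inference steps.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, Iterator, List


@dataclass
class Frame:
    """One unit of streaming data as it moves through the pipeline (hypothetical)."""
    index: int
    samples: List[float]                              # raw sensor samples
    image: List[float] = field(default_factory=list)  # reconstructed pixels
    labels: List[int] = field(default_factory=list)   # per-pixel predictions
    metadata: Dict[str, str] = field(default_factory=dict)


def acquire(n_frames: int, width: int = 4) -> Iterator[Frame]:
    """High-speed I/O stand-in: yield synthetic sensor frames one at a time."""
    for i in range(n_frames):
        yield Frame(index=i, samples=[float(i + j) for j in range(width)])


def reconstruct(frame: Frame) -> Frame:
    """Physics-processing stand-in: normalize samples into a 0..1 'image'."""
    peak = max(frame.samples) or 1.0  # avoid dividing by zero on an all-zero frame
    frame.image = [s / peak for s in frame.samples]
    return frame


def infer(frame: Frame, threshold: float = 0.5) -> Frame:
    """Image-processing stand-in: threshold 'segmentation' instead of an AI model."""
    frame.labels = [1 if px >= threshold else 0 for px in frame.image]
    return frame


def enrich(frame: Frame, records: Dict[str, str]) -> Frame:
    """Data-processing stand-in: attach supplemental information to the frame."""
    frame.metadata = dict(records)
    return frame


def render(frame: Frame) -> str:
    """Rendering stand-in: draw the segmentation mask as text."""
    return "".join("#" if label else "." for label in frame.labels)


def run_pipeline(frames: Iterable[Frame],
                 stages: List[Callable[[Frame], Frame]]) -> Iterator[Frame]:
    """Push each frame through every stage in order, one frame at a time."""
    for frame in frames:
        for stage in stages:
            frame = stage(frame)
        yield frame


if __name__ == "__main__":
    records = {"patient_id": "example-001"}  # made-up supplemental record
    stages = [reconstruct, infer, lambda f: enrich(f, records)]
    for frame in run_pipeline(acquire(3), stages):
        print(frame.index, render(frame), frame.metadata)
```

Because frames are produced and consumed lazily, each one is fully processed before the next is acquired, which is the property a streaming pipeline needs. In a real deployment each stage would be GPU-accelerated rather than a Python function.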

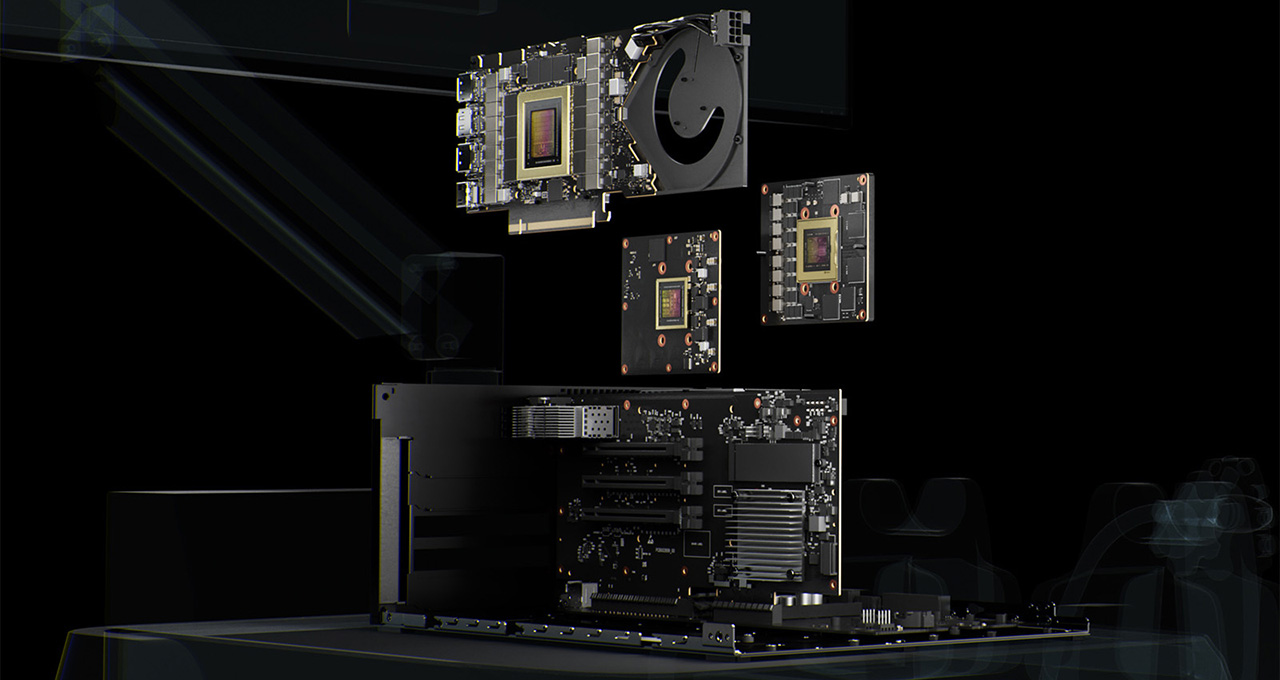

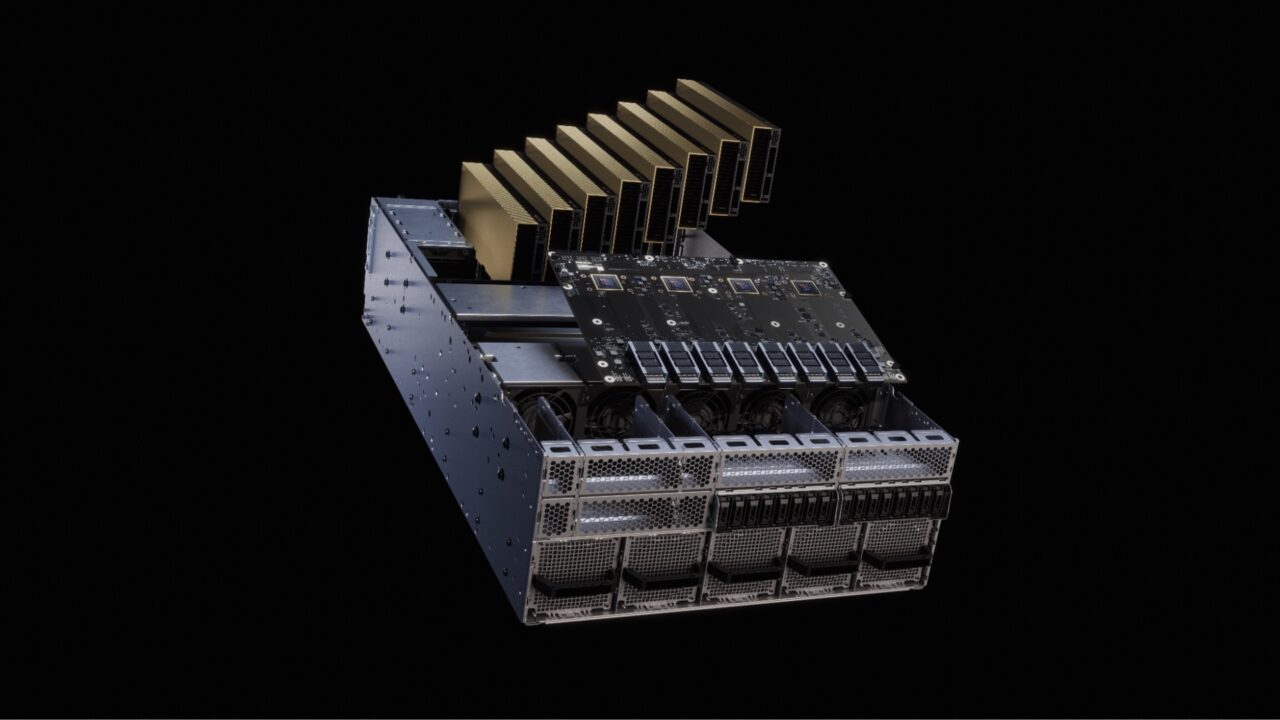

Clara Holoscan is a scalable architecture, extending from medical devices to NVIDIA-Certified edge servers, to NVIDIA DGX systems in the data center or the cloud. The platform allows developers to add as much or as little compute and input/output capability in their medical device as needed, balanced against the demands of latency, cost, space, power and bandwidth.
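One way to reason about that balance is to compare each task's latency budget with where it could run. The sketch below is purely illustrative and is not how Clara Holoscan schedules work. The `Task` type, the `place` function and all timing numbers are assumptions made up for the example. The rule it encodes is simple: keep a task on the device when it meets its budget there, offload it when only the server plus the network round trip fits, and flag it otherwise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """A processing step with rough timing estimates (all values hypothetical)."""
    name: str
    latency_budget_ms: float   # longest end-to-end delay the use case tolerates
    device_compute_ms: float   # estimated run time on the embedded device
    server_compute_ms: float   # estimated run time on an edge or data center server


def place(task: Task, network_round_trip_ms: float) -> str:
    """Return 'device', 'server' or 'unschedulable' for a single task."""
    # Prefer the device: it avoids the network hop entirely.
    if task.device_compute_ms <= task.latency_budget_ms:
        return "device"
    # Otherwise offload, but only if compute plus transfer still fits the budget.
    if task.server_compute_ms + network_round_trip_ms <= task.latency_budget_ms:
        return "server"
    return "unschedulable"


if __name__ == "__main__":
    tasks = [
        Task("instrument tracking", latency_budget_ms=30,
             device_compute_ms=12, server_compute_ms=3),
        Task("volumetric reconstruction", latency_budget_ms=2000,
             device_compute_ms=9000, server_compute_ms=600),
    ]
    for task in tasks:
        print(task.name, "->", place(task, network_round_trip_ms=40))
```

A fuller model would also weigh cost, space, power and bandwidth, which this sketch leaves out.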

Accelerating the Medical Device Ecosystem

Many medical device companies are innovating with AI and robotics and using NVIDIA accelerated computing platforms to develop applications for robotic surgery, mobile CT scans, bronchoscopy and more.

NVIDIA Clara Holoscan was developed to better support applications like these by helping device makers scale up from device to data center — and scale out by accessing the breadth of NVIDIA AI solutions.

To accelerate the development of real-time medical devices with a range of sensor inputs, the Clara Holoscan platform supports I/O cards from members of NVIDIA Inception, an accelerator program for AI and data science startups, including:

- AJA Video Systems – video capture cards for endoscopy and surgical visualization applications

- KAYA Instruments – video capture cards commonly used in microscopy and scientific imaging instruments

- us4us – research front-end devices enabling development of software-defined ultrasound solutions

Verasonics, a leader in ultrasound research front-end hardware, will enable support for Clara Holoscan to stream data directly to NVIDIA GPUs for processing using high-speed networking technologies.

Clara Holoscan SDK: Develop Once, Deploy Anywhere

With Clara Holoscan, developers can customize their applications to run as a series of modular microservices on the device as well as the server. Because it’s software-defined, medical device companies can continue to upgrade and improve their solutions over time.

The Clara Holoscan SDK supports this work with acceleration libraries, AI models and reference applications in ultrasound, digital pathology, endoscopy and more to help developers take advantage of embedded and scalable hybrid-cloud compute. With an end-to-end platform for deployment, it’s easier for companies to upgrade their install base, bringing new research breakthroughs to the day-to-day practice of medicine.

To get started, visit the NVIDIA Clara Holoscan site. Learn more about AI in healthcare at NVIDIA GTC, running online through Nov. 11.
