Editor’s note: This post was updated on Aug. 8, 2023 to include details on the NVIDIA Ada Lovelace architecture.

The largest AI models can take months to train on today’s computing platforms. That’s too slow for businesses.

And AI, high performance computing and data analytics have grown in complexity; some large language models and others now include trillions of parameters.

The NVIDIA Hopper architecture is built from the ground up to accelerate these next-generation AI workloads with massive compute power and fast memory to handle growing networks and datasets.

Transformer Engine, first introduced with the NVIDIA Hopper architecture and then incorporated in the NVIDIA Ada Lovelace architecture, significantly speeds up AI performance and capabilities, and helps train large models within days or even hours.

Training AI Models With Transformer Engine

Transformer models are the backbone of LLMs used widely today, such as BERT and GPT. Initially developed for natural language processing use cases, their versatility is increasingly being applied to computer vision, drug discovery and more.

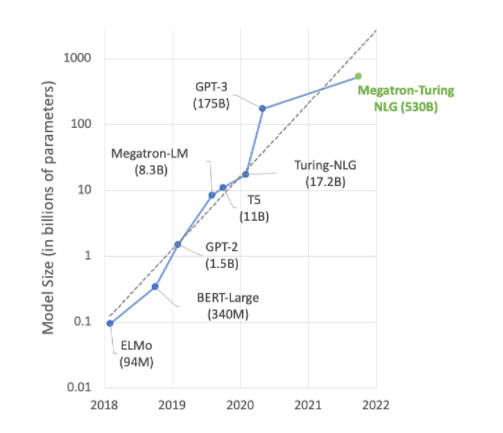

However, model size has continued to increase exponentially, now reaching trillions of parameters. This trend is causing training times to stretch into months due to huge amounts of computation, which is impractical for many business needs.

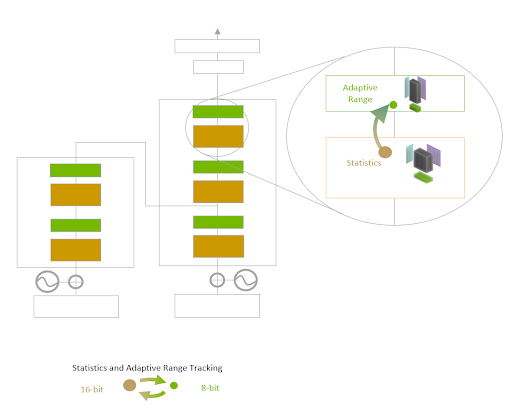

Transformer Engine uses 16-bit floating-point precision and a newly added 8-bit floating-point data format combined with advanced software algorithms that will further speed up AI performance and capabilities.

AI training relies on floating-point numbers, which have fractional components, like 3.14. Introduced with the NVIDIA Ampere architecture, the TensorFloat-32 (TF32) floating point format is the default 32-bit format used in NVIDIA’s TensorFlow and PyTorch containers.

Most AI floating-point math is done using 16-bit “half” precision (FP16) and 32-bit “single” precision (FP32). By reducing the math operations to just eight bits, Transformer Engine makes it possible to train larger networks faster without compromising accuracy.

When coupled with other new features in the Hopper architecture — like the NVLink Switch system, which provides a direct high-speed interconnect between nodes — H100-accelerated server clusters can train enormous networks that were nearly impossible to train at the speed necessary for enterprises.

Diving Deeper Into Transformer Engine

Transformer Engine uses software and custom NVIDIA fourth-generation Tensor Core technology designed to accelerate training for models built from the prevalent AI model building block, the transformer. These Tensor Cores can apply mixed FP8 and FP16 formats to dramatically accelerate AI calculations for transformers. Tensor Core operations in FP8 have twice the computational throughput of 16-bit operations.

The challenge for models is to intelligently manage the precision to maintain accuracy while gaining the performance of smaller, faster numerical formats. Transformer Engine enables this with custom, NVIDIA-tuned heuristics that dynamically choose between FP8 and FP16 calculations and automatically handle re-casting and scaling between these precisions in each layer of a neural network.

The NVIDIA Hopper architecture in particular also advances fourth-generation Tensor Cores by tripling the floating-point operations per second compared with prior-generation TF32, FP64, FP16 and INT8 precisions. Combined with Transformer Engine and fourth-generation NVLink, Hopper Tensor Cores enable an order-of-magnitude speedup for HPC and AI workloads.

Revving Up Transformer Engine

Much of the cutting-edge work in AI revolves around LLMs like Megatron 530B. The chart below shows the growth of model size in recent years, a trend that’s widely expected to continue. Many researchers are already working on trillion-plus parameter models for natural language understanding and other applications, showing an unrelenting appetite for AI compute power.

Meeting the demands of these growing models requires a combination of computational power and a ton of high-speed memory. The NVIDIA H100 Tensor Core GPU delivers on both fronts, with the speedups made possible by Transformer Engine to take AI training to the next level.

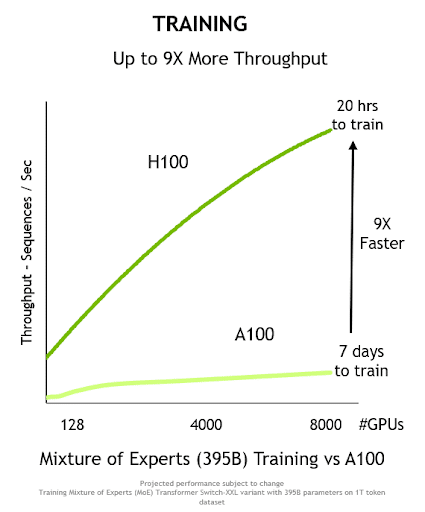

Combined, these innovations deliver higher throughput and a 9x reduction in time to train, from seven days to just 20 hours on the Mixture of Experts 395B model:

Transformer Engine can also be used for inference without any data format conversions. Previously, INT8 was the go-to precision for optimal inference performance. However, it requires that the trained networks be converted to INT8 as part of the optimization process, something the NVIDIA TensorRT inference optimizer makes easy.

Using models trained with FP8 will allow developers to skip this conversion step altogether and do inference operations using that same precision. And like INT8-formatted networks, deployments using Transformer Engine can run in a much smaller memory footprint.

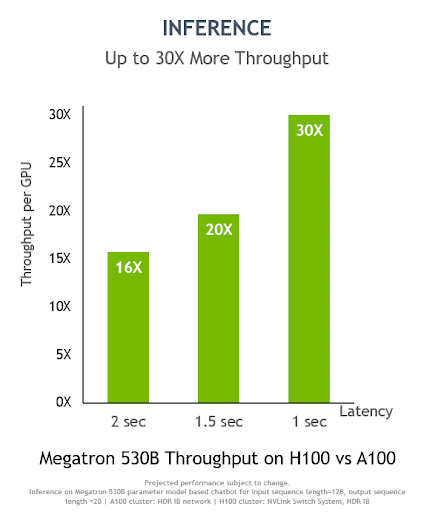

On Megatron 530B, NVIDIA H100 inference per-GPU throughput is up to 30x higher than with the NVIDIA A100 Tensor Core GPU, with a one-second response latency, showcasing it as the optimal platform for AI deployments:

Transformer Engine can also boost inference even on smaller transformer-based networks that are already highly optimized. For example, on industry-standard MLPerf Inference 3.0 benchmarks, NVIDIA H100 delivered up to 4.3x higher inference performance compared to the prior-generation NVIDIA A100 on BERT, a popular network for natural language understanding.

To learn more about the NVIDIA H100 GPU and the Hopper architecture, read this NVIDIA Technical Blog post, the Hopper architecture whitepaper and NVIDIA’s most recent results on MLPerf Inference and Training.