Rendered.ai is easing AI training for developers, data scientists and others with its platform-as-a-service for synthetic data generation, or SDG.

Training computer vision AI models requires massive, high-quality, diverse and unbiased datasets. These can be challenging and costly to obtain, especially as demand for AI grows and as more is asked of the models themselves.

The Rendered.ai platform-as-a-service helps to solve this issue by generating physically accurate synthetic data — data that’s created from 3D simulations — to train computer vision models.

“Real-world data often can’t capture all of the possible scenarios and edge cases necessary to generalize an AI model, which is where SDG becomes key for AI and machine learning engineers,” said Nathan Kundtz, founder and CEO of Rendered.ai, which is based in Bellevue, Wash., a Seattle suburb.

Register for NVIDIA GTC, which takes place March 17-21, to hear how companies like Rendered.ai are using the latest innovations in AI and graphics. Join OpenUSD Day to learn how to build generative AI-enabled 3D pipelines and tools using Universal Scene Description.

A member of the NVIDIA Inception program for cutting-edge startups, Rendered.ai has now integrated NVIDIA Omniverse Replicator into its platform. Replicator is a core extension of the Omniverse platform for developing and operating industrial metaverse applications.

Omniverse Replicator enables developers to generate labeled synthetic data for many such applications, including visual inspection, robotics and autonomous driving. It’s built on open standards for 3D workflows, including Universal Scene Description (“OpenUSD”), Material Definition Language (MDL) and PhysX.

Synthetic images generated with Rendered.ai have been used to model landscapes and vegetation for virtual worlds, detect objects in satellite imagery, and even test the viability of human oocytes, or egg cells.

With Rendered.ai tapping into the RTX-accelerated functionalities of Omniverse Replicator — such as ray tracing, domain randomization and multi-sensor simulation — computer vision engineers, data scientists and other users can quickly and easily generate synthetic data through a simple web interface in the cloud.
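For readers new to domain randomization, the idea can be sketched in a few lines of plain Python. This is only an illustration and does not use the Omniverse Replicator or Rendered.ai APIs: the `SceneSample` class, the `sample_scene` and `generate_dataset` functions, and all parameter ranges are hypothetical. Each scene gets randomly drawn lighting, camera and object-placement values, and because the generator places the object itself, the bounding-box label is known exactly with no manual annotation.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneSample:
    """One randomized scene description (hypothetical, for illustration only)."""
    light_intensity: float   # arbitrary units
    camera_height_m: float   # camera height in meters
    object_x: int            # top-left corner of the object, in pixels
    object_y: int
    object_w: int            # object size, in pixels
    object_h: int

    @property
    def bbox(self):
        """Ground-truth label (x_min, y_min, x_max, y_max), known by construction."""
        return (
            self.object_x,
            self.object_y,
            self.object_x + self.object_w,
            self.object_y + self.object_h,
        )


def sample_scene(rng, image_w=640, image_h=480):
    """Draw one scene with randomized lighting, camera and object placement."""
    w = rng.randint(20, 120)
    h = rng.randint(20, 120)
    # Keep the object fully inside the image frame.
    x = rng.randint(0, image_w - w)
    y = rng.randint(0, image_h - h)
    return SceneSample(
        light_intensity=rng.uniform(0.2, 1.5),
        camera_height_m=rng.uniform(1.0, 30.0),
        object_x=x,
        object_y=y,
        object_w=w,
        object_h=h,
    )


def generate_dataset(n, seed=0):
    """Generate n randomized scenes; a fixed seed makes the dataset reproducible."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for scene in generate_dataset(5, seed=42):
        print(scene.bbox, round(scene.light_intensity, 2))
```

In a real pipeline, each sampled description would drive a physically based renderer, and the label would be exported alongside the rendered image.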

“The data that we have to train AI is really the dominant factor on the AI’s performance,” Kundtz said. “Integrating Omniverse Replicator into Rendered.ai will enable new levels of ease and efficiency for users tapping synthetic data to train bigger, better AI models for applications across industries.”

Rendered.ai will demonstrate its platform integration with Omniverse Replicator at the Conference on Computer Vision and Pattern Recognition (CVPR), running June 18-22 in Vancouver, Canada.

Synthetic Data Generation in the Cloud

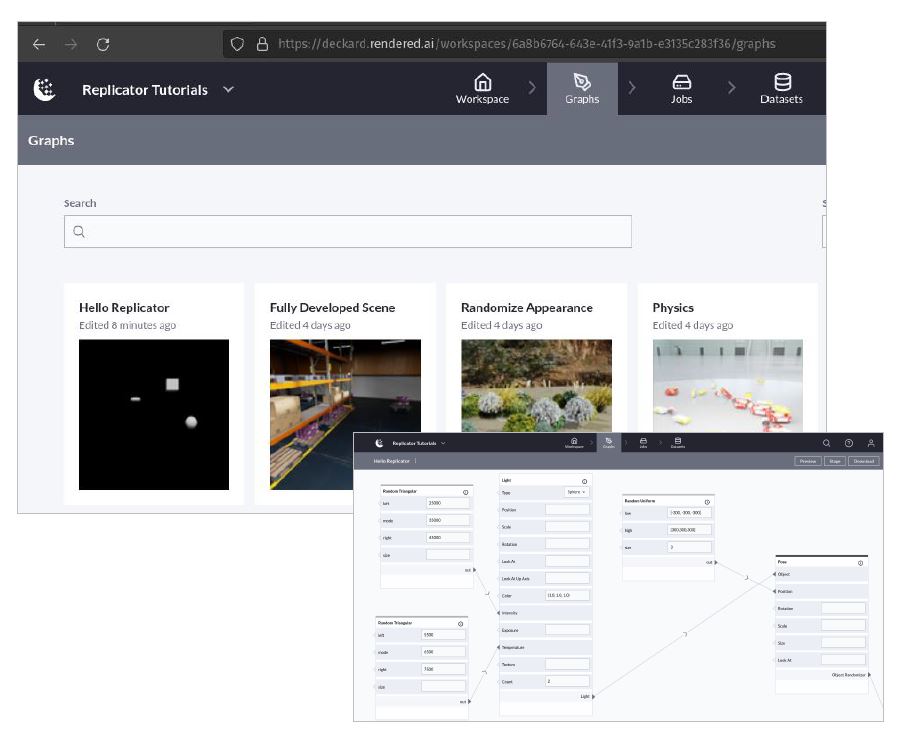

Rendered.ai, now available through AWS Marketplace, brings to the cloud a collaborative web interface for developers and teams to design SDG applications that can be easily configured by computer vision engineers and data scientists.

It’s a one-stop shop for people to share workspaces containing SDG datasets, tasks, graphs and more — all through a web browser.

Omniverse Replicator tutorials, examples and other 3D assets can now be easily tapped in a Rendered.ai channel running on AWS infrastructure. This gives developers a starting point for building their own SDG capabilities in the cloud. And the process will be especially seamless for Omniverse Replicator users who are already familiar with these tools.

Rendered.ai focuses on physically accurate synthetic data because it introduces new information to AI systems that is grounded in how physical processes work in the real world, according to Kundtz.

“In the future, every company using AI is going to have a synthetic data engineering team for physics-based modeling and domain-specific AI-model building,” he added. “It’s often not good enough to simulate something once, as complex AI tasks require training on large batches of data covering diverse scenarios — this is where synthetic data becomes key.”

Hear more from Kundtz on the NVIDIA AI Podcast.

Learn more about Omniverse Replicator and the new cloud capability on the Rendered.ai site. Sign up for a 30-day trial of the Rendered.ai Developer account using the content code “OVDEMO,” which enables access to the new Rendered.ai Channel for Omniverse.

Featured image courtesy of Rendered.ai.