Outscanding: That’s one way to describe the groundbreaking work in AI and medical imaging from researchers at top medical centers and universities.

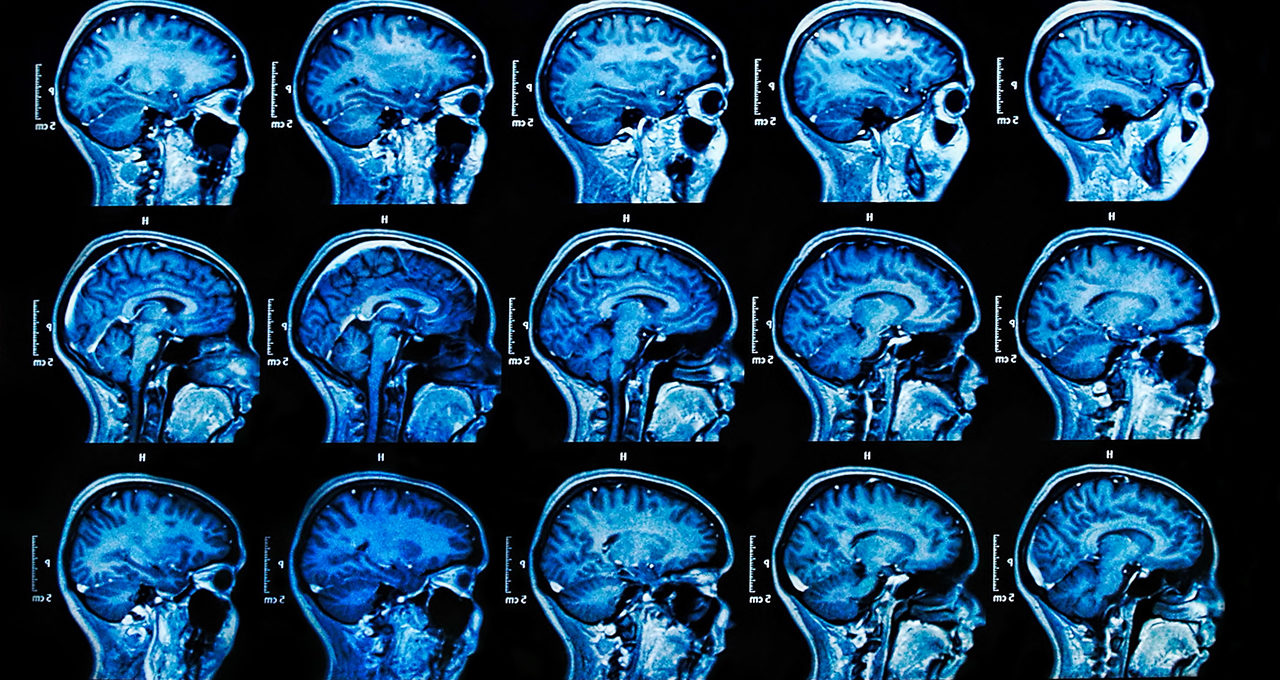

The arrival of AI in healthcare is arguably nowhere more apparent than in radiology, where machine learning is supporting workflows side by side with caregivers every step of the way.

At St. Jude Children’s Research Hospital, researchers are using AI to study the effects of cancer treatment on brain structures.

Data scientists at the University of California, San Francisco, are helping clinical teams automate parts of their radiology workflow with machine learning.

In Shanghai, radiologists are using AI to help identify bone fractures and multiple diseases from single images.

Learn more about the incredible research and technology advancing medical imaging worldwide below. And register for the next GPU Technology Conference, running online Nov. 8-11, to hear from healthcare experts using AI and accelerated computing around the globe.

St. Jude Researchers Extract Data to Find Cures With NVIDIA DGX

Apart from treating and curing childhood diseases, the team at St. Jude Children’s Research Hospital drives research into treatments for pediatric brain tumors.

Medulloblastoma is the most common malignant brain tumor diagnosed in children. With extensive therapy, the average survival rate is between 70 and 75 percent. However, the therapy, while treating the cancer, can cause other problems such as long-term neurocognitive deficits.

It can also cause short-term brain damage called posterior fossa syndrome, which leads to problems with language, emotions and movement that can last for weeks or years.

Zhaohua Lu, a biostatistician at St. Jude Children’s Research Hospital, is using neuroimaging data acquired after radiotherapy to compare the neurocognitive outcomes of patient subgroups with different brain structures affected by the cancer treatment.

This research into structural brain changes following medulloblastoma treatment will enable earlier prognosis and more targeted interventions.

“We use neural imaging data to distinguish the patients into subgroups according to their MRI measurements. So the MRI measurement at baseline after radiotherapy is a quite promising predictor for the neurocognitive outcomes 36 months later,” said Lu.

Using an NVIDIA DGX A100 for the study, Lu and the team developed a 3D convolutional autoencoder to extract features from the neuroimaging data. In a future study, the team will investigate potential confounders like age, gender and treatment intensity to build a more rigorous model that further supports their hypothesis.
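For readers unfamiliar with the technique, the following is a minimal sketch of what a 3D convolutional autoencoder for feature extraction can look like. It is not the St. Jude team's model: the use of PyTorch, the layer sizes, the 64-dimensional latent vector and the 32×32×32 input volume are all assumptions chosen for illustration.

```python
import torch
from torch import nn


class ConvAutoencoder3D(nn.Module):
    """Illustrative 3D convolutional autoencoder (hypothetical architecture)."""

    def __init__(self, latent_dim: int = 64):
        super().__init__()
        # Encoder: two strided 3D convolutions halve each spatial axis twice
        # (32 -> 16 -> 8), then a linear layer compresses to the latent vector.
        self.encoder = nn.Sequential(
            nn.Conv3d(1, 8, kernel_size=3, stride=2, padding=1),
            nn.ReLU(),
            nn.Conv3d(8, 16, kernel_size=3, stride=2, padding=1),
            nn.ReLU(),
            nn.Flatten(),
            nn.Linear(16 * 8 * 8 * 8, latent_dim),
        )
        # Decoder: mirror of the encoder, using transposed convolutions
        # to upsample back to the input resolution (8 -> 16 -> 32).
        self.decoder = nn.Sequential(
            nn.Linear(latent_dim, 16 * 8 * 8 * 8),
            nn.Unflatten(1, (16, 8, 8, 8)),
            nn.ConvTranspose3d(16, 8, kernel_size=3, stride=2,
                               padding=1, output_padding=1),
            nn.ReLU(),
            nn.ConvTranspose3d(8, 1, kernel_size=3, stride=2,
                               padding=1, output_padding=1),
        )

    def forward(self, volume):
        features = self.encoder(volume)          # compact feature vector
        reconstruction = self.decoder(features)  # same shape as the input
        return reconstruction, features


# Two random single-channel volumes standing in for preprocessed scans.
model = ConvAutoencoder3D(latent_dim=64)
volumes = torch.randn(2, 1, 32, 32, 32)
with torch.no_grad():
    reconstruction, features = model(volumes)
```

In a setup like this, the network would be trained to minimize reconstruction error, and the `features` vector for each scan would then serve as input to downstream analysis such as grouping patients or predicting outcomes.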

Driving UCSF Research to Clinical Radiology Application With NVIDIA Clara

As demand for imaging and radiologist efforts increases, clinical teams can benefit from university research by bringing machine learning into every step of the radiology workflow — from data acquisition and inference to review and clinical practice.

One application of machine learning in this workflow is detecting hip fractures in patients for triage. The algorithm uses object detection to locate the left and right hips, classifies each as fractured or not fractured, and identifies whether there is hardware in the hip. When a fracture is detected, the case is moved to the top of the worklist for faster review.
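The triage step can be pictured as a priority queue sitting behind the classifier. The sketch below is an illustration only, not UCSF's implementation: the class names, fields and study IDs are hypothetical, and it assumes the classifier's per-hip results are already available as simple flags.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count


@dataclass
class HipFinding:
    """Hypothetical per-hip classifier output."""
    fractured: bool
    has_hardware: bool = False


@dataclass(order=True)
class WorklistEntry:
    priority: int   # 0 = suspected fracture, 1 = routine
    arrival: int    # tie-breaker that preserves arrival order
    study_id: str = field(compare=False)


class TriageWorklist:
    """Reading worklist in which suspected fractures are reviewed first."""

    def __init__(self):
        self._heap = []
        self._arrivals = count()

    def add(self, study_id: str, left: HipFinding, right: HipFinding) -> None:
        # A fracture on either side moves the study ahead of routine cases.
        # The hardware flag is recorded by the classifier but does not
        # change priority in this sketch.
        priority = 0 if (left.fractured or right.fractured) else 1
        entry = WorklistEntry(priority, next(self._arrivals), study_id)
        heapq.heappush(self._heap, entry)

    def next_study(self) -> str:
        return heapq.heappop(self._heap).study_id


worklist = TriageWorklist()
worklist.add("study-A", HipFinding(False), HipFinding(False))
worklist.add("study-B", HipFinding(False, has_hardware=True), HipFinding(False))
worklist.add("study-C", HipFinding(True), HipFinding(False))

review_order = [worklist.next_study() for _ in range(3)]
print(review_order)  # ['study-C', 'study-A', 'study-B']
```

The arrival counter keeps routine studies in first-come, first-served order, so only flagged cases jump the queue.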

“We were once discussing the implementation of inserting an algorithm into a clinical workflow and the physician told us that ‘if we could do it in two clicks, that would be too slow.’ It needed to be one click for the physicians to get into the data,” said Beck Olson, a data scientist at the UCSF Center for Intelligent Imaging. “These workflows are razor-thin in terms of efficiency and we need to seamlessly integrate these results.”

The UCSF framework routes the data through radiologists’ existing workflows using the NVIDIA Clara Deploy software development kit. The data then goes to the inference model, and quantification is performed using custom operators running on the NVIDIA Clara Train SDK.

The results are then sent into the open-source XNAT platform for clinical review. Clinicians are able to provide feedback to data scientists, who in turn retrain the machine learning model, which can then be used to update the inference model NVIDIA Clara Deploy is using — providing a seamless experience for radiologists.

Modality Manufacturer Uses AI to Identify Multiple Diseases From a Single Image

In trauma patients, multiple rib fractures can indicate how severe their injuries are — a key indicator of potential respiratory failure and overall mortality. Radiologists have to dedicate time and effort to catch these injuries before it’s too late.

Shanghai-based United Imaging Intelligence, a member of the NVIDIA Inception acceleration platform, uses AI to make medical devices more efficient, optimize clinical workflows and facilitate advanced research.

“We believe the proper way to see AI is making it a ‘best friend’ for healthcare professionals and empower them to be better in doing their jobs — but not replace them,” said Terrence Chen, CEO of UII America.

With AI, UII’s technologies can analyze and detect multiple diseases from a single image. From a chest CT scan, its uAI Portal can identify rib fractures, lung nodules and lymphadenopathy, as well as other diseases like pneumonia, COVID-19, esophageal cancer, spinal tumors and breast masses and lesions.

The uAI technologies run on the NVIDIA JetPack SDK and Jetson embedded modules including Jetson TX2, Jetson Nano and Xavier NX. With NVIDIA TensorRT, efficiency is improved by up to 40 percent and GPU memory requirements are reduced by 50 percent.

Find these full talk replays from the NVIDIA GPU Technology Conference, and hundreds of other free sessions, at NVIDIA On-Demand.

Subscribe to NVIDIA healthcare news here.