Known around the world for its broad range of personal computers, phones, servers, networking and services, Lenovo also has years of experience in designing and delivering high-performance computing and AI systems.

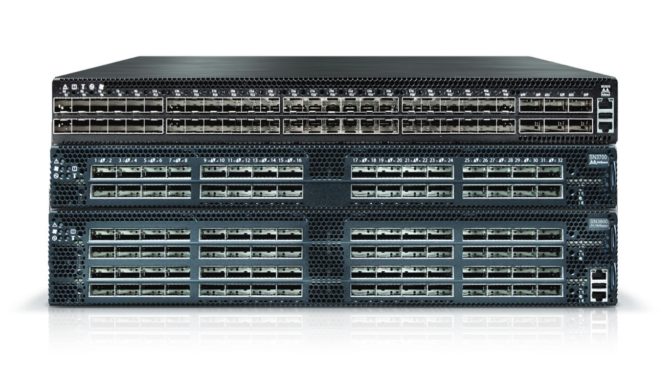

NVIDIA and Mellanox have been long-time collaborators with Lenovo and now this relationship is expanding in a big way. This fall, Lenovo will begin providing NVIDIA Mellanox Spectrum Ethernet switches to its customers in selected integrated solutions, joining the NVIDIA Quantum InfiniBand switches already offered by the company.

These are the fastest, most efficient switches for end-to-end InfiniBand and Ethernet networking, built for any type of compute and storage infrastructure serving data-intensive applications, scientific simulations, AI and more.

The Spectrum Ethernet switches are the most advanced on the market and are optimized for high-performance computing, AI, cloud and other enterprise-class systems. They offer connectivity from 10 to 400 gigabits per second and come in designs tailored for top-of-rack, storage cluster, spine and superspine uses.
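
To put that 10-to-400 gigabit range in perspective, here is a rough back-of-the-envelope sketch of how long it would take to move a dataset across a single link at different speeds. The 1 TB dataset size and the 100 Gb/s midpoint are illustrative assumptions, and the arithmetic ignores protocol overhead, congestion and storage limits, so real transfers will be slower.

```python
def transfer_seconds(size_gigabytes: float, link_gbps: float) -> float:
    """Ideal time to move size_gigabytes over one link_gbps link.

    Assumes decimal units (1 GB = 8e9 bits) and zero protocol overhead.
    """
    bits = size_gigabytes * 8e9
    return bits / (link_gbps * 1e9)


DATASET_GB = 1000  # hypothetical 1 TB dataset, chosen only for illustration

for gbps in (10, 100, 400):
    seconds = transfer_seconds(DATASET_GB, gbps)
    print(f"{gbps:>3} Gb/s: {seconds:6.1f} s")
```

Under these idealized assumptions, the same dataset that needs 800 seconds at 10 Gb/s needs 20 seconds at 400 Gb/s.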

All Spectrum Ethernet switches feature a fully shared buffer to support fair bandwidth allocation and predictably low latency, as well as traffic flow prioritization and optimization technology. They also offer What Just Happened (the most useful Ethernet switch telemetry technology on the market), which provides faster and easier network monitoring, troubleshooting and problem resolution.

The Spectrum switches from Lenovo will include Cumulus Linux, the leading Linux-based network operating system, recently acquired by NVIDIA.

All the Best Networking for High-Performance and AI Data Centers

With a broad portfolio of NVIDIA Mellanox ConnectX adapters, Mellanox Quantum InfiniBand switches, Mellanox Spectrum Ethernet switches and LinkX cables and transceivers, Lenovo customers can select from the fastest and most advanced networking for their data center compute and storage infrastructures.

InfiniBand provides the most efficient data throughput and lowest latency for keeping hungry CPUs and GPUs well fed with data, as well as connecting high-speed file and block storage for computation and checkpointing. It also offers in-network computing engines that enable the network to process data in transit, including data reduction and data aggregation engines, Message Passing Interface (MPI) acceleration engines and more. This speeds up calculations and increases performance for high-performance computing and AI applications.
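
The idea behind in-network data reduction can be illustrated with a toy model. In the sketch below, each "switch" sums the vectors arriving from its downlinks and forwards a single combined vector upward, so the data shrinks at every level of the tree instead of being gathered on one host. This is a conceptual simulation only: the function names, the `radix` parameter and the eight-host example are assumptions made for illustration, not a description of how the switch hardware or any NVIDIA API works.

```python
from typing import List

Vector = List[float]


def reduce_vectors(vectors: List[Vector]) -> Vector:
    """Element-wise sum: the reduction one switch applies to its inputs."""
    return [sum(column) for column in zip(*vectors)]


def in_network_reduce(host_vectors: List[Vector], radix: int) -> Vector:
    """Aggregate level by level, as a tree of switches with `radix`
    downlinks each would. Returns the single vector at the tree root."""
    if radix < 2:
        raise ValueError("radix must be at least 2")
    if not host_vectors:
        raise ValueError("need at least one host vector")
    level = host_vectors
    while len(level) > 1:
        # Each group of up to `radix` vectors is combined by one switch.
        level = [
            reduce_vectors(level[i:i + radix])
            for i in range(0, len(level), radix)
        ]
    return level[0]


# Eight hypothetical hosts, each contributing a two-element vector.
hosts = [[float(h), float(h) * 2] for h in range(1, 9)]
result = in_network_reduce(hosts, radix=4)
print(result)  # [36.0, 72.0]
```

In a full collective operation the root result would then be sent back down to every host. The point of the model is that each uplink carries one reduced vector rather than every host's raw data.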

For high-performance and AI data centers using Ethernet for storage or management connectivity, the Spectrum switches provide an ideal network infrastructure. InfiniBand data centers can seamlessly connect to an Ethernet fabric via the Mellanox Skyway 100G and 200G InfiniBand-to-Ethernet gateways.

Expanding Into Private and Hybrid Cloud

Lenovo also will use Spectrum Ethernet switches as part of its enterprise offerings, such as private and hybrid cloud, hyperconverged infrastructure, Microsoft Azure and SAP HANA solutions.

Additionally, Lenovo ThinkAgile products will qualify NVIDIA Spectrum switches to provide top-of-rack and rack-to-rack connectivity. This lineup includes:

- Lenovo ThinkAgile SX for Microsoft Azure Stack for hybrid cloud

- Lenovo ThinkAgile CP series for enterprise private cloud

- Lenovo ThinkAgile HX HCI for SAP HANA

Ongoing Innovation

Lenovo and NVIDIA have long collaborated in creating faster, denser, more efficient solutions for high-performance computing, AI and, most recently, private clouds.

- GPU servers: Lenovo offers a full portfolio of ThinkSystem servers with NVIDIA GPUs to deliver the fastest compute times for high-performance and AI workloads. These servers can be connected with Mellanox ConnectX adapters using InfiniBand or Ethernet connectivity as needed.

- Liquid cooling: Lenovo Neptune water-cooled server technology offers faster, quieter, more efficient cooling. This includes the ability to cool server CPUs, GPUs, InfiniBand adapters and entire racks using water instead of fan-blown air to carry away heat more quickly.

- InfiniBand speed: Lenovo was one of the earliest server vendors to implement new generations of InfiniBand connectivity, including EDR (100Gb/s) and HDR (200Gb/s), both of which can be water-cooled, and the companies continue to innovate around InfiniBand interconnects.

- Network topologies: Lenovo and Mellanox were first to build an InfiniBand Dragonfly+ topology supercomputer, at the University of Toronto. Designed from the outset as a software-defined network, InfiniBand supports many network topologies, including Fat Tree, Torus and Dragonfly+, which provide a rich set of cost/performance/scale-optimized options for data center deployments.
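
To give a feel for the cost/scale trade-off that topology choice involves, the sketch below sizes a textbook three-tier k-ary fat tree built entirely from identical switches with `k` ports. The formulas are the standard ones for that idealized, non-blocking design, and the port counts used in the example are hypothetical. It does not model Torus or Dragonfly+, and it is not meant to describe how any particular Lenovo or NVIDIA cluster is laid out.

```python
def fat_tree_size(k: int) -> dict:
    """Switch and host counts for a three-tier k-ary fat tree.

    k is the port count of every switch and must be even. Each of the
    k pods holds k/2 edge and k/2 aggregation switches; (k/2)^2 core
    switches join the pods; each edge switch serves k/2 hosts.
    """
    if k < 2 or k % 2 != 0:
        raise ValueError("k must be an even integer >= 2")
    half = k // 2
    return {
        "pods": k,
        "edge_switches": k * half,
        "aggregation_switches": k * half,
        "core_switches": half * half,
        "hosts": k * half * half,  # equals k^3 / 4
    }


for ports in (4, 40):  # hypothetical switch port counts
    size = fat_tree_size(ports)
    switches = (
        size["edge_switches"]
        + size["aggregation_switches"]
        + size["core_switches"]
    )
    print(f"k={ports}: {size['hosts']} hosts, {switches} switches")
```

Because the host count grows with the cube of the port count while the switch count grows with its square, higher-radix switches connect far more hosts per switch, which is one reason alternative topologies are weighed against fat trees at large scale.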

Learn More

To learn more about NVIDIA Mellanox interconnect products and Lenovo’s support for any type of compute, storage or management traffic for high-performance, AI, private/hybrid cloud and hyperconverged infrastructure, check out the following resources.

- NVIDIA Mellanox Spectrum Ethernet Switches

- NVIDIA Mellanox Quantum Switches

- Lenovo HPC solutions

- Lenovo Neptune water-cooled technology

- Lenovo Intelligent Computing Orchestration for AI/HPC with NVIDIA GPUs

- NVIDIA AI and Virtualization GPUs for Lenovo ThinkSystem Servers

- Lenovo ConnectX-6 HDR InfiniBand Adapters

- NVIDIA Mellanox blog: Liquid-Cooled HDR InfiniBand Adapters for Lenovo ThinkSystem SD650

- NVIDIA Cumulus Linux NOS

Feature image by Gerd Altmann.