The path to autonomous vehicle deployment is accelerating through the Omniverse.

During his opening keynote at GTC, NVIDIA founder and CEO Jensen Huang announced the next generation of autonomous vehicle simulation, NVIDIA DRIVE Sim, now powered by NVIDIA Omniverse.

DRIVE Sim enables high-fidelity simulation by tapping into NVIDIA’s core technologies to deliver a powerful, cloud-based computing platform. It can generate datasets to train the vehicle’s perception system and provide a virtual proving ground to test the vehicle’s decision-making process while accounting for edge cases. The platform can be connected to the AV stack in software-in-the-loop or hardware-in-the-loop configurations to test the full driving experience.

DRIVE Sim on Omniverse is a major step forward as NVIDIA transitions the foundation for autonomous vehicle simulation from a game engine to a simulation engine.

This shift to simulation architected specifically for self-driving development has required significant effort, but brings an array of new capabilities and opportunities.

Enter the Omniverse

Creating a purpose-built autonomous vehicle simulation platform is not a simple undertaking. Game engines are powerful tools that provide incredible capabilities; however, they’re designed to build games, not scientific, physically accurate, repeatable simulations.

Designing the next generation of DRIVE Sim required a new approach. This new simulator had to be repeatable with precise timing, easily scale across GPUs and server nodes, simulate sensor feeds with physical accuracy and act as a modular and extensible platform.

NVIDIA Omniverse is the confluence of almost every core technology developed by NVIDIA. And DRIVE Sim takes advantage of the company’s expertise in graphics, high performance computing, AI and hardware design. Combining these capabilities provides a technology platform that is perfect for autonomous vehicle simulation.

Specifically, Omniverse provides a platform that was designed from the ground up to support multi-GPU computing. It incorporates a physically accurate, ray-tracing renderer based on NVIDIA RTX technology.

NVIDIA Omniverse also includes “Kit,” a scalable and extensible simulation framework for building interactive 3D applications and microservices. Using Kit over the last year, NVIDIA has implemented the DRIVE Sim core simulation engine in a way that supports repeatable simulation with precise control over all processes.

Timing and Repeatability

Autonomous vehicle simulation can only be an effective development tool if scenarios are repeatable and timing is accurate.

NVIDIA Omniverse schedules and manages all sensor and environment rendering functions to ensure repeatability without loss of accuracy. It does this across GPUs and across nodes, giving DRIVE Sim the ability to handle detailed environments and test vehicles with complex sensor suites. It can also manage such workloads at slower or faster than real time while still generating repeatable results.

Not only does the platform enable this flexibility and accuracy, it does so in a way that’s scalable, so developers can run fleets of vehicles with various sensor suites at large scale and at the highest levels of fidelity.
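
To make the idea of repeatability independent of wall-clock speed concrete, here is a minimal Python sketch. It is not DRIVE Sim or Omniverse code: the function `run_simulation`, its parameters and the toy vehicle model are all assumptions made for illustration. The point is that simulated time is derived from the step count and randomness comes from a seeded generator, so pacing the loop faster or slower than real time does not change the result.

```python
import random
import time


def run_simulation(seed, steps, dt=0.01, time_scale=None):
    """Advance a toy vehicle with a fixed timestep (illustrative only).

    time_scale=None runs as fast as possible. Otherwise each step is paced
    so simulated time advances at roughly time_scale x wall-clock time.
    """
    rng = random.Random(seed)  # private, seeded RNG: no hidden global state
    position, velocity = 0.0, 10.0
    trace = []
    for step in range(steps):
        # Simulated time comes from the step count, never from the wall clock.
        sim_time = step * dt
        accel = rng.uniform(-1.0, 1.0)  # stand-in for a stochastic disturbance
        velocity += accel * dt
        position += velocity * dt
        trace.append((sim_time, position, velocity))
        if time_scale is not None:
            time.sleep(dt / time_scale)  # pacing only; does not affect state
    return trace


if __name__ == "__main__":
    fast = run_simulation(seed=42, steps=50)
    paced = run_simulation(seed=42, steps=50, time_scale=100.0)
    print("identical traces:", fast == paced)
```

In this sketch the same seed and step count always produce the same trace, whether the loop runs unthrottled or is paced against the wall clock.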

Physically Accurate Sensors

In addition to accurately recreating real-world driving conditions, the simulation environment must also render vehicle sensor data in the exact same way cameras, radars and lidars take in data from the physical world.

With NVIDIA RTX technology, DRIVE Sim is able to render physically accurate sensor data in real time. Ray tracing provides realistic lighting by simulating the physical properties of visible and non-visible waveforms. And the NVIDIA Omniverse RTX renderer coupled with NVIDIA RTX GPUs enables ray tracing at real-time frame rates.

The capability to simulate light in real time has significant benefits for autonomous vehicle simulation. It makes it possible to recreate lighting environments that can be virtually impossible to capture using rasterization — from the reflections off a tanker truck to the shadows inside a dim tunnel.

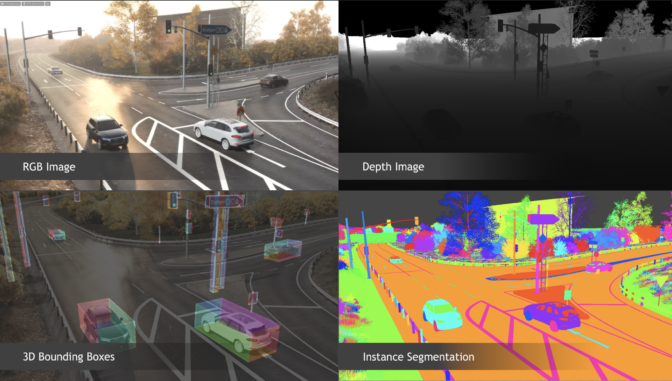

Generating physically accurate sensor data is especially powerful for building datasets to train AI-based perception networks, because the ground-truth data is output together with the virtual sensor data. DRIVE Sim includes tools for advanced dataset creation, including a powerful Python scripting interface and domain randomization tools.

Using this synthetic data in the DNN training process saves the cost of collecting and labeling real-world data, and speeds up iteration for streamlined autonomous vehicle deployment.
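
As a rough illustration of domain randomization, the Python sketch below samples scene parameters from a seeded generator and records a ground-truth label with each sample. It does not use the DRIVE Sim scripting interface; `SceneSample`, `sample_scene`, `generate_dataset` and the parameter ranges are hypothetical names and values chosen for the example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneSample:
    sun_elevation_deg: float
    fog_density: float
    vehicle_color: tuple  # (r, g, b), each in [0, 1)
    vehicle_bbox: tuple   # ground-truth label: (x, y, width, height) in pixels


def sample_scene(rng, image_size=(1920, 1080)):
    """Draw one randomized scene and its ground-truth bounding box."""
    img_w, img_h = image_size
    box_w = rng.randint(40, 400)
    box_h = rng.randint(30, 300)
    # Place the box so that it always lies fully inside the image.
    x = rng.randint(0, img_w - box_w)
    y = rng.randint(0, img_h - box_h)
    return SceneSample(
        sun_elevation_deg=rng.uniform(0.0, 90.0),
        fog_density=rng.uniform(0.0, 0.3),
        vehicle_color=(rng.random(), rng.random(), rng.random()),
        vehicle_bbox=(x, y, box_w, box_h),
    )


def generate_dataset(seed, count):
    """Generate a reproducible list of randomized scenes."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(count)]


if __name__ == "__main__":
    for sample in generate_dataset(seed=7, count=3):
        print(sample)
```

Because the label is produced by the same process that defines the scene, no manual annotation step is needed, and reusing the seed regenerates the same dataset.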

Modular and Extensible

As a modular, open and extensible platform, DRIVE Sim provides developers the ultimate flexibility and efficiency in simulation testing.

DRIVE Sim on Omniverse allows different components of the simulator to be run to support different use cases. One group of engineers can run just the perception stack in simulation. Another can focus on the planning and control stack by simulating scenarios based on ground-truth object data (thus bypassing the perception stack).

This modularity significantly cuts down on development time by allowing developers to focus on the task at hand, while ensuring that the entire team is using the same tools, scenarios, models and assets in simulation for consistent results.
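
One way to picture this modularity is a planner that accepts objects from an interchangeable source. The Python sketch below is an assumption-laden toy, not the DRIVE Sim architecture: `TrackedObject`, `ground_truth_source`, `perception_source` and `plan_speed` are hypothetical names, and the frame layout is invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class TrackedObject:
    distance_m: float  # distance ahead of the ego vehicle
    speed_mps: float   # speed of the object


# An object source maps one simulation frame to a list of objects.
ObjectSource = Callable[[dict], List[TrackedObject]]


def ground_truth_source(frame):
    """Bypass perception: read object state directly from the simulator."""
    return [TrackedObject(**obj) for obj in frame["ground_truth"]]


def perception_source(frame):
    """Stand-in for a perception stack; here detections are (distance, speed) pairs."""
    return [TrackedObject(d, s) for d, s in frame["detections"]]


def plan_speed(objects, cruise_mps=25.0, min_gap_m=30.0):
    """Toy planner: match the nearest vehicle's speed if it is within min_gap_m."""
    ahead = [o for o in objects if o.distance_m < min_gap_m]
    if not ahead:
        return cruise_mps
    lead = min(ahead, key=lambda o: o.distance_m)
    return min(cruise_mps, lead.speed_mps)


def run_planner(frame, source: ObjectSource):
    """Run the same planner against whichever object source is plugged in."""
    return plan_speed(source(frame))


if __name__ == "__main__":
    frame = {
        "ground_truth": [{"distance_m": 20.0, "speed_mps": 15.0}],
        "detections": [(20.0, 15.0)],
    }
    print(run_planner(frame, ground_truth_source))
    print(run_planner(frame, perception_source))
```

The planning team can test against `ground_truth_source` while the perception team works on its own stack, and both exercise the same scenario data.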

Using the NVIDIA Omniverse Kit SDK, DRIVE Sim allows developers to build custom models, 3D content and validation tools or to interface with other simulations. Users can create their own plugins or choose from a rich library of vehicle, sensor and traffic plugins provided by DRIVE Sim ecosystem partners. This flexibility enables users to customize DRIVE Sim for their unique use case and tailor the simulation experience to their development and validation needs.
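
The general pattern behind a plugin library can be sketched in a few lines of Python. This is not the Omniverse Kit extension API; `register_plugin`, `create_plugin` and `LidarModel` are hypothetical names used only to show how a registry lets users add their own models alongside provided ones.

```python
from typing import Callable, Dict

# Maps a plugin name to the factory that builds it.
_PLUGINS: Dict[str, Callable[..., object]] = {}


def register_plugin(name):
    """Class or function decorator that adds a factory to the registry."""
    def decorator(factory):
        if name in _PLUGINS:
            raise ValueError(f"plugin {name!r} is already registered")
        _PLUGINS[name] = factory
        return factory
    return decorator


def create_plugin(name, **config):
    """Instantiate a registered plugin with user-supplied configuration."""
    try:
        factory = _PLUGINS[name]
    except KeyError:
        raise KeyError(f"unknown plugin {name!r}") from None
    return factory(**config)


@register_plugin("lidar")
class LidarModel:
    """Example user-defined sensor model (illustrative parameters)."""

    def __init__(self, channels=64, range_m=200.0):
        self.channels = channels
        self.range_m = range_m


if __name__ == "__main__":
    lidar = create_plugin("lidar", channels=128)
    print(type(lidar).__name__, lidar.channels, lidar.range_m)
```

A registry like this keeps the simulator core unaware of specific sensor or vehicle models, so new ones can be added without modifying it.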

DRIVE Sim on Omniverse will be available to developers via an early access program this summer. To see how Omniverse is already changing the automotive world, watch our GTC 21 sessions.

Learn more about DRIVE Sim and accelerate the development of safer, more efficient transportation today.