At SIGGRAPH, the world’s largest gathering of computer graphics experts, NVIDIA announced significant updates for creators and developers using NVIDIA Omniverse, a real-time 3D design collaboration and world simulation platform.

The next wave of Omniverse worlds is moving to the cloud — and new features for Omniverse Kit, Nucleus, and the Audio2Face and Machinima apps allow users to better build physically accurate digital twins and realistic avatars, and redefine how virtual worlds are created and experienced.

Individuals and organizations across industries can substantially accelerate complex 3D graphics workflows with Omniverse. Whether they are engineers, researchers, animators or designers, Omniverse users around the world have created vast virtual worlds and realistic simulations using the platform’s core rendering, physics and AI technologies.

The Omniverse community accesses the platform using NVIDIA RTX-enabled Studio laptops, workstations and OVX servers. With the shift of Omniverse to the cloud, users can also work virtually on non-RTX systems like Macs and Chromebooks.

Plus, NVIDIA Omniverse Avatar Cloud Engine (ACE) launched today — along with new Omniverse Connectors and applications — enabling users to easily build and customize virtual assistants and digital humans.

Bringing Real-Time, Real-World Accuracy to 3D Virtual Worlds

Omniverse users can enhance interaction and flexibility in USD workflows with OmniLive, a major development that delivers non-destructive live workflows at increased speed and performance to those who are connecting and collaborating among third-party applications.

Live layers will allow users to know who’s in a session with them — so teams can easily assign ownership and have an effortless way to save, discard and pause a collaborative session. OmniLive also enables custom versions of USD to live-sync seamlessly, making Omniverse Connectors much easier to develop.
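The idea of a non-destructive live layer can be illustrated with a small toy model. The sketch below is not the USD or OmniLive API. It assumes only the general layering principle that a stronger layer's opinion overrides a weaker one without modifying it, and the names `base_layer`, `live_layer` and `compose` are hypothetical.

```python
from collections import ChainMap

# Toy model of non-destructive layering (NOT the USD or OmniLive API).
# Each "layer" maps an attribute path to a value, i.e. an "opinion".
base_layer = {"/World/Chair.color": "red", "/World/Chair.height": 0.9}
live_layer = {}  # hypothetical session layer that receives live edits


def compose(*layers_strongest_first):
    """Resolve attributes; the first layer holding an opinion wins."""
    return dict(ChainMap(*layers_strongest_first))


# A collaborator's edit is written to the live layer only.
live_layer["/World/Chair.color"] = "blue"
merged = compose(live_layer, base_layer)

# Discarding the session amounts to clearing the live layer;
# the base layer was never touched.
live_layer.clear()
discarded = compose(live_layer, base_layer)

print(merged)
print(discarded)
```

Because edits never touch `base_layer`, saving or discarding a session becomes a decision about one layer rather than an undo of many individual changes.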

Omniverse users can also experience the next wave of AI and industrial simulation with the latest updates to NVIDIA PhysX, an advanced real-time engine for simulating realistic physics.

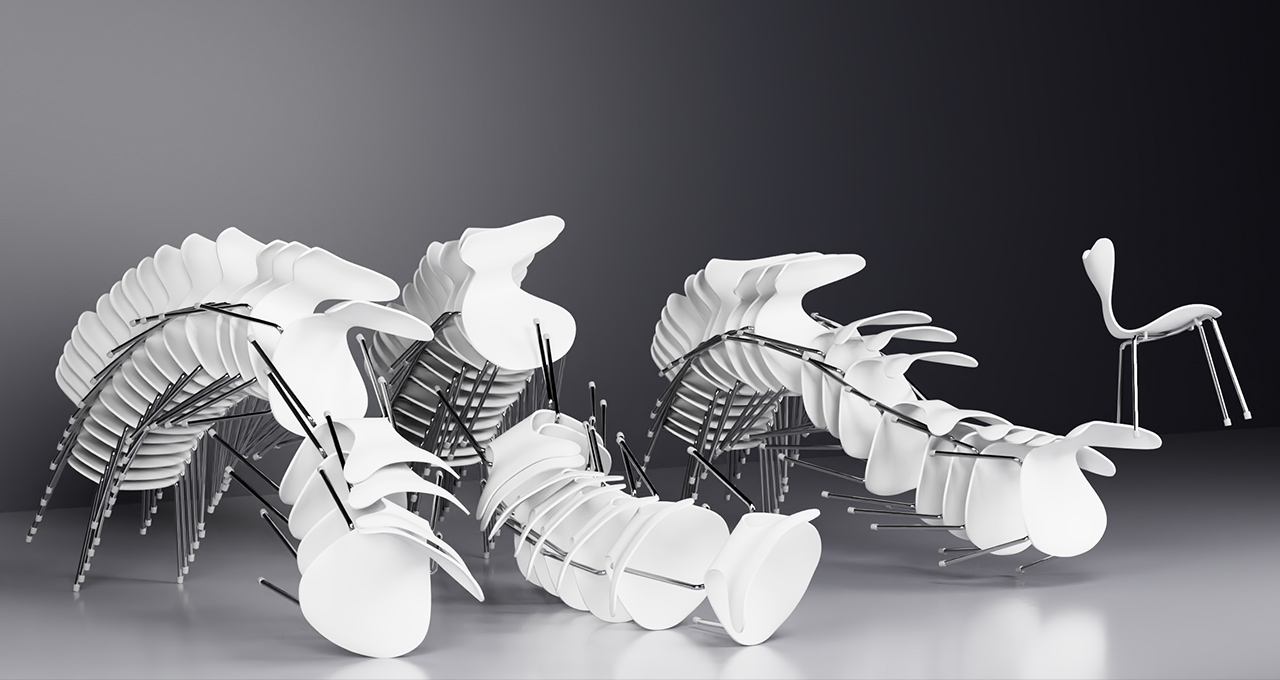

The NVIDIA PhysX updates allow Omniverse users to build some of the world’s most advanced physics solvers, enabling 3D virtual worlds to obey the laws of physics. New features include scalable signed-distance-field (SDF) simulation, soft-body simulation, particle-cloth simulation for cloth manipulation and soft-contact models.
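For readers unfamiliar with signed distance fields, the following minimal sketch shows the underlying idea for the simplest possible shape, a sphere. It is an illustration only and does not use or represent the PhysX API. The function names are hypothetical.

```python
import math


def sphere_sdf(point, center, radius):
    """Signed distance from a point to a sphere's surface.

    Negative inside the sphere, zero on the surface, positive outside.
    """
    return math.dist(point, center) - radius


def penetration_depth(point, center, radius):
    """How far a point has sunk below the surface (0.0 if it is outside)."""
    return max(0.0, -sphere_sdf(point, center, radius))


origin = (0.0, 0.0, 0.0)
outside = sphere_sdf((2.0, 0.0, 0.0), origin, 1.0)
inside = sphere_sdf((0.5, 0.0, 0.0), origin, 1.0)
depth = penetration_depth((0.5, 0.0, 0.0), origin, 1.0)

print(outside, inside, depth)
```

A single distance query answers both "is there contact?" (the sign) and "how deep?" (the magnitude), which is why SDFs are a common collision representation for detailed shapes.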

Additional Omniverse Kit updates include:

- Improvements for synthetic data generation. This includes an improved content library with more assets to help prepare scenes for training, as well as more USD and SimReady assets.

- Viewport 2.0, which is the new extendable viewport for all Kit-based applications. With Viewport 2.0, users can create custom user interfaces and use multiple viewport windows at once.

- Improved layout tools, which allow for better manipulation of content in the viewport. Users can align, snap, randomize and use other features to quickly lay out vast quantities of content, improving the stage-generation process.

- New review tools, which give users the ability to measure and convey changes to team members during collaborative sessions.

- ActionGraph improvements. New direct-in-viewport buttons and other improvements allow users to build configurators directly in their viewport with ActionGraph alone.

Omniverse Expands With New Connectors and 3D Assets

Omniverse now includes a new customizable viewport, improved user interfaces, enhanced review tools and major releases to its free 3D asset library. Users can access several free USD scenes and content packs to build virtual worlds faster than ever.

Additionally, NVIDIA is developing several new Omniverse Connectors, including Autodesk Alias and Autodesk Civil 3D, Blender, Open Geospatial Consortium, Siemens JT, SimScale and Unity. New Connectors now available in beta are PTC Creo, SideFX Houdini and Visual Components.

Plus, partners are continuously releasing new updates to the Omniverse-ready connections, including:

- Maxon’s Redshift Hydra Renderer as well as OTOY’s OctaneRender Hydra plugin and Omniverse Connector, which let artists and designers use their preferred renderers directly in Omniverse.

- Ipolog’s spinoff SyncTwin, which offers a new suite of tools and services built on Omniverse to enable development of industrial digital twins.

- Prevu3D, a solution that converts 3D point clouds into high-quality and interactive meshes for digital twins, now with USD support enabling workflows in Omniverse.

- Lightning AI, which built a machine learning app that uses Omniverse Replicator to generate synthetic data.

Bringing Animation and Avatars to the Next Level With AI

With AI, Omniverse users can quickly bring 3D characters to life with realistic facial features and movements.

Creators can use the Omniverse Audio2Face app to simplify animation of a 3D character by matching virtually any voice-over track. Audio2Face is powered by an AI model that can create facial animations based solely on voices. And with its latest updates, users can now direct the emotions of avatars over time. This means creators can easily mix key emotions such as joy, amazement, anger and sadness.

Additional Audio2Face updates include:

- Audio2Emotion, a new feature allowing avatar-emotion controls to be automatically keyed by AI that infers emotion from an audio clip.

- Full-face animation, which enables Omniverse users to direct eye, teeth and tongue motion, in addition to the avatar’s skin, for more complete facial animation.

- Character setup. The Character Transfer retargeting tool now supports full-face animation with easy-to-use tools to define meshes that make up the eyes, teeth and tongue.
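Directing emotions over time can be thought of as keyframing a set of emotion weights and blending between them. The sketch below is a hypothetical illustration of that idea using linear interpolation. It is not the Audio2Face API, and the keyframe format and function names are assumptions made for this example.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t


def emotion_weights_at(keyframes, time):
    """Blend emotion weights at a given time.

    keyframes: list of (time_seconds, {emotion: weight}) with strictly
    increasing times. Emotions missing from a keyframe count as 0.0.
    """
    if time <= keyframes[0][0]:
        return dict(keyframes[0][1])
    if time >= keyframes[-1][0]:
        return dict(keyframes[-1][1])
    for (t0, w0), (t1, w1) in zip(keyframes, keyframes[1:]):
        if t0 <= time <= t1:
            alpha = (time - t0) / (t1 - t0)
            emotions = sorted(set(w0) | set(w1))
            return {e: lerp(w0.get(e, 0.0), w1.get(e, 0.0), alpha) for e in emotions}


# Hypothetical performance: full joy at 0 s, fading to full sadness at 2 s.
keyframes = [
    (0.0, {"joy": 1.0}),
    (2.0, {"joy": 0.0, "sadness": 1.0}),
]
midpoint = emotion_weights_at(keyframes, 1.0)
print(midpoint)
```

Halfway between the two keyframes, the character shows an even mix of both emotions. A production tool would likely offer smoother easing curves than the straight-line blend assumed here.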

Omniverse Machinima capabilities are also expanding. The latest update to the Machinima app includes Audio2Face and Audio2Gesture integration into Sequencer, which allows for easier playback and editing of AI-driven performances. Coupled with pose tracker improvements, the update will allow a user to drive a full performance directly from a single application.

Omniverse Machinima also includes new content from Post Scriptum, Beyond the Wire and Shadow Warrior, supporting partners of the #MadeinMachinima contest.

Lastly, Omniverse DeepSearch is now available for Omniverse Enterprise customers. This allows teams to use the power of AI to intuitively search through massive untagged asset databases. DeepSearch lets users search on qualitative or vague inputs, delivering accurate results to help creators develop the ideal look and lighting for their virtual scenes.

Legendary studio ILM is using DeepSearch to create perfect skies.

Developing Realistic, AI-Driven Digital Humans

Omniverse ACE — also launched at SIGGRAPH — is a collection of cloud-based AI models and services for developers to easily build avatars.

It encompasses NVIDIA’s body of avatar technologies — from vision and speech AI, to natural language processing, to Audio2Face and Audio2Emotion — all running as application programming interfaces in the cloud.

With Omniverse ACE, developers can build, configure and deploy their avatar applications across nearly any engine, in any public or private cloud.

MetaHuman in Unreal Engine image courtesy of Epic Games.

Building Stronger Foundations for Creating More Virtual Worlds

Omniverse Nucleus is the database and collaboration engine of Omniverse. It allows a variety of client applications, renderers and microservices to share and modify representations of virtual worlds.

One of the biggest updates for Nucleus is the new headless single sign-on authorization, which means users can now write tools and carry out tasks without needing to access the user interface to log in.

Additional Omniverse Nucleus updates include:

- Nucleus Navigator, an app for navigating and managing content — now with improved search functionality, as well as support for NGSearch and DeepSearch for Omniverse Enterprise.

- Nucleus Cache, services that can be used to optimize data transfers — now with bug fixes and performance improvements.

Watch Omniverse in Action

Our partners will be showcasing the latest capabilities of Omniverse at SIGGRAPH. These include software and cloud-service vendors such as Adobe, Amazon Web Services (AWS) and Microsoft.

Partners including Dell and HP will also demonstrate the power of RTX-capable, NVIDIA-Certified systems running Omniverse on the show floor, for an on-premises or hybrid approach.

ASUS is working with MoonShine, a visual effects company based in Taiwan known for animation and virtual production. At SIGGRAPH, ASUS and MoonShine will showcase their most comprehensive virtual production solutions, which are supercharged by NVIDIA Omniverse.

Watch the video below to discover how industry-level production processes can be enhanced with ASUS creator solutions and Omniverse.

Other partners will present on topics such as AI and virtual worlds. Check out all of the NVIDIA-powered sessions at SIGGRAPH to learn more about these breakthrough technologies.

NVIDIA Omniverse is available for free. Download it to start creating today.

Join NVIDIA at SIGGRAPH to learn more about the latest Omniverse announcements, watch the company’s special address on demand and see the global premiere of the documentary, The Art of Collaboration: NVIDIA, Omniverse and GTC, on Wednesday, Aug. 10, at 10 a.m. PT.