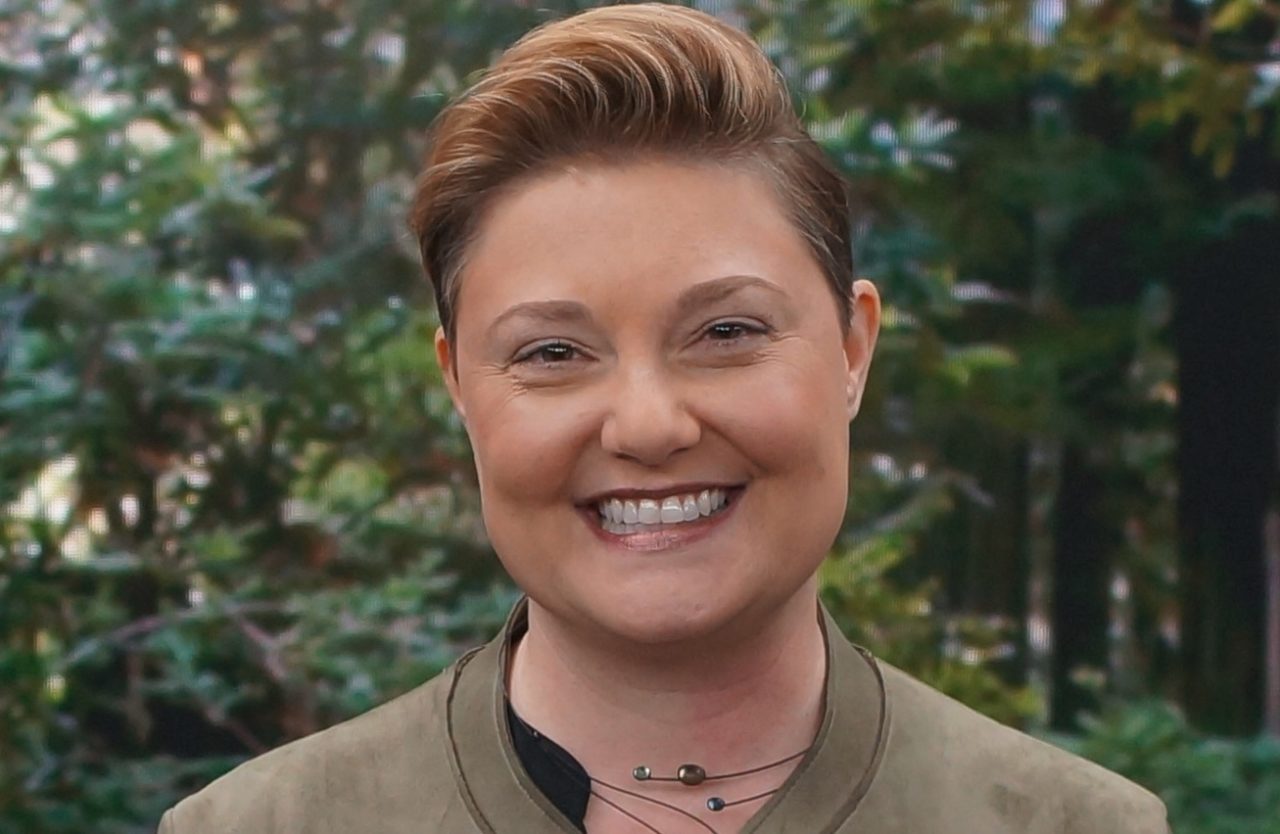

Kathy Baxter, the architect of the ethical AI practice at Salesforce, is helping her team and clients create more responsible technology. To do so, she supports employee education, the inclusion of safeguards in Salesforce technology, and collaboration with other companies to improve ethical AI across industries.

Baxter spoke with AI Podcast host Noah Kravitz about her role at the company, a position she helped create as the need for AI ethicists became apparent.

She’s helped establish practices such as release readiness planning, in which teams brainstorm potential unintended negative consequences of a release, along with ways to mitigate them.

Baxter predicts that more global policies will emerge to help companies define ethical AI and guide them in creating responsible technology.

Key Points From This Episode:

- There are several ways to correct bias in AI. These include editing the training data or editing the model itself (for example, not using race or gender as a factor).

- Einstein is Salesforce’s AI platform, and the company provides in-app guidance through a feature called Einstein Discovery. One of its functions is to alert users when they might be using sensitive variables such as age, race or gender. Administrators can also select variables to exclude from their model to avoid accidental bias.
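The general idea of flagging sensitive variables and letting an administrator exclude them can be illustrated with a short Python sketch. This is not Einstein Discovery's API or Salesforce's implementation: the names `SENSITIVE_VARIABLES`, `flag_sensitive` and `select_features` are hypothetical, and the set of sensitive attributes is an assumption chosen to match the examples above.

```python
# Hypothetical set of sensitive attribute names (an assumption for this sketch;
# a real system would define these per use case and jurisdiction).
SENSITIVE_VARIABLES = {"age", "race", "gender"}


def flag_sensitive(features: list[str]) -> list[str]:
    """Return the feature names that match a known sensitive variable (case-insensitive)."""
    return [name for name in features if name.strip().lower() in SENSITIVE_VARIABLES]


def select_features(features: list[str], excluded: set[str]) -> list[str]:
    """Keep only the features the administrator has not chosen to exclude."""
    excluded_lower = {name.lower() for name in excluded}
    return [name for name in features if name.lower() not in excluded_lower]


if __name__ == "__main__":
    columns = ["Age", "income", "zip_code", "gender", "tenure"]

    # Step 1: warn the user about sensitive variables in the candidate features.
    warnings = flag_sensitive(columns)
    for name in warnings:
        print(f"Warning: '{name}' may be a sensitive variable")

    # Step 2: the administrator excludes the flagged variables from the model.
    kept = select_features(columns, excluded=set(warnings))
    print(kept)
```

Dropping a column is only a first step: other fields, such as `zip_code` in this sketch, can still act as proxies for a sensitive attribute, which is why the flagged list is best treated as a prompt for human review rather than a complete safeguard.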

Tweetables:

“We have to understand that everything that we build and bring into society has an impact.” — Kathy Baxter [2:29]

“One of the magical things about AI is that we can become aware of biases that we might not have known even existed in our business processes in the first place.” — Kathy Baxter [10:18]

You Might Also Like

How Federated Learning Can Help Keep Data Private

Walter De Brouwer, CEO of Doc.ai — a company building a medical research platform that addresses the issue of data privacy with federated learning — talks about the complications of putting data to work in industries such as healthcare.

Good News About Fake News: AI Can Now Help Detect False Information

If only there were a way to separate fake news from real news. Thanks to Vagelis Papalexakis, a professor of computer science at the University of California, Riverside, there is. He discusses his algorithm, which can detect fake news with 75 percent accuracy.

Teaching Families to Embrace AI

Tara Chklovski is CEO and founder of Iridescent, a nonprofit that provides access to hands-on learning opportunities to prepare underrepresented children and adults for the future of work. She talks about Iridescent, the UN’s AI for Good Global Summit and the AI World Championship — part of the AI Family Challenge.

Tune In to the AI Podcast

Get the AI Podcast through iTunes, Google Podcasts, Google Play, Castbox, DoggCatcher, Overcast, PlayerFM, Pocket Casts, Podbay, PodBean, PodCruncher, PodKicker, Soundcloud, Spotify, Stitcher and TuneIn.
