The highly anticipated NVIDIA DLSS 3.5 update, including Ray Reconstruction for NVIDIA Omniverse — a platform for connecting and building custom 3D tools and apps — is now available.

An update to RTX Video Super Resolution (VSR), version 1.5, will be available with tomorrow’s NVIDIA Studio Driver release, which also supports the DLSS 3.5 update in Omniverse and is free for RTX GPU owners. The update delivers greater overall graphical fidelity, upscaling for native videos and support for GeForce RTX 20 Series GPUs.

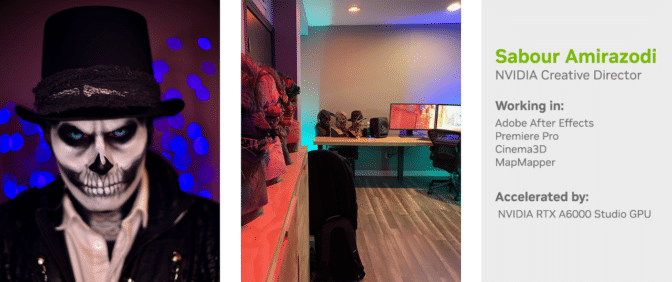

NVIDIA Creative Director and visual effects producer Sabour Amirazodi returns In the NVIDIA Studio to share his Halloween-themed project: a full projection mapping show on his house, featuring haunting songs, frightful animation, spooky props and more.

Get ready for some Halloween magic! 🎃

Check out this amazing artwork by @itsfunkyboy as part of our #SeasonalArtChallenge.👻

Don't miss the chance to showcase your own spooky creations using #SeasonalArtChallenge for a chance to be featured. 🙌 pic.twitter.com/uKcuFmHK4K

— NVIDIA Studio (@NVIDIAStudio) October 23, 2023

Creators can join the #SeasonalArtChallenge by submitting harvest- and fall-themed pieces through November.

The latest Halloween-themed Studio Standouts video features ghouls, creepy monsters, haunted hospitals and dimly lit homes, and is not for the faint of heart.

Remarkable Ray Reconstruction

NVIDIA DLSS 3.5 — featuring Ray Reconstruction — enhances ray-traced image quality on GeForce RTX GPUs by replacing hand-tuned denoisers with an NVIDIA supercomputer-trained AI network that generates higher-quality pixels in between sampled rays.

Previewing content in the viewport, even with high-end hardware, can sometimes offer less-than-ideal image quality, as traditional denoisers require hand-tuning for every scene.

With DLSS 3.5, the AI neural network recognizes a wide variety of scenes, producing high-quality preview images and drastically reducing time spent rendering scenes.

NVIDIA Omniverse and the USD Composer app — featuring the Omniverse RTX Renderer — specialize in real-time preview modes, offering ray-tracing inference and higher-quality previews while building and iterating.

The feature can be enabled by opening “Render Settings” under “Ray Tracing,” opening the “Direct Lighting” tab and ensuring “New Denoiser (experimental)” is turned on.

The ‘Haunted Sanctuary’ Returns

Sabour Amirazodi’s “home-made” installation, Haunted Sanctuary, has become an annual tradition, much to the delight of his neighbors.

Amirazodi begins by staging props, such as pumpkins and skeletons, around his house.

Then he carefully positions his projectors — building protective casings to keep them both safe and blended into the scene.

“In the last few years, I’ve rendered 32,862 frames of 5K animation out of the Octane Render Engine. The loop has now become 21 minutes long, and the musical show is another 28 minutes!” — Sabour Amirazodi

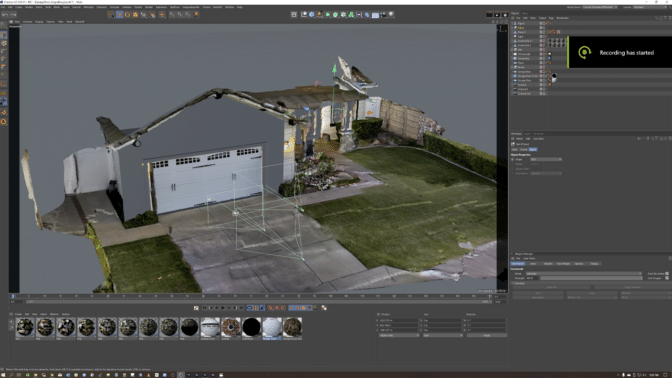

Building a virtual scene onto a physical object requires projection mapping, so Amirazodi used NVIDIA GPU-accelerated MadMapper software and its structured light-scan feature to map custom visuals onto his house. He achieved this by connecting a DSLR camera to his mobile workstation, which was powered by an NVIDIA RTX A5000 GPU.

He used the camera to photograph a series of lines, then translated the captured photos into an image from the projector’s point of view on which to base a 3D model. Basic camera-matching tools found in Cinema 4D helped recreate the scene. Afterward, Amirazodi applied various mapping and perspective correction edits.
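For readers curious how a structured light scan can relate camera pixels to projector pixels, here is a minimal sketch. It uses Gray-code stripe patterns, one common structured-light scheme, and it is an assumption chosen for illustration: the article does not say which pattern MadMapper projects, and this is not MadMapper's implementation. All function names are hypothetical.

```python
# Illustrative sketch only. Gray-code stripes are one common structured-light
# scheme; this is NOT MadMapper's implementation, and every name is hypothetical.

def gray_encode(n):
    """Convert a column index to its Gray code (adjacent columns differ by one bit)."""
    return n ^ (n >> 1)

def gray_decode(g):
    """Invert gray_encode by XOR-ing together all right shifts of g."""
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n

def stripe_patterns(width):
    """Build one stripe pattern per bit, most significant bit first.

    patterns[b][x] is 1 if projector column x is lit in pattern b.
    """
    bits = max(1, (width - 1).bit_length())
    return [
        [(gray_encode(x) >> (bits - 1 - b)) & 1 for x in range(width)]
        for b in range(bits)
    ]

def decode_column(observed_bits):
    """Recover the projector column from the lit/unlit sequence one camera pixel saw."""
    g = 0
    for bit in observed_bits:
        g = (g << 1) | bit
    return gray_decode(g)

if __name__ == "__main__":
    patterns = stripe_patterns(8)
    # Simulate a camera pixel that happens to look at projector column 5.
    seen = [pattern[5] for pattern in patterns]
    print(decode_column(seen))  # prints 5
```

Repeating the same idea with horizontal stripes would give the projector row as well, so each camera pixel could be paired with a projector pixel. A real scan also has to threshold noisy photos into lit and unlit values, which this sketch skips.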

Next, Amirazodi rigged and animated the characters. GPU acceleration in the viewport enabled smooth interactivity with complex 3D models.

“I like having a choice between several third-party NVIDIA GPU-accelerated 3D renderers, such as V-Ray, OctaneRender and Redshift in Cinema 4D,” noted Amirazodi.

“I switched to NVIDIA graphics cards in 2017. GPUs are the only way to go for serious creators.” — Sabour Amirazodi

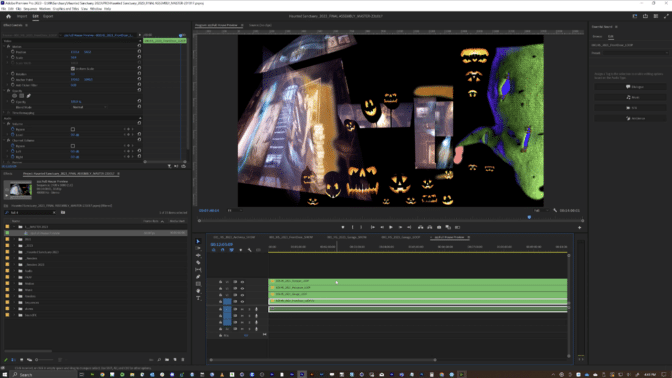

Amirazodi then spent hours on his RTX 6000 workstation creating and rendering out all the animations, assembling them in Adobe After Effects and compositing them on the scanned canvas in MadMapper. There, he crafted individual scenes to render out as chunks and assembled them in Adobe Premiere Pro. Remarkably, he repeated this workflow for every projector.

Once satisfied with the sequences, Amirazodi encoded them using Adobe Media Encoder and loaded them onto BrightSign digital players, all networked to run the show synchronously.

GPU acceleration streamlined Amirazodi’s workflow, saving him countless hours. “After Effects has numerous plug-ins that are GPU-accelerated. Plus, Adobe Premiere Pro and Media Encoder use the new dual encoders found in the Ada generation of NVIDIA RTX 6000 GPUs, cutting my export times in half,” he said.

Amirazodi’s careful efforts are all in the Halloween spirit — creating a hauntingly memorable experience for his community.

“The hard work and long nights all become worth it when I see the smile on my kids’ faces and all the joy it brings to the entire neighborhood,” he reflected.

Discover more of Amirazodi’s work on IMDb.

Follow NVIDIA Studio on Instagram, Twitter and Facebook. Access tutorials on the Studio YouTube channel and get updates directly in your inbox by subscribing to the Studio newsletter.