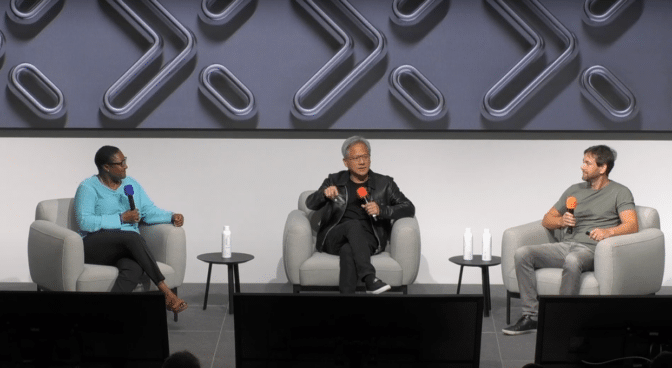

In celebration of Zoox’s 10th anniversary, NVIDIA founder and CEO Jensen Huang recently joined the robotaxi company’s CEO, Aicha Evans, and its cofounder and CTO, Jesse Levinson, to discuss the latest in autonomous vehicle (AV) innovation and experience a ride in the Zoox robotaxi.

In a fireside chat at Zoox’s headquarters in Foster City, Calif., the trio reflected on the two companies’ decade of collaboration. Evans and Levinson highlighted how Zoox pioneered the concept of a robotaxi purpose-built for ride-hailing and created groundbreaking innovations along the way, using NVIDIA technology.

“The world has never seen a robotics company like this before,” said Huang. “Zoox started out solely as a sustainable robotics company that delivers robots into the world as a fleet.”

Since 2014, Zoox has been on a mission to create fully autonomous, bidirectional vehicles purpose-built for ride-hailing services. This sets it apart in an industry largely focused on retrofitting existing cars with self-driving technology.

A decade later, the company is operating its robotaxi, powered by NVIDIA GPUs, on public roads.

Computing at the Core

Zoox robotaxis are, at their core, supercomputers on wheels. They’re built on multiple NVIDIA GPUs dedicated to processing the enormous amounts of data generated in real time by their sensors.

The sensor array includes cameras, lidar, radar, long-wave infrared sensors and microphones. The onboard computing system processes the raw sensor data and fuses it into a coherent picture of the vehicle’s surroundings.

The processed data then flows through a perception engine and prediction module to planning and control systems, enabling the vehicle to navigate complex urban environments safely.
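To make that flow concrete, here is a minimal Python sketch of a perception, prediction, planning and control loop. It is an illustration only, not Zoox's software: every name in it (`TrackedObject`, `perceive`, `predict`, `plan`, `control`, `drive_step`) is hypothetical, the world is reduced to one dimension along a lane, and prediction assumes constant velocity.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    position_m: float  # distance ahead of the vehicle along its lane, in meters
    speed_mps: float   # change in that distance per second (negative = closing in)


def perceive(fused_detections):
    """Perception: wrap fused (position, speed) detections as tracked objects."""
    return [TrackedObject(p, v) for p, v in fused_detections]


def predict(objects, horizon_s):
    """Prediction: constant-velocity extrapolation of each object's position."""
    return [o.position_m + o.speed_mps * horizon_s for o in objects]


def plan(predicted_positions_m, cruise_speed_mps, safe_gap_m, horizon_s):
    """Planning: pick a target speed that preserves a safe gap over the horizon."""
    ahead = [p for p in predicted_positions_m if p >= 0]
    if not ahead:
        return cruise_speed_mps
    nearest = min(ahead)
    # Fastest speed that still leaves safe_gap_m to the nearest object.
    max_speed = max(0.0, (nearest - safe_gap_m) / horizon_s)
    return min(cruise_speed_mps, max_speed)


def control(current_speed_mps, target_speed_mps, gain=0.5):
    """Control: proportional acceleration command toward the target speed."""
    return gain * (target_speed_mps - current_speed_mps)


def drive_step(fused_detections, current_speed_mps,
               cruise_speed_mps=10.0, safe_gap_m=5.0, horizon_s=2.0):
    """Run one pass of the pipeline and return an acceleration in m/s^2."""
    objects = perceive(fused_detections)
    predicted = predict(objects, horizon_s)
    target = plan(predicted, cruise_speed_mps, safe_gap_m, horizon_s)
    return control(current_speed_mps, target)
```

With an empty road, `drive_step([], 8.0)` asks the vehicle to speed up toward cruise speed. With a stationary object 15 meters ahead, `drive_step([(15.0, 0.0)], 10.0)` returns a negative value, a braking command.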

NVIDIA GPUs deliver the immense computing power required for the Zoox robotaxis’ autonomous capabilities and continuous learning from new experiences.

Using Simulation as a Virtual Proving Ground

Key to Zoox’s AV development process is its extensive use of simulation. The company uses NVIDIA GPUs and software tools to run a wide array of simulations, testing its autonomous systems in virtual environments before real-world deployment.

These simulations range from synthetic scenarios to replays of real-world scenarios created using data collected from test vehicles. Zoox uses retrofitted Toyota Highlanders equipped with the same sensor and compute packages as its robotaxis to gather driving data and validate its autonomous technology.

This data is then fed back into simulation environments, where it can be used to create countless variations and replays of scenarios and agent interactions.
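One simple way to picture scenario variation is to perturb the parameters of a logged scene. The Python sketch below is a hypothetical illustration, not Zoox's tooling: the `Scenario` fields, the `make_variations` function and the 20 percent spread are all assumptions chosen for the example.

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scenario:
    ego_speed_mps: float         # speed of the test vehicle in the logged scene
    pedestrian_offset_m: float   # distance ahead at which a pedestrian crosses
    pedestrian_speed_mps: float  # pedestrian walking speed


def make_variations(logged, count, seed=0, spread=0.2):
    """Return `count` copies of a logged scenario with each field jittered.

    Each field is scaled by a random factor in [1 - spread, 1 + spread].
    A seeded generator keeps the set of variations reproducible.
    """
    rng = random.Random(seed)

    def jitter(value):
        return value * (1.0 + rng.uniform(-spread, spread))

    return [
        replace(
            logged,
            ego_speed_mps=jitter(logged.ego_speed_mps),
            pedestrian_offset_m=jitter(logged.pedestrian_offset_m),
            pedestrian_speed_mps=jitter(logged.pedestrian_speed_mps),
        )
        for _ in range(count)
    ]
```

Calling `make_variations(Scenario(10.0, 20.0, 1.5), count=100, seed=42)` yields 100 reproducible variants of a single recorded scene, each of which could be replayed in a simulator.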

Zoox also uses what it calls “adversarial simulations,” carefully crafted scenarios designed to test the limits of the autonomous systems and uncover potential edge cases.
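The general idea of searching for the hardest case can be shown with a toy example. The Python sketch below is not Zoox's method: it assumes a simple stopping-distance model (reaction time plus constant braking), and the names `stopping_margin_m` and `find_worst_case` are hypothetical. It scans a grid of scenario parameters and returns the combination that leaves the smallest safety margin.

```python
from itertools import product


def stopping_margin_m(gap_m, speed_mps, reaction_s=0.5, decel_mps2=5.0):
    """Distance left over after the vehicle stops for an obstacle gap_m ahead.

    Stopping distance = reaction distance + braking distance.
    A negative margin means the toy vehicle would not stop in time.
    """
    stopping_distance = speed_mps * reaction_s + speed_mps ** 2 / (2 * decel_mps2)
    return gap_m - stopping_distance


def find_worst_case(gaps_m, speeds_mps):
    """Grid search: return the (gap, speed) pair with the smallest margin."""
    return min(product(gaps_m, speeds_mps),
               key=lambda case: stopping_margin_m(*case))


worst_gap, worst_speed = find_worst_case([10.0, 20.0, 30.0], [5.0, 10.0, 15.0])
```

In this toy model the worst case is simply the shortest gap at the highest speed. Real adversarial scenarios involve many more parameters and agents, which is why a search procedure is useful.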

The company’s comprehensive approach to simulation allows it to rapidly iterate and improve its autonomous driving software, bolstering AV safety and performance.

“We’ve been using NVIDIA hardware since the very start,” said Levinson. “It’s a huge part of our simulator, and we rely on NVIDIA GPUs in the vehicle to process everything around us in real time.”

A Neat Way to Seat

Zoox’s robotaxi has a unique bidirectional design and carriage-style seating, optimized for autonomous operation and passenger comfort. The design eliminates the traditional concepts of a car’s “front” and “back” and provides equal comfort and safety for all occupants.

“I came to visit you when you were zero years old, and the vision was compelling,” Huang said, reflecting on Zoox’s evolution over the years. “The challenge was incredible. The technology, the talent — it is all world-class.”

Using NVIDIA GPUs and tools, Zoox is poised to redefine urban mobility, pioneering a future of safe, efficient and sustainable autonomous transportation for all.

From Testing Miles to Market Projections

As the AV industry gains momentum, recent projections highlight the potential for explosive growth in the robotaxi market. Guidehouse Insights forecasts over 5 million robotaxi deployments by 2030, with numbers expected to surge to almost 34 million by 2035.
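For a sense of scale, the growth rate implied by those two figures can be worked out with a short Python calculation. This is back-of-the-envelope arithmetic rather than part of the forecast: it treats "over 5 million" and "almost 34 million" as exactly 5 million and 34 million and assumes smooth compound growth between 2030 and 2035.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Annual rate r such that start_value * (1 + r) ** years == end_value."""
    return (end_value / start_value) ** (1 / years) - 1


# Rounded figures from the forecast: 5 million in 2030, 34 million in 2035.
rate = compound_annual_growth_rate(5_000_000, 34_000_000, years=2035 - 2030)
print(f"Implied annual growth: {rate:.1%}")
```

Under those assumptions, the implied growth is roughly 47 percent per year.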

The regulatory landscape reflects this progress: 38 companies currently hold valid permits to test AVs with safety drivers in California, and Zoox is one of only six companies permitted to test AVs without safety drivers in the state.

As the industry advances, Zoox has created a next-generation robotaxi by combining cutting-edge onboard computing with extensive simulation and development.

In the image at top, NVIDIA founder and CEO Jensen Huang stands with Zoox CEO Aicha Evans and Zoox cofounder and CTO Jesse Levinson in front of a Zoox robotaxi.