Editor’s note: As of June 6, 2025, NVIDIA Edify is no longer available as an NVIDIA NIM microservice preview. To explore available visual AI models, visit build.nvidia.com.

The pace of technology innovation has accelerated in the past year, most dramatically in AI. And in 2024, there was no better place to be a part of creating those breakthroughs than NVIDIA Research.

NVIDIA Research comprises hundreds of extremely bright people pushing the frontiers of knowledge, not just in AI, but across many areas of technology.

In the past year, NVIDIA Research laid the groundwork for future improvements in GPU performance with major research discoveries in circuits, memory architecture and sparse arithmetic. The team’s novel graphics techniques continue to raise the bar for real-time rendering. And we developed new methods that make AI more efficient: they require less energy, take fewer GPU cycles and deliver even better results.

But the most exciting developments of the year have been in generative AI.

We’re now able to generate not just images and text, but also 3D models, music and sounds. We’re also gaining better control over what is generated, from realistic humanoid motion to sequences of images with consistent subjects.

The application of generative AI to science has resulted in high-resolution weather forecasts that are more accurate than conventional numerical weather models. AI models have given us the ability to accurately predict how blood glucose levels respond to different foods. Embodied generative AI is being used to develop autonomous vehicles and robots.

And that was just this year. What follows is a deeper dive into some of NVIDIA Research’s greatest generative AI work in 2024. Of course, we continue to develop new models and methods for AI, and expect even more exciting results next year.

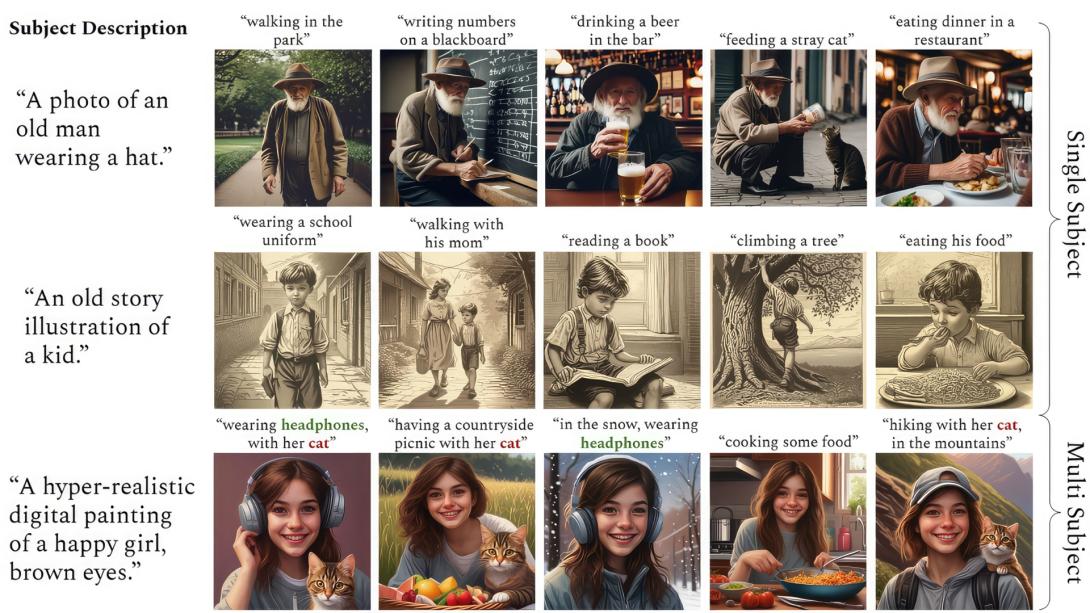

ConsiStory: AI-Generated Images With Main Character Energy

ConsiStory, a collaboration between researchers at NVIDIA and Tel Aviv University, makes it easier to generate multiple images with a consistent main character — an essential capability for storytelling use cases such as illustrating a comic strip or developing a storyboard.

The researchers’ approach introduced a technique called subject-driven shared attention, which reduces the time it takes to generate consistent imagery from 13 minutes to around 30 seconds.
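To give a feel for what sharing attention across images can mean, here is a minimal NumPy sketch. It is not the ConsiStory implementation: the function name `shared_attention`, the tensor shapes and the boolean `subject_mask` are all assumptions made for illustration. The idea shown is that each image attends to its own tokens plus only the subject tokens of the other images in the batch.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def shared_attention(q, k, v, subject_mask):
    """Hypothetical sketch of attention shared across a batch of images.

    q, k, v:       arrays of shape (n_images, n_tokens, dim)
    subject_mask:  bool array of shape (n_images, n_tokens), True where a
                   token is assumed to belong to the main subject
    """
    n_images, _, dim = q.shape
    out = np.empty_like(v)
    for i in range(n_images):
        # Image i sees all of its own tokens, but only the subject
        # tokens of every other image in the batch.
        allowed = subject_mask.copy()
        allowed[i] = True
        keys = k[allowed]    # (n_allowed, dim)
        values = v[allowed]  # (n_allowed, dim)
        scores = q[i] @ keys.T / np.sqrt(dim)
        out[i] = softmax(scores) @ values
    return out
```

With an all-False mask this reduces to ordinary per-image self-attention, which makes the sharing step easy to isolate when experimenting.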

Read the ConsiStory paper.

Edify 3D: Generative AI Enters a New Dimension

NVIDIA Edify 3D is a foundation model that enables developers and content creators to quickly generate 3D objects that can be used to prototype ideas and populate virtual worlds.

Edify 3D helps creators quickly ideate, lay out and conceptualize immersive environments with AI-generated assets. Novice and experienced content creators can use text and image prompts to harness the model, which is now part of the NVIDIA Edify multimodal architecture for developing visual generative AI.

Read the Edify 3D paper and watch the video on YouTube.

GluFormer: AI Predicts Blood Sugar Levels Four Years Out

Researchers from the Weizmann Institute of Science, Tel Aviv-based startup Pheno.AI and NVIDIA led the development of GluFormer, an AI model that can predict an individual’s future glucose levels and other health metrics based on past glucose monitoring data.

The researchers showed that, after adding dietary intake data into the model, GluFormer can also predict how a person’s glucose levels will respond to specific foods and dietary changes, enabling precision nutrition. The research team validated GluFormer across 15 other datasets and found it generalizes well to predict health outcomes for other groups, including those with prediabetes, type 1 and type 2 diabetes, gestational diabetes and obesity.

Read the GluFormer paper.

LATTE3D: Enabling Near-Instant Generation, From Text to 3D Shape

Another 3D generator released by NVIDIA Research this year is LATTE3D, which converts text prompts into 3D representations within a second — like a speedy, virtual 3D printer. Crafted in a popular format used for standard rendering applications, the generated shapes can be easily served up in virtual environments for developing video games, ad campaigns, design projects or virtual training grounds for robotics.

Read the LATTE3D paper.

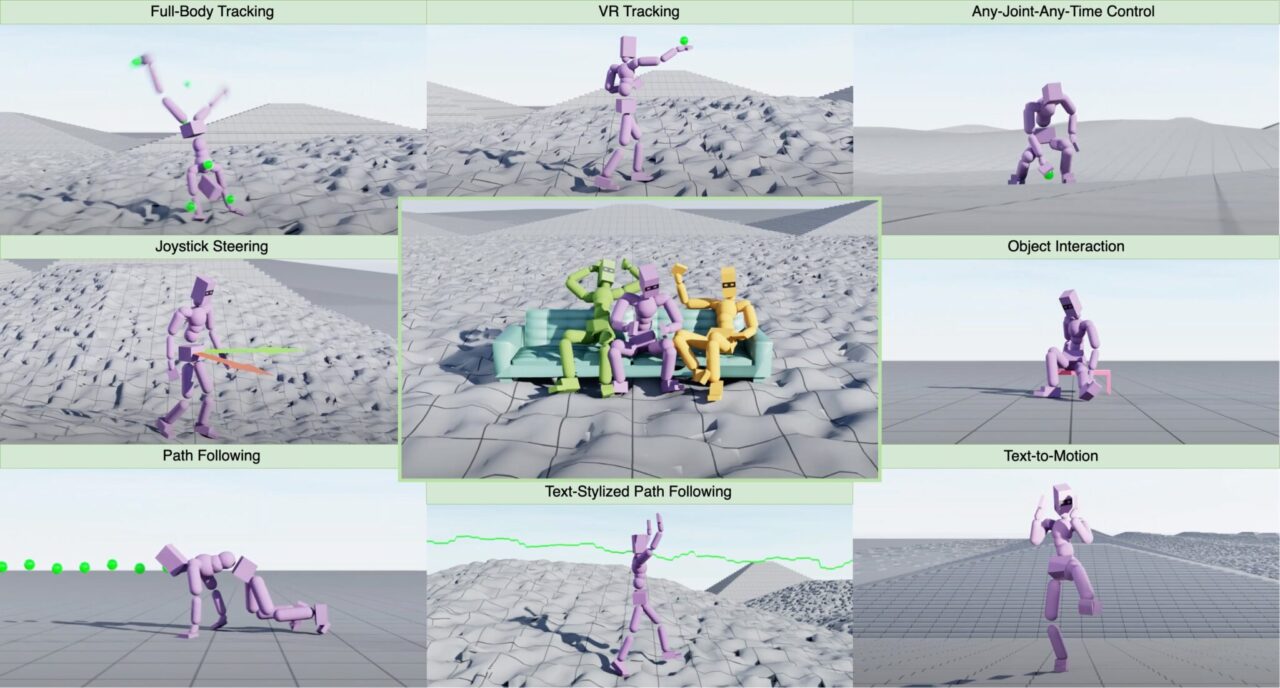

MaskedMimic: Reconstructing Realistic Movement for Humanoid Robots

To advance the development of humanoid robots, NVIDIA researchers introduced MaskedMimic, an AI framework that applies inpainting — the process of reconstructing complete data from an incomplete, or masked, view — to descriptions of motion.

Given partial information, such as a text description of movement, or head and hand position data from a virtual reality headset, MaskedMimic can fill in the blanks to infer full-body motion. It’s become part of NVIDIA Project GR00T, a research initiative to accelerate humanoid robot development.
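The masked input described above can be pictured with a small sketch. This only illustrates the masking step, assuming a toy six-joint skeleton and hypothetical names (`JOINTS`, `mask_pose`); filling in the hidden joints is the job of the learned model and is not shown here.

```python
import numpy as np

# Hypothetical toy skeleton; a real humanoid has many more joints.
JOINTS = ["head", "left_hand", "right_hand", "pelvis", "left_foot", "right_foot"]

def mask_pose(pose, observed_joints):
    """Hide every joint that is not observed.

    pose:             array of shape (n_joints, 3) with xyz positions
    observed_joints:  indices of joints the sensor actually reports
    Returns the masked pose (NaN where hidden) and the boolean mask.
    """
    mask = np.zeros(pose.shape[0], dtype=bool)
    mask[observed_joints] = True
    masked = np.where(mask[:, None], pose, np.nan)
    return masked, mask

# A VR headset setup reports only the head and the two hands.
pose = np.arange(18, dtype=float).reshape(6, 3)
masked, mask = mask_pose(pose, [0, 1, 2])
```

An inpainting-style model would take `masked` and `mask` as input and predict the hidden rows, here the pelvis and feet.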

Read the MaskedMimic paper.

StormCast: Boosting Weather Prediction, Climate Simulation

In the field of climate science, NVIDIA Research announced StormCast, a generative AI model for emulating atmospheric dynamics. While other machine learning models trained on global data have a spatial resolution of about 30 kilometers and a temporal resolution of six hours, StormCast achieves a 3-kilometer, hourly scale.
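A quick back-of-the-envelope calculation shows how large that jump in resolution is. The sketch assumes a uniform square horizontal grid and ignores vertical levels; the function name `refinement` is made up for this example.

```python
def refinement(coarse_km, fine_km, coarse_hours, fine_hours):
    """Compare two grid resolutions (assumes a uniform square grid)."""
    per_axis = coarse_km / fine_km        # finer along each horizontal axis
    cells = per_axis ** 2                 # more grid cells per unit area
    steps = coarse_hours / fine_hours     # more time steps per day
    return per_axis, cells, steps, cells * steps

per_axis, cells, steps, total = refinement(30, 3, 6, 1)
print(per_axis, cells, steps, total)
```

Under these assumptions, going from 30 kilometers to 3 kilometers is 10 times finer along each axis, or 100 times more cells over the same area, and hourly output is six times more frequent than six-hourly output.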

The researchers trained StormCast on approximately three-and-a-half years of climate data for the central U.S. from the U.S. National Oceanic and Atmospheric Administration (NOAA). When applied with precipitation radars, StormCast offers forecasts with lead times of up to six hours that are up to 10% more accurate than NOAA’s state-of-the-art 3-kilometer regional weather prediction model.

Read the StormCast paper, written in collaboration with researchers from Lawrence Berkeley National Laboratory and the University of Washington.

NVIDIA Research Sets Records in AI, Autonomous Vehicles, Robotics

Through 2024, models that originated in NVIDIA Research set records across benchmarks for AI training and inference, route optimization, autonomous driving and more.

NVIDIA cuOpt, an optimization AI microservice used for logistics improvements, holds 23 world-record benchmarks. The NVIDIA Blackwell platform demonstrated world-class performance on MLPerf industry benchmarks for AI training and inference.

In the field of autonomous vehicles, Hydra-MDP, an end-to-end autonomous driving framework by NVIDIA Research, achieved first place on the End-To-End Driving at Scale track of the Autonomous Grand Challenge at CVPR 2024.

In robotics, FoundationPose, a unified foundation model for 6D object pose estimation and tracking, obtained first place on the BOP leaderboard for model-based pose estimation of unseen objects.

Learn more about NVIDIA Research, which has hundreds of scientists and engineers worldwide. NVIDIA Research teams are focused on topics including AI, computer graphics, computer vision, self-driving cars and robotics.