Ten miles in from Long Island’s Atlantic coast, Shinjae Yoo is revving his engine.

The computational scientist and machine learning group lead at the U.S. Department of Energy’s Brookhaven National Laboratory is one of many researchers gearing up to run quantum computing simulations on a supercomputer for the first time, thanks to new software.

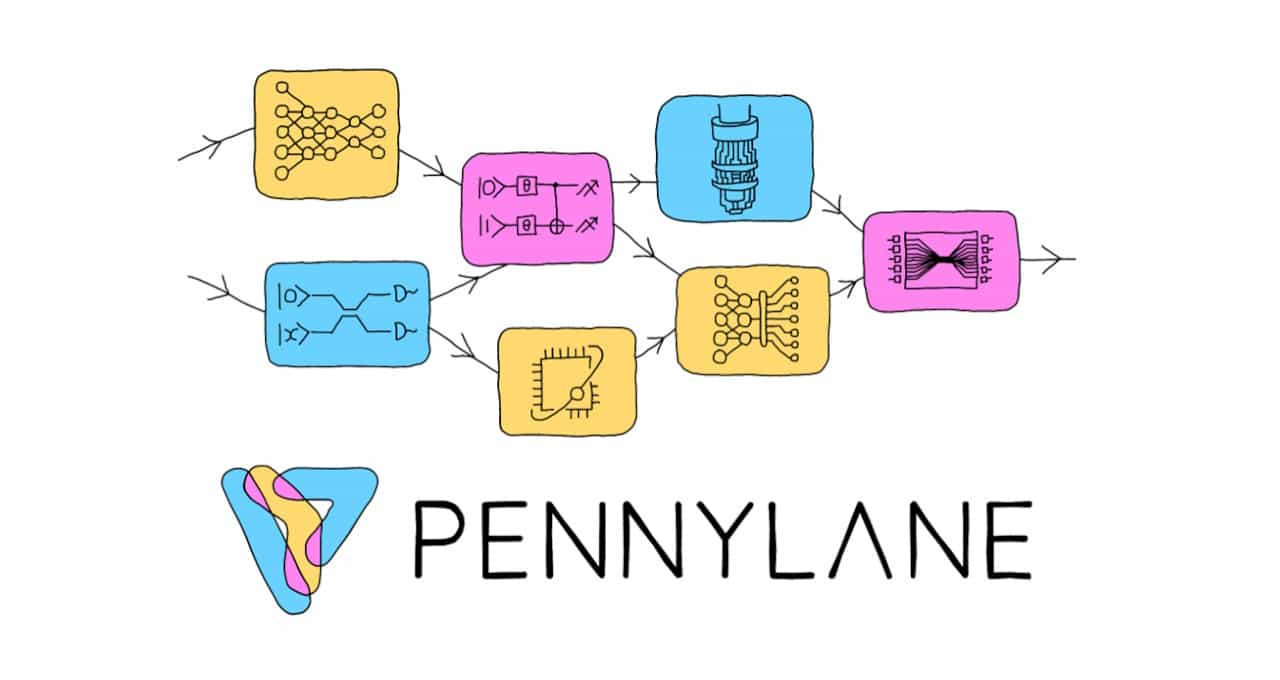

Yoo’s engine, the Perlmutter supercomputer at the National Energy Research Scientific Computing Center (NERSC), is using the latest version of PennyLane, a quantum programming framework from Toronto-based Xanadu. The open-source software, which builds on the NVIDIA cuQuantum software development kit, lets simulations run on high-performance clusters of NVIDIA GPUs.

The performance is key because researchers like Yoo need to process ocean-size datasets. He’ll run his programs across as many as 256 NVIDIA A100 Tensor Core GPUs on Perlmutter to simulate about three dozen qubits — the powerful calculators quantum computers use.

That’s about twice the number of qubits most researchers can model these days.
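A rough back-of-the-envelope sketch shows why qubit counts climb so slowly as GPUs are added. It assumes a full state-vector simulation, where n qubits need 2^n complex amplitudes, and it assumes 16 bytes per amplitude (double-precision complex) and 40 GiB of memory per A100. Neither figure comes from the article, and the helper names are hypothetical.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: complex128, i.e. two 8-byte floats


def statevector_bytes(n_qubits: int) -> int:
    """Memory needed to hold every amplitude of an n-qubit state vector."""
    return (2 ** n_qubits) * BYTES_PER_AMPLITUDE


def max_qubits(n_gpus: int, gib_per_gpu: int = 40) -> int:
    """Upper bound on qubits whose state vector fits in pooled GPU memory.

    Assumption: all GPU memory is available for amplitudes. Real runs also
    need workspace and communication buffers, so practical limits are lower.
    """
    total_bytes = n_gpus * gib_per_gpu * 2 ** 30
    amplitudes = total_bytes // BYTES_PER_AMPLITUDE
    # Largest n with 2**n <= amplitudes
    return amplitudes.bit_length() - 1


if __name__ == "__main__":
    print(statevector_bytes(36) / 2 ** 40, "TiB for 36 qubits")
    print(max_qubits(1), "qubits on one GPU (upper bound)")
    print(max_qubits(256), "qubits on 256 GPUs (upper bound)")
```

Each added qubit doubles the memory required, so going from one GPU to 256 buys only eight more qubits under these assumptions. The bound for 256 GPUs sits slightly above the "about three dozen" quoted here, as expected once working memory is set aside.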

Powerful, Yet Easy to Use

The so-called multi-node version of PennyLane, used in tandem with the NVIDIA cuQuantum SDK, simplifies the complex job of accelerating massive simulations of quantum systems.

“This opens the door to letting even my interns run some of the largest simulations — that’s why I’m so excited,” said Yoo, whose team has six projects using PennyLane in the pipeline.

His work aims to advance high-energy physics and machine learning. Other researchers use quantum simulations to take chemistry and materials science to new levels.

Quantum computing is alive in corporate R&D centers, too.

For example, Xanadu is helping companies like Rolls-Royce develop quantum algorithms to design state-of-the-art jet engines for sustainable aviation and Volkswagen Group invent more powerful batteries for electric cars.

Four More Projects on Perlmutter

Meanwhile, at NERSC, at least four other projects are in the works this year using multi-node PennyLane, according to Katherine Klymko, who leads the quantum computing program there. They include efforts from NASA Ames and the University of Alabama.

“Researchers in my field of chemistry want to study molecular complexes too large for classical computers to handle,” she said. “Tools like PennyLane let them extend what they can currently do classically to prepare for eventually running algorithms on large-scale quantum computers.”

Blending AI, Quantum Concepts

PennyLane is the product of a novel idea. It adapts popular deep learning techniques like backpropagation and tools like PyTorch to programming quantum computers.
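To give a flavor of the idea, here is a minimal sketch in plain Python. It is not PennyLane's API. It treats a one-qubit circuit as a differentiable function and trains its single parameter by gradient descent, the way a neural network weight would be trained. The gradient comes from the parameter-shift rule, a standard technique for rotation gates that is swapped in here as an assumption; the article does not say which method PennyLane applies in any given case. All function names are hypothetical.

```python
import math


def expval_z(theta: float) -> float:
    """Expectation value of Z after applying RX(theta) to the |0> state."""
    # RX(theta)|0> = cos(theta/2)|0> - i*sin(theta/2)|1>
    amp0 = complex(math.cos(theta / 2), 0.0)
    amp1 = complex(0.0, -math.sin(theta / 2))
    # <Z> = P(0) - P(1)
    return abs(amp0) ** 2 - abs(amp1) ** 2


def parameter_shift_grad(f, theta: float) -> float:
    """Exact derivative of f for a rotation-gate parameter via two shifted runs."""
    return (f(theta + math.pi / 2) - f(theta - math.pi / 2)) / 2


def train(theta: float, lr: float = 0.4, steps: int = 50) -> float:
    """Plain gradient descent that minimizes <Z> with respect to theta."""
    for _ in range(steps):
        theta -= lr * parameter_shift_grad(expval_z, theta)
    return theta


if __name__ == "__main__":
    theta = train(0.5)
    print(theta, expval_z(theta))
```

Starting from 0.5, the parameter settles near pi, where the qubit is flipped to the |1> state and the Z expectation reaches its minimum of -1.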

Xanadu designed the code to run across as many types of quantum computers as possible, so the software got traction in the quantum community soon after its introduction in a 2018 paper.

“There was engagement with our content, making cutting-edge research accessible, and people got excited,” recalled Josh Izaac, director of product at Xanadu and a quantum physicist who was an author of the paper and a developer of PennyLane.

Calls for More Qubits

A common comment on the PennyLane forum these days is, “I want more qubits,” said Lee J. O’Riordan, a senior quantum software developer at Xanadu, responsible for PennyLane’s performance.

“When we started work in 2022 with cuQuantum on a single GPU, we got 10x speedups pretty much across the board … we hope to scale by the end of the year to 1,000 nodes — that’s 4,000 GPUs — and that could mean simulating more than 40 qubits,” O’Riordan said.

Scientists are still formulating the questions they’ll address with that performance — the kind of problem they like to have.

Companies designing quantum computers will use the boost to test ideas for building better systems. Their work feeds a virtuous circle, enabling new software features in PennyLane that, in turn, improve system performance.

Scaling Well With GPUs

O’Riordan saw early on that GPUs were the best vehicle for scaling PennyLane’s performance. Last year, he co-authored a paper on a method for splitting a quantum program across more than 100 GPUs to simulate more than 60 qubits, divided into many 30-qubit sub-circuits.

“We wanted to extend our work to even larger workloads, so when we heard NVIDIA was adding multi-node capability to cuQuantum, we wanted to support it as soon as possible,” he said.

Within four months, multi-node PennyLane was born.

“For a big, distributed GPU project, that was a great turnaround time. Everyone working on cuQuantum helped make the integration as easy as possible,” O’Riordan said.

A Xanadu blog details how developers can simulate large-scale systems with more than 30 qubits using PennyLane and cuQuantum.

The team is still collecting data, but so far on “sample-based workloads, we see almost linear scaling,” he said.

Or, as NVIDIA founder and CEO Jensen Huang might say, “The more you buy, the more you save.”