AI is the rocket fuel that will get us to Mars. It’s the vaccine that will save us on Earth. And it’s the people who aspire to make a dent in the universe.

Our latest “I Am AI” video, unveiled during NVIDIA CEO Jensen Huang’s keynote address at the GPU Technology Conference, pays tribute to the scientists, researchers, artists and many others making historic advances with AI.

To grasp AI’s global impact, consider: the technology is expected to generate $2.9 trillion worth of business value by 2021, according to Gartner.

It’s on course to classify 2 trillion galaxies to understand the universe’s origin, and to zero in on the molecular structure of the drugs needed to treat coronavirus and cancer.

As depicted in the latest video, AI has an artistic side, too. It can paint as well as Bob Ross. And its ability to assist in the creation of original compositions is worthy of the London Symphony Orchestra, which plays the accompanying theme music, a piece that began as a composition written by a recurrent neural network.

AI can also synthesize speech from text well enough to narrate a short documentary. And that’s just what it did.

These fireworks and more are the story of I Am AI. Sixteen companies and research organizations are featured in the video. The action moves fast, so grab a bowl of popcorn, kick back and enjoy this tour of some of the highlights of AI in 2020.

Reaching Into Outer Space

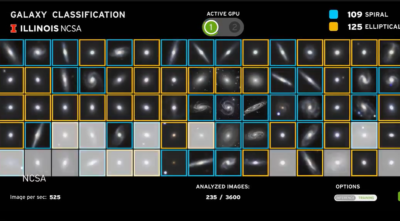

Understanding the formation of the structure and the amount of matter in the universe requires observing and classifying celestial objects such as galaxies. With an estimated 2 trillion galaxies to examine in the observable universe, it’s what cosmologists call a “computational grand challenge.”

The recent Dark Energy Survey collected data from over 300 million galaxies. To study them with unprecedented precision, the Center for Artificial Intelligence Innovation at the National Center for Supercomputing Applications at the University of Illinois at Urbana-Champaign teamed up with the Argonne Leadership Computing Facility at the U.S. Department of Energy’s Argonne National Laboratory.

NCSA tapped the Galaxy Zoo project, a crowdsourced astronomy effort that labeled millions of galaxies observed by the Sloan Digital Sky Survey. Using that data, an AI model with 99.6 percent accuracy can now chew through unlabeled galaxies to ID them and accelerate scientific research.

NASA has set a goal of human exploration of Mars by the mid-2030s. To accomplish this feat safely, scientists must understand how the sun and space weather will affect the journey. High-resolution images of the sun taken by NASA’s Solar Dynamics Observatory are aiding scientists in this effort.

Researchers at NASA’s Goddard Space Flight Center have developed algorithms using NVIDIA GPUs to analyze the immense number of solar images taken since 2010. They are using errors in the images to help identify origins of energetic particles in Earth’s orbit that could damage interplanetary spacecraft. They are also using the same images to track solar surface flows to support better models for predicting the weather in space.

Restoring Voice and Limb

Voiceitt — a Tel Aviv-based startup that’s developed signal processing, speech recognition technologies and deep neural nets — offers a synthesized voice for those whose speech has been distorted. The company’s app converts unintelligible speech into easily understood speech.

The University of North Carolina at Chapel Hill’s Neuromuscular Rehabilitation Engineering Laboratory and North Carolina State University’s Active Robotic Sensing (ARoS) Laboratory develop experimental robotic limbs used in the labs.

The two research units have been working on walking environment recognition, aiming to develop environmental adaptive controls for prostheses. They’ve been running convolutional neural networks (CNNs) on NVIDIA GPUs for prediction. And they aren’t alone.

Helping in the Pandemic

Whiteboard Coordinator remotely monitors the temperature of people entering buildings to minimize exposure to COVID-19. The Chicago-based startup provides temperature-screening rates of more than 2,000 people per hour at checkpoints. Whiteboard Coordinator and NVIDIA bring AI to the edge of healthcare with NVIDIA Clara Guardian, an application framework that simplifies the development and deployment of smart sensors.

Viz.ai uses AI to inform neurologists about strokes much faster than traditional methods. With the onset of the pandemic, Viz.ai moved to help combat the new virus with an app that alerts care teams to positive COVID-19 results.

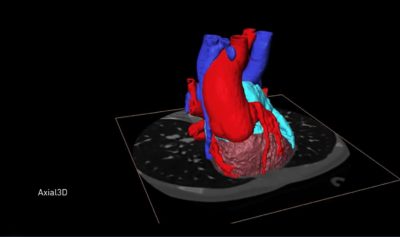

Axial3D is a Belfast, Northern Ireland, startup that enlists AI to accelerate the production time of 3D-printed models from medical images, used in planning surgeries. Having redirected its resources toward COVID-19, the company is now supplying face shields and is among those building ventilators for the U.K.’s National Health Service. It has also begun 3D printing of swab kits for testing as well as valves for respirators. (Check out their on-demand webinar.)

KiwiBot, a cheery-eyed food delivery bot from Berkeley, Calif., has found a way to provide COVID-19 services along its route. It’s autonomously delivering masks, sanitizers and other supplies with its robot-to-human service.

(All the companies above are members of the NVIDIA Inception program for AI startups.)

Masterpieces of Art, Compositions and Narration

Researchers from London-based startup Oxia Palus demonstrated in a paper, “Raiders of the Lost Art,” that AI could be used to recreate lost works of art that had been painted over. Beneath Picasso’s 1902 The Crouching Beggar lies a mountainous landscape that art curators believe is of Parc del Laberint d’Horta, near Barcelona.

They also know that Santiago Rusiñol painted Parc del Laberint d’Horta. Using a modified X-ray fluorescence image of The Crouching Beggar and Santiago Rusiñol’s Terraced Garden in Mallorca, the researchers applied neural style transfer, running on NVIDIA GPUs, to reconstruct the lost artwork, creating Rusiñol’s Parc del Laberint d’Horta.
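For readers curious about what neural style transfer optimizes, here is a minimal sketch of the two losses from the standard formulation of the technique. It is not the Oxia Palus pipeline, whose details aren't covered here. In practice the feature maps come from a pretrained CNN; the toy lists, the function names and the `alpha` and `beta` weights below are illustrative assumptions.

```python
# Sketch of the losses used in standard neural style transfer.
# A "feature map" here is a list of channels, each a flat list of
# activations (hypothetical toy data, not output from a real network).

def gram_matrix(features):
    """Channel-by-channel correlations, normalized by positions per channel."""
    n = len(features[0])
    return [
        [sum(a * b for a, b in zip(ci, cj)) / n for cj in features]
        for ci in features
    ]


def style_loss(generated, style):
    """Mean squared difference between the two Gram matrices."""
    g, s = gram_matrix(generated), gram_matrix(style)
    c = len(g)
    return sum(
        (g[i][j] - s[i][j]) ** 2 for i in range(c) for j in range(c)
    ) / (c * c)


def content_loss(generated, content):
    """Mean squared difference between raw activations."""
    diffs = [
        (a - b) ** 2
        for cg, cc in zip(generated, content)
        for a, b in zip(cg, cc)
    ]
    return sum(diffs) / len(diffs)


def total_loss(generated, content, style, alpha=1.0, beta=1000.0):
    """Weighted sum that an optimizer would minimize over the generated image."""
    return alpha * content_loss(generated, content) + beta * style_loss(generated, style)


# Toy check: reversing the spatial positions changes the content
# but leaves the channel correlations (the "style") untouched.
style_feats = [[1.0, 2.0, 3.0, 4.0], [4.0, 3.0, 2.0, 1.0]]
reversed_feats = [list(reversed(ch)) for ch in style_feats]

print(style_loss(reversed_feats, style_feats))    # 0.0
print(content_loss(reversed_feats, style_feats))  # 5.0
```

The Gram matrix discards where a feature occurs and keeps only which features occur together. That is why the style of one painting can be imposed on the layout recovered from another image.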

For GTC a few years ago, Luxembourg-based AIVA AI composed the start — melodies and accompaniments — of what would become an original classical music piece meriting an orchestra. Since then we’ve found it one.

Late last year, the London Symphony Orchestra agreed to play the moving piece, which was arranged for the occasion by musician John Paesano and was recorded at Abbey Road Studios.

NVIDIA alum Helen was our voice-over professional for videos and events for years. When she left the company, we thought about how we might continue the tradition. We turned to what we know: AI. But there weren’t publicly available models up to the task.

A team from NVIDIA’s Applied Deep Learning Research group published the answer to the problem: “Flowtron: an Autoregressive Flow-based Generative Network for Text-to-Speech Synthesis.” Licensing Helen’s voice, we trained the network on dozens of hours of it.

First, Helen produced multiple takes, guided by our creative director. Then our creative director was able to generate multiple takes from Flowtron and adjust parameters of the model to get the desired outcome. And what you hear is “Helen” speaking in the I Am AI video narration.